In the rush to build ever more powerful AI models, a quiet crisis brews beneath the surface: how do we trust the data that trains them? Enterprises pour billions into machine learning, yet murky dataset origins leave them exposed to lawsuits, regulatory fines, and outright model failures. Zero-knowledge (ZK) proofs for training data emerge as an elegant fix, letting developers prove AI model provenance without spilling sensitive details. This isn't just tech wizardry; it's the backbone for compliant, reliable AI in a world demanding transparency.

Consider healthcare giants sharing patient records across borders, or finance firms auditing trading algorithms. Traditional audits demand full data disclosure, grinding innovation to a halt. Zero-knowledge proofs flip the script: they mathematically attest that a model was trained on specific licensed, ethically sourced datasets while keeping the actual data hidden. I see this as a balanced pivot: privacy preserved, trust amplified.

Why Dataset Provenance Matters More Than Ever

Regulators aren't waiting. By 2026, laws like SB 1786 mandate provenance tags on generative AI outputs, from altered videos to synthetic audio. Compliance teams are scrambling because vendor-supplied models carry hidden risks; one tainted dataset can torpedo an entire deployment. Data lineage helps engineers debug pipelines, but provenance goes further: it verifies where data came from and whether you have the rights to use it.

Take the vendor black box. You deploy their LLM, but what if it ingested pirated books or biased medical scans? Without proof of what went in, the liability can land on you. ZK tech bridges this gap, offering the zero-knowledge AI attestations regulators crave without exposing trade secrets.

5 Key Benefits of ZK Proofs for AI Provenance

- Privacy-preserving verification: Prove dataset origins and integrity without exposing data, as in the ZKPROV framework.

- Compliance with data licensing: Verify adherence to licensing and regulations like SB 1786 without revealing sensitive info, per ExecMesh and ZKPROV.

- Reduced legal risks: Mitigate risks from unverified training data, addressing regulatory pressures noted by KuppingerCole.

- Faster audits: Enable succinct proofs for quick verification of training, as in verifiable fine-tuning protocols.

- Enhanced model trust: Boost confidence via verifiable provenance, supporting trusted AI in healthcare with zkFL-Health.

Unpacking Zero-Knowledge Proofs in AI Contexts

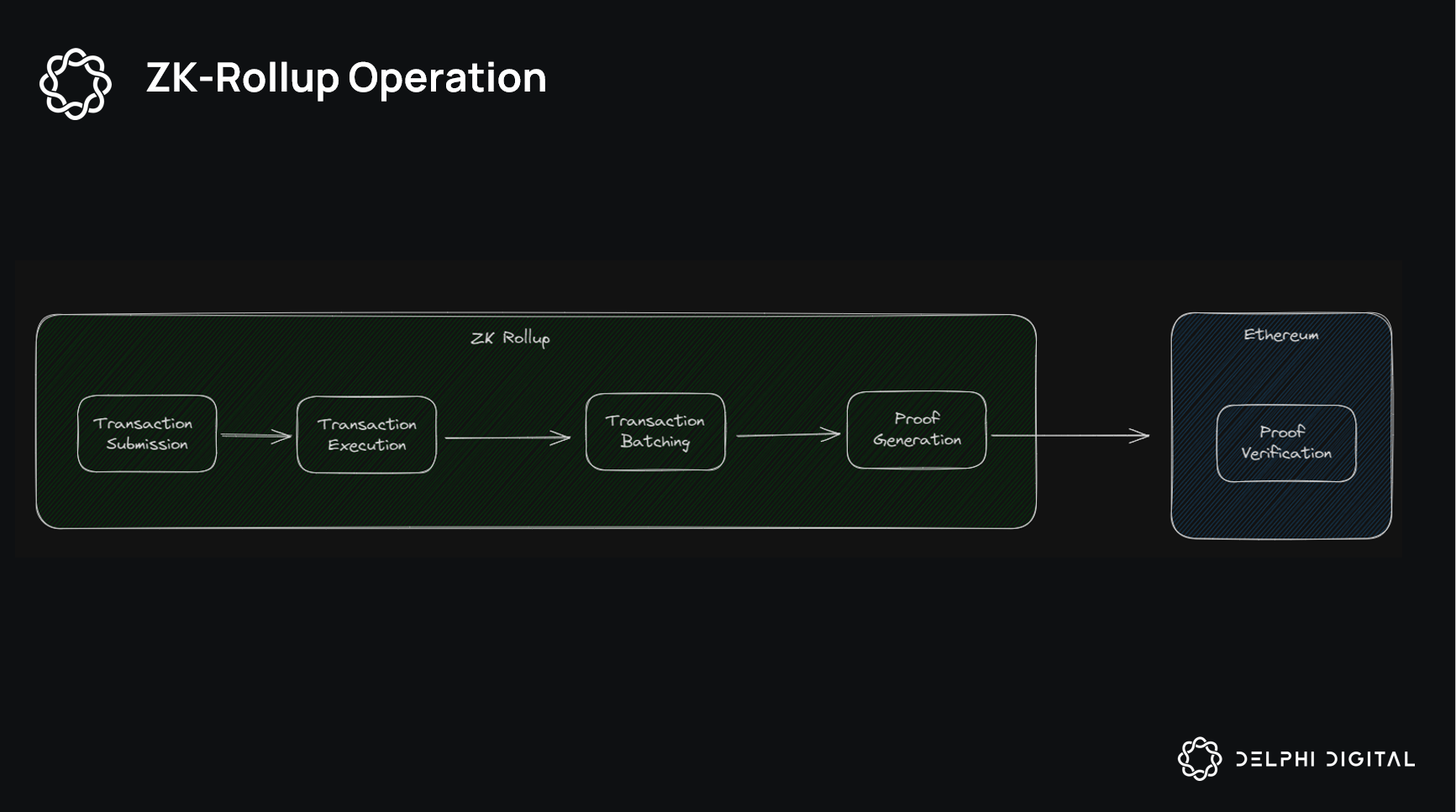

At its core, a zero-knowledge proof lets party A convince party B that a statement is true without revealing anything beyond that fact. In AI, this means generating a compact proof that your model was derived from the dataset behind a published hash commitment, trained under specific parameters. No peeking at records; just cryptographic assurance.

This shines in high-stakes fields. Finance models can prove they used clean market data; healthcare AIs can confirm HIPAA-compliant training. It's not hype: the building blocks exist today, and recursive proofs offer a path to scaling them to massive datasets.
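To make "convince without revealing" concrete, here is a minimal sketch of one classic zero-knowledge proof of knowledge: a Schnorr proof made non-interactive with the Fiat-Shamir heuristic. It is an illustrative swap-in, not the construction used by any framework named in this article, and it proves only that the prover knows a secret `x` behind a public value `y = G^x mod P`, not anything about training. The group parameters `P`, `Q`, `G` and the `context` label are assumptions chosen for readability; they are far too small to be secure, since anyone could recover `x` by brute force.

```python
import hashlib
import secrets

# Toy parameters (assumption, insecure): P = 2*Q + 1 is a safe prime and
# G = 4 is a quadratic residue, so it generates the subgroup of prime order Q.
P, Q, G = 2039, 1019, 4


def challenge(*values: int, context: bytes = b"") -> int:
    """Fiat-Shamir: hash the public transcript (plus a context label) into Z_Q."""
    h = hashlib.sha256(context)
    for v in values:
        h.update(v.to_bytes(32, "big"))
    return int.from_bytes(h.digest(), "big") % Q


def prove(x: int, context: bytes) -> tuple:
    """Prove knowledge of x with y = G^x mod P without revealing x."""
    y = pow(G, x, P)
    r = secrets.randbelow(Q - 1) + 1          # fresh random nonce; never reuse it
    t = pow(G, r, P)                          # prover's commitment
    c = challenge(G, y, t, context=context)   # challenge derived by hashing
    s = (r + c * x) % Q                       # response; r masks x
    return y, t, s


def verify(y: int, t: int, s: int, context: bytes) -> bool:
    """Accept iff G^s == t * y^c (mod P), which holds when s = r + c*x."""
    c = challenge(G, y, t, context=context)
    return pow(G, s, P) == (t * pow(y, c, P)) % P


if __name__ == "__main__":
    secret = secrets.randbelow(Q - 1) + 1
    y, t, s = prove(secret, b"dataset-commitment-v1")
    print(verify(y, t, s, b"dataset-commitment-v1"))            # True
    print(verify(y, t, (s + 1) % Q, b"dataset-commitment-v1"))  # False: tampered response
```

The verifier sees only `y`, `t`, and `s`; because the random nonce `r` masks `x` in the response, the transcript reveals nothing useful about the secret (at realistic parameter sizes). Proving systems for training provenance rely on broadly similar ingredients, such as commitments and hashed challenges, but over vastly larger statements, which is where zk-SNARK machinery comes in.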

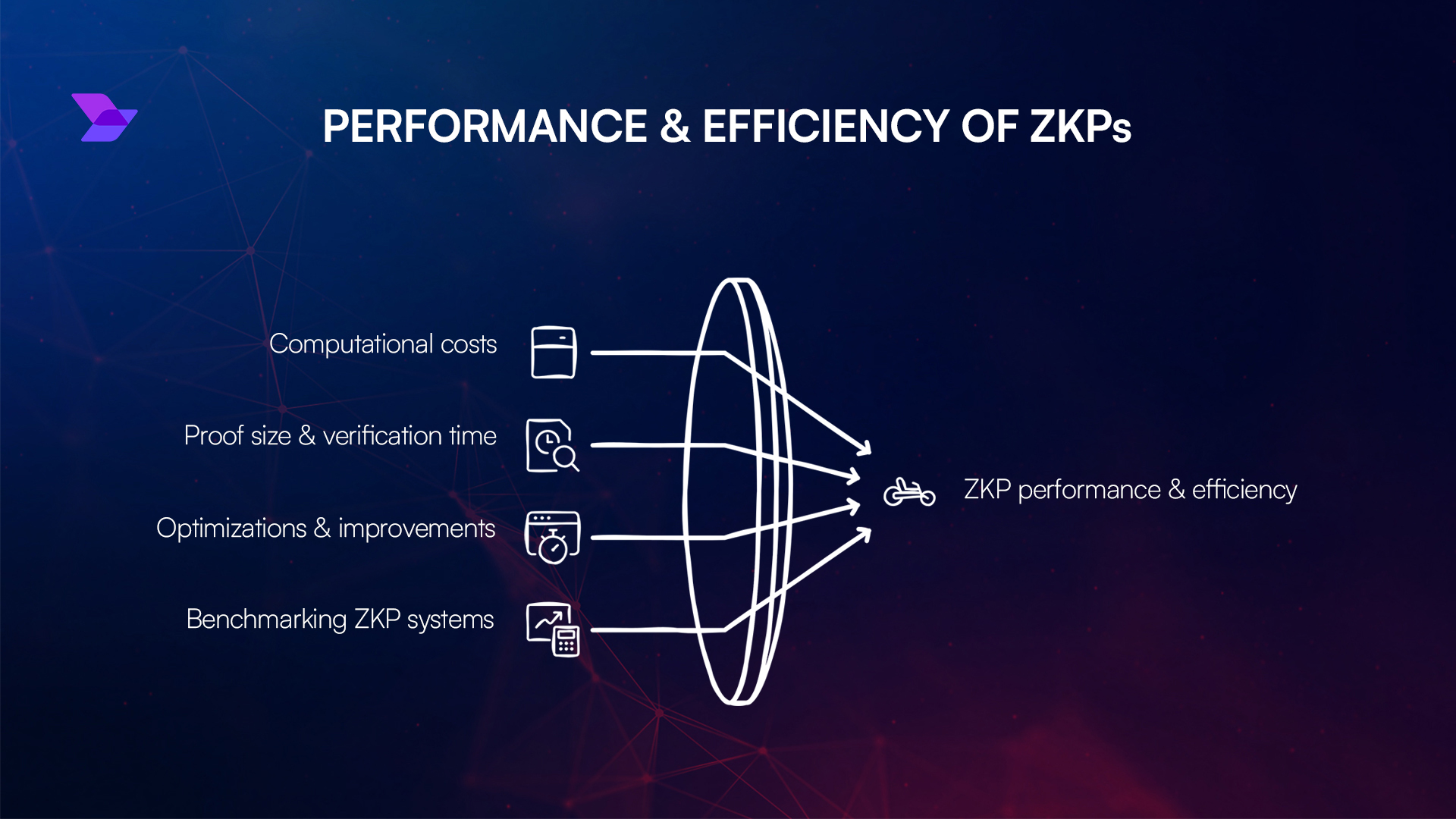

Critics argue proofs add compute overhead, and generating them is genuinely expensive. zk-SNARKs, however, keep the proofs themselves tiny and fast to verify. The real win? Asymmetric returns on trust: a one-time proving cost buys a check that any auditor can rerun cheaply.

Pioneering Frameworks Reshaping the Landscape

Enter ZKPROV, the brainchild of Mina Namazi and team. This framework binds LLMs to authorized datasets via succinct proofs, verifying that training data was properly licensed without leaking the model or the data. Imagine releasing a model: auditors check the proof, and they're done. No more fishing expeditions.

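Building on that, Hasan Akgul's verifiable fine-tuning protocol produces succinct proofs that a model was derived from a public initialization under a declared training program and an auditable dataset commitment. That lets it enforce policies such as "no toxic data" without exposing the data itself. Then there's zkFL-Health, which combines federated learning with ZK proofs and trusted execution environments (TEEs) for medical AI. Multiple institutions can collaborate without eroding trust, while proofs confirm that training was both correct and private.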

These are still research prototypes, but they aren't idle curiosities. ZKPROV tackles provenance gaps head-on, while zkFL-Health targets real-world clinics. As 2026 provenance strategies take shape, such tools put early adopters ahead, blending security with speed.

Yet adoption hinges on more than proofs; it demands seamless integration. Tools like ExecMesh push boundaries with cryptographic commitments for verifiable AI provenance, generating audit trails without waiting for full ZK tooling to mature. This hybrid appeals to compliance officers craving quick wins amid regulatory heat from laws like SB 1786.
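Commitment-based audit trails can be illustrated with a hash chain, a standard technique in which every log entry includes the digest of the entry before it, so editing any past entry breaks every later link. This is a hypothetical sketch, not ExecMesh's actual design or API, which this article does not describe; the `AuditTrail` class and its event fields are invented for illustration. It involves no zero-knowledge proof, and it only detects tampering if the latest digest is published somewhere the log's operator cannot quietly rewrite.

```python
import hashlib
import json
from dataclasses import dataclass, field

GENESIS = "0" * 64  # placeholder "previous digest" for the very first entry


def _digest(prev: str, event: dict) -> str:
    # Canonical JSON (sorted keys) so the same event always hashes identically.
    body = json.dumps({"prev": prev, "event": event}, sort_keys=True)
    return hashlib.sha256(body.encode("utf-8")).hexdigest()


@dataclass
class AuditTrail:
    """Hypothetical append-only log in which each entry commits to its predecessor."""
    entries: list = field(default_factory=list)

    def head(self) -> str:
        """Digest of the latest entry; publishing it anchors the whole log."""
        return self.entries[-1]["digest"] if self.entries else GENESIS

    def append(self, event: dict) -> str:
        prev = self.head()
        digest = _digest(prev, event)
        self.entries.append({"prev": prev, "event": dict(event), "digest": digest})
        return digest

    def verify(self) -> bool:
        """Recompute every link; an edited entry fails at the point it was changed."""
        prev = GENESIS
        for entry in self.entries:
            if entry["prev"] != prev or _digest(prev, entry["event"]) != entry["digest"]:
                return False
            prev = entry["digest"]
        return True


if __name__ == "__main__":
    trail = AuditTrail()
    shard_hash = hashlib.sha256(b"example training shard").hexdigest()
    trail.append({"step": "ingest", "dataset_sha256": shard_hash})
    trail.append({"step": "train", "config": {"epochs": 3}})
    print(trail.verify())  # True
    trail.entries[0]["event"]["step"] = "edited"
    print(trail.verify())  # False
```

The design choice here is deliberately modest: a hash chain gives tamper evidence, not confidentiality, which is why it can ship before heavier ZK tooling is ready.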

In healthcare, where ethical data sharing defines progress, zero-knowledge proofs unlock collaborative training. Picture hospitals training a shared model on their scans without ever pooling them: zkFL-Health's federated setup, backed by ZK attestations, keeps each contribution private, yet the final model can still prove where its training data came from. No more siloed innovation; instead, robust AI that regulators can bless without invasive probes. I view this as essential ballast in volatile fields, where one data breach erodes years of trust.

Comparison of Key ZK Frameworks for AI Training Data Provenance

| Framework | Strengths | Use Cases | Maturity |
|---|---|---|---|
| ZKPROV (LLM binding) | Binds LLMs to authorized datasets via ZKPs without revealing sensitive data or parameters; ensures trustworthy provenance and confidentiality | Verifying LLM training on authorized datasets | Research prototype (arXiv 2025) |
| Verifiable Fine-Tuning (policy enforcement) | Succinct ZKPs prove model derived from public init under declared training program and auditable dataset commitment; enforces policies | Policy-compliant fine-tuning of LLMs | Research prototype (arXiv 2025) |
| zkFL-Health (federated medical) | Combines federated learning with ZKPs and TEEs for privacy-preserving, verifiably correct collaborative training | Multi-institutional medical AI deployments | Research prototype (arXiv 2025) |
| ExecMesh (audit trails) | Commitment-based verification and audit trail generation for cryptographic AI provenance | Compliance and audit trails in AI systems | Preprint stage (early implementation) |

Navigating Challenges and Overhead

Skeptics highlight proof generation costs, especially for billion-parameter models. Fair point; early zk-SNARKs guzzled resources, and proving is still the expensive step. But recursive aggregation, which folds many proofs into one, and hardware accelerators are changing that narrative, yielding proofs of a few kilobytes that verify in seconds. The Montreal AI Ethics Institute's experiments confirm that ZK can enforce compliance without crippling pipelines, a net positive for training-data provenance.

Another hurdle? Standardization. Who is the trusted certifier for dataset hashes? Emerging oracles and multi-party computation address this by letting stakeholders co-sign commitments. My opinion: ignore the noise. The asymmetric upside dwarfs the friction; firms that neglect provenance now risk 2026 fines that make compute costs look trivial.

Beyond tech, cultural shifts matter. Data engineers chase lineage for debugging; legal eagles demand provenance for rights audits. ZK unifies them, proving training-data licensing through commitments that travel with the models. KuppingerCole nails it: untraceable vendor data is your risk. Flip it with proofs, and models become assets, not liabilities.

Enterprise Strategies for Tomorrow

Forward-thinking players embed ZK early: hash datasets at ingestion, train with proof-enabled libraries, and release models with attestations. Finance outfits verify clean data feeds without exposing them; content creators prove the origins of synthetic media, as new laws require. House of ZK's proof-carrying intelligence extends this further, covering not just origins but runtime correctness too.
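Hashing datasets at ingestion is often done with a Merkle tree: a single root hash commits to every record, and an auditor can later check that one specific record was included using only a logarithmic number of sibling hashes. The sketch below is a minimal, assumed construction (SHA-256, prefix bytes separating leaves from inner nodes, and the last node duplicated on odd-sized levels as a simplifying choice); it is not taken from ZKPROV or any other framework named here. The inclusion check reveals the record being checked, so it is not zero-knowledge on its own; a ZK system would instead prove statements about the committed data inside the proof.

```python
import hashlib


def leaf_hash(record: bytes) -> bytes:
    # 0x00 prefix keeps leaf hashes distinct from inner-node hashes.
    return hashlib.sha256(b"\x00" + record).digest()


def node_hash(left: bytes, right: bytes) -> bytes:
    return hashlib.sha256(b"\x01" + left + right).digest()


def _pad(level: list) -> list:
    # Simplifying assumption: duplicate the last node when a level has odd length.
    return level + [level[-1]] if len(level) % 2 else level


def merkle_root(records: list) -> bytes:
    """Commitment to the whole dataset, computed once at ingestion."""
    if not records:
        raise ValueError("cannot commit to an empty dataset")
    level = [leaf_hash(r) for r in records]
    while len(level) > 1:
        level = _pad(level)
        level = [node_hash(level[i], level[i + 1]) for i in range(0, len(level), 2)]
    return level[0]


def inclusion_proof(records: list, index: int) -> list:
    """Sibling hashes from leaf to root, each tagged with whether it sits on the left."""
    level = [leaf_hash(r) for r in records]
    proof = []
    while len(level) > 1:
        level = _pad(level)
        sibling = index ^ 1
        proof.append((level[sibling], sibling < index))
        level = [node_hash(level[i], level[i + 1]) for i in range(0, len(level), 2)]
        index //= 2
    return proof


def verify_inclusion(record: bytes, proof: list, root: bytes) -> bool:
    """Rebuild the path from one record up to the root and compare."""
    acc = leaf_hash(record)
    for sibling, sibling_is_left in proof:
        acc = node_hash(sibling, acc) if sibling_is_left else node_hash(acc, sibling)
    return acc == root


if __name__ == "__main__":
    records = [b"doc-a", b"doc-b", b"doc-c"]
    root = merkle_root(records)
    print(root.hex())  # the commitment you would publish at ingestion
    proof = inclusion_proof(records, 1)
    print(verify_inclusion(b"doc-b", proof, root))  # True
    print(verify_inclusion(b"doc-x", proof, root))  # False
```

Publishing only the root keeps the dataset private while still pinning it down: any later change to a record, or to the record order, produces a different root.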

NIH-backed work on ethical AI in healthcare is a case in point: verified sharing from medical sources strengthens datasets, with ZK ensuring nothing leaks. Now scale that to enterprises: imagine supply chains where AI provenance proofs cascade from raw data to the deployed bot. This builds ecosystems resilient to scrutiny.

The proof is in deployment. icme.io spotlights how ZKPs let creators attest compliance without revealing their data, which is vital for regulated outputs. Preprints such as Artificial Intelligence-enhanced ZKPs hint at self-optimizing proofs, blending AI with cryptography to lighten the load. My take: this convergence accelerates trustworthy ML, rewarding a balanced portfolio of privacy and verifiability.

Embracing zero-knowledge AI attestations isn't optional; it's the pivot from opaque hype to durable value. As frameworks mature and regulations tighten, those wielding ZK proofs will command the field, turning data shadows into beacons of certainty.
