Imagine building the next breakthrough AI model while regulators and users demand ironclad proof that your training data came from licensed, ethical sources, all without you spilling a single byte of that precious dataset. Sounds impossible? Enter zero-knowledge proofs for AI training data provenance. These cryptographic tools let you verify a model's provenance and back up 'trust me, it's legit!' with a proof instead of a promise, without revealing the secrets. At ZKModelProofs.com, we're all about making this a reality, and the tech is exploding right now.

Let's break it down. Zero-knowledge proofs, or ZKPs, are like proving you know the password to a safe without saying what it is. In AI, they confirm a model was trained on specific, committed datasets (think hashes or commitments) without exposing the raw data. This tackles head-on the black-box nightmare of modern machine learning, where tainted data leads to biased or illegal models. No more finger-pointing; just verifiable claims.
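To make the "password to a safe" analogy concrete, here is a minimal sketch of a classic zero-knowledge proof of knowledge: a Schnorr proof made non-interactive with the Fiat-Shamir heuristic. It is not what ZKPROV or any zkML system uses internally; the group parameters `P`, `Q`, `G` are deliberately tiny toy values chosen for readability, and the function names are assumptions made for this illustration.

```python
import hashlib
import secrets

# Toy Schnorr group: P = 2*Q + 1 with P and Q prime; G = 4 generates the
# subgroup of order Q. These sizes are illustrative only and NOT secure.
P, Q, G = 2039, 1019, 4


def challenge(*values: int) -> int:
    """Fiat-Shamir challenge: hash the public transcript into Z_Q."""
    data = b"|".join(str(v).encode() for v in values)
    return int.from_bytes(hashlib.sha256(data).digest(), "big") % Q


def prove(secret: int) -> tuple[int, int, int]:
    """Prove knowledge of `secret` such that public = G^secret mod P."""
    public = pow(G, secret, P)
    nonce = secrets.randbelow(Q - 1) + 1   # fresh randomness for every proof
    commitment = pow(G, nonce, P)
    c = challenge(G, public, commitment)
    response = (nonce + c * secret) % Q
    return public, commitment, response


def verify(public: int, commitment: int, response: int) -> bool:
    """Check G^response == commitment * public^c; the secret is never sent."""
    c = challenge(G, public, commitment)
    return pow(G, response, P) == (commitment * pow(public, c, P)) % P
```

The verifier only ever sees `public`, `commitment`, and `response`. Proving statements about a whole training run needs far heavier machinery, but the shape is the same: a public claim, a private witness, and a check that passes only if the prover really holds the witness.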

ZKPROV Steps Up: Bind Datasets, Models, and Outputs Seamlessly

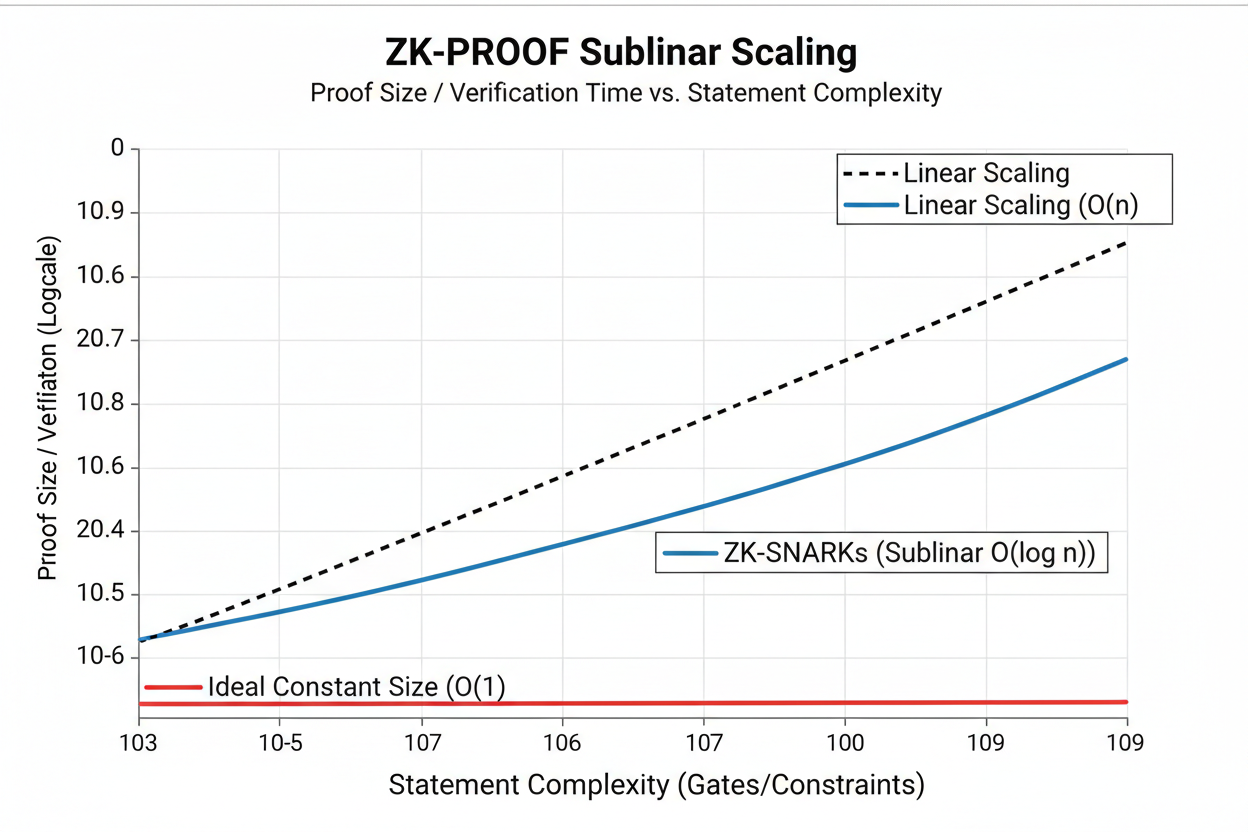

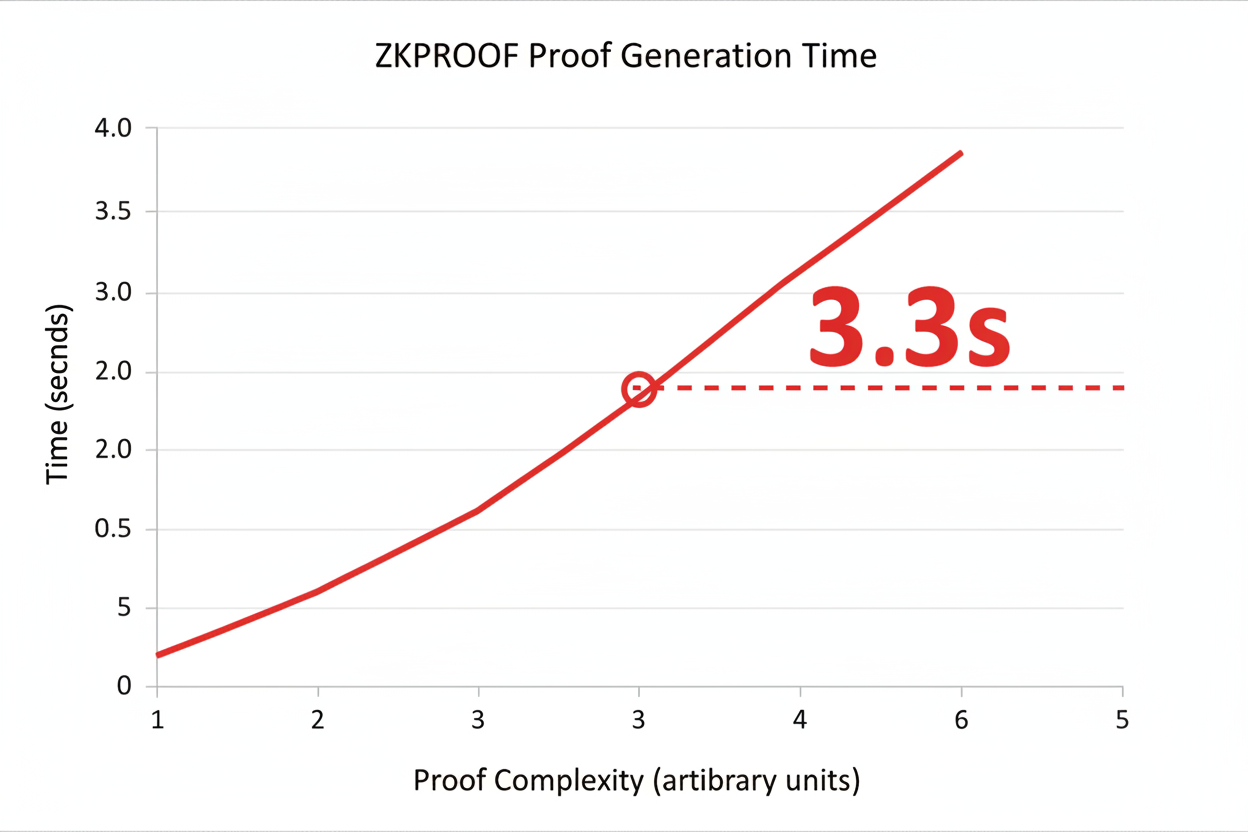

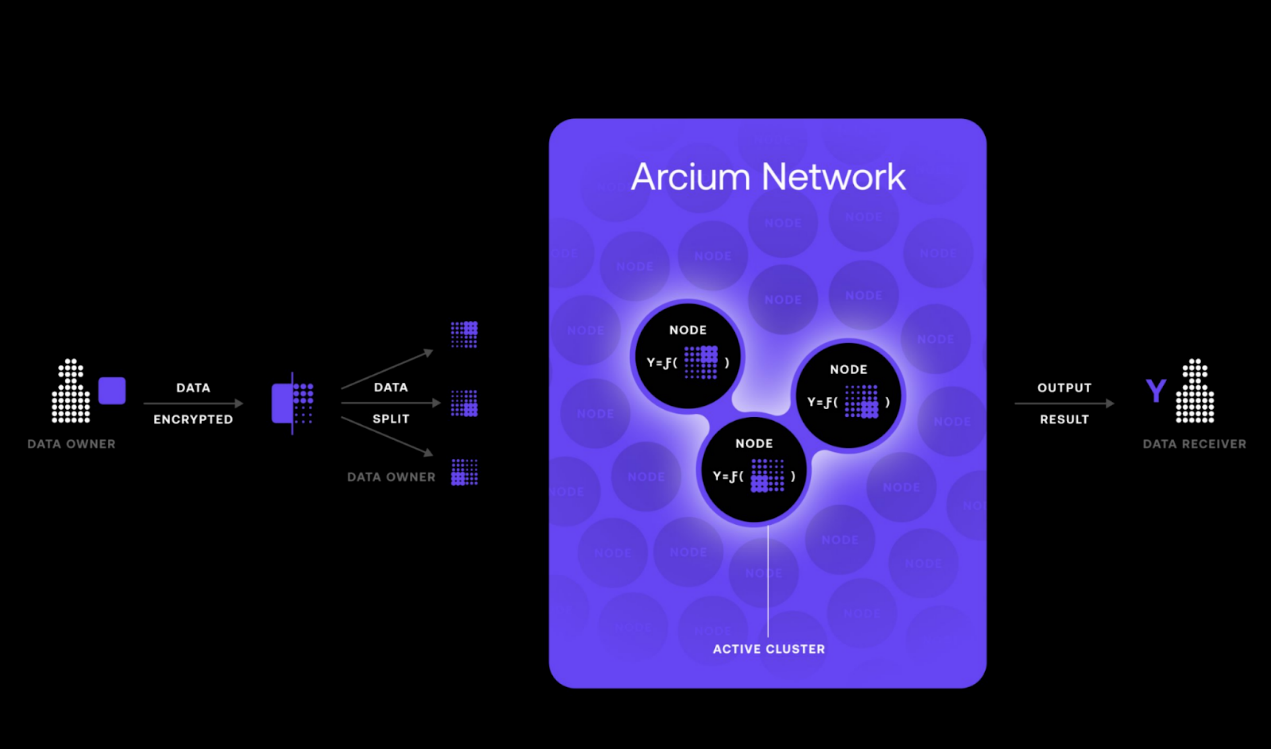

Hot off the presses from arXiv, ZKPROV is flipping the script on LLM trustworthiness. This framework ties your training datasets, model parameters, and even prompt responses into one neat package, topped with a ZK proof. Users query the model, get a response, and boom: the attached proof validates that the model was trained on authorized data. Experimental runs? The reported results show sublinear scaling, with proofs generated and verified in under 3.3 seconds end-to-end for models up to 8 billion parameters. That's not lab fantasy; it looks deployable now.

ZKPROV's Core Wins

- Sublinear Proof Scaling: Proof generation and verification grow more slowly than the data size, which suits massive AI training sets.

- Lightning-Fast: Under 3.3 s end-to-end for models up to 8B parameters.

- Formal Security Guarantees: Dataset confidentiality is backed by formal security proofs, not promises.

- Binds Data-Model-Response: A complete provenance chain from training data to LLM outputs, verifiable via ZK proofs.

I love how it sidesteps older methods that leaked parameters or required full data disclosure. ZKPROV's formal guarantees mean you get zero-knowledge dataset attestation while keeping everything confidential. For developers chasing AI data licensing compliance, this is gold. Picture deploying models in finance or healthcare, where one data slip-up means lawsuits galore.

ZKPROV binds training datasets, model parameters, and responses, attaching zero-knowledge proofs to the LLM's outputs to validate these claims.

zkPoT and zkML: Proving Training Happened Right, Every Time

Zero-knowledge proofs of training (zkPoT) take it further. You commit to a dataset and model upfront, train per spec, then prove the training matched those commitments without showing your cards. Papers from ACM and IACR hammer this home: prove correct training on committed data while revealing nothing. Kudelski Security's ZKML verifies entire training pipelines cryptographically. The result is tamper-evident: commitments ensure nothing sneaky happened mid-process.
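The "commit upfront" step can be sketched with a Merkle tree: the root acts as a short public commitment to the dataset, and an inclusion proof shows that one record belongs to it without listing the others. This is a simplified illustration with hypothetical function names; the zkPoT papers may use different commitment schemes, and a Merkle root by itself hides nothing about low-entropy records.

```python
import hashlib


def h(data: bytes) -> bytes:
    return hashlib.sha256(data).digest()


def merkle_levels(records: list[bytes]) -> list[list[bytes]]:
    """Return all tree levels, leaves first; the last level is [root]."""
    if not records:
        raise ValueError("cannot commit to an empty dataset")
    level = [h(b"\x00" + r) for r in records]    # 0x00 tags leaf hashes
    levels = []
    while True:
        if len(level) > 1 and len(level) % 2:    # pad odd levels (simplified)
            level = level + [level[-1]]
        levels.append(level)
        if len(level) == 1:
            return levels
        level = [h(b"\x01" + level[i] + level[i + 1])   # 0x01 tags inner nodes
                 for i in range(0, len(level), 2)]


def inclusion_proof(levels: list[list[bytes]], index: int) -> list[tuple[bytes, bool]]:
    """Sibling hashes from leaf to root, each flagged if it sits on the left."""
    proof = []
    for level in levels[:-1]:
        sibling = index ^ 1
        proof.append((level[sibling], sibling < index))
        index //= 2
    return proof


def verify_inclusion(root: bytes, record: bytes, proof: list[tuple[bytes, bool]]) -> bool:
    """Recompute the path from `record` up to the root and compare."""
    node = h(b"\x00" + record)
    for sibling, sibling_is_left in proof:
        pair = sibling + node if sibling_is_left else node + sibling
        node = h(b"\x01" + pair)
    return node == root
```

A trainer would publish `merkle_levels(records)[-1][0]` before training starts. Any later proof about the training run can then refer to that root, so swapping in different data afterwards would change the root and be detected.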

Why does this fire me up? Because in crypto and DeFi, my old stomping grounds, we live by 'don't trust, verify.' AI needs that ethos yesterday. ZKML bridges privacy and performance, letting you leverage detailed datasets for top-tier models while upholding standards. The Wilson Center nails it: train on rich data, expose nothing. The Cloud Security Alliance echoes the point: verify model integrity without leaking the architecture.

Real-World Wins: zkFL-Health and Beyond for Sensitive Sectors

Fast-forward to 2026: zkFL-Health combines federated learning, ZKPs, and trusted execution environments (TEEs) for medical AI. Clients train locally and commit to their updates; the aggregator runs inside a TEE and emits a ZK proof of correct aggregation, with everything recorded on-chain for audits. No need to trust a single party; the immutable trail protects confidentiality and integrity. It's a natural fit for multi-institutional collaboration where clinical regulations demand proof over promises.
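As a rough sketch of that flow, the snippet below has clients commit to integer-quantized updates and then spells out the statement a proof of correct aggregation would attest to: the aggregate is the sum of exactly the committed updates. No TEE, blockchain, or actual ZK proof is involved here, and all names are assumptions for illustration rather than zkFL-Health's API.

```python
import hashlib
import json
import secrets


def commit_update(update: list[int]) -> tuple[str, bytes]:
    """Salted SHA-256 commitment to a client's (integer-quantized) update."""
    salt = secrets.token_bytes(16)
    payload = json.dumps(update).encode()
    return hashlib.sha256(salt + payload).hexdigest(), salt


def aggregate(updates: list[list[int]]) -> list[int]:
    """Element-wise sum of client updates (divide by the client count for a mean)."""
    return [sum(column) for column in zip(*updates)]


def relation_holds(commitments: list[str],
                   openings: list[tuple[list[int], bytes]],
                   claimed_sum: list[int]) -> bool:
    """The statement a ZK proof of correct aggregation would attest to.

    It is checked in the clear here by someone who sees the openings; in a
    ZK setting the openings would stay private witness data.
    """
    if len(commitments) != len(openings):
        return False
    for digest, (update, salt) in zip(commitments, openings):
        payload = json.dumps(update).encode()
        if hashlib.sha256(salt + payload).hexdigest() != digest:
            return False
    return aggregate([update for update, _ in openings]) == claimed_sum
```

In the design described above, only the commitments, the aggregate, and the proof would be published, so an auditor could confirm the relation without ever seeing an individual hospital's update.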

This isn't pie-in-the-sky. Deep dives from Telefónica Tech and on Medium show how ZKPs prove a statement and nothing beyond it, with privacy baked into the proof. For privacy-preserving ML proofs, it's the future. Enterprises can attest dataset origins, comply with licensing, and build trust, all while keeping their competitive edge to themselves. I've traded volatile altcoins; this volatility in AI trust? ZK proofs stabilize it boldly.

On Medium, Ankita Singh spotlights tamper protection via commitments. World Network introduces ZKML protocols in which provers convince verifiers without leaking anything. Harvard echoes ZKPROV's reliability checks. The trend? ZKPs are working their way into AI workflows and solving provenance puzzles in regulated realms.

But let's get real: implementing this isn't all smooth sailing. Scaling ZK proofs to massive AI datasets is computationally intense. Early zkPoT efforts struggled, with proof times eating days for decent models. ZKPROV eases that with sublinear scaling, but we need hardware acceleration and optimized circuits to go mainstream. Still, the momentum's there; think GPU farms tuned for ZKML, pushing verification times toward milliseconds.

Overcoming Hurdles: From Theory to Production AI Pipelines

I've seen DeFi protocols crumble under unverified smart contracts; AI faces the same fate if training opacity persists. ZK proofs flip that script, but adoption needs developer-friendly tools. Enter platforms like ours at ZKModelProofs.com, generating ZK attestations for AI training data with minimal hassle. No PhD in cryptography required. zkFL-Health shows the path: combine ZKPs with TEEs for hybrid security and on-chain commitments for audits. Verifiers check proofs in seconds, and everything is recorded immutably.

Opinion time: fortune favors the bold, and bold means betting on ZK now. Hesitate, and competitors with verified models snatch market share. In finance, prove your fraud-detection AI was trained on licensed transaction data without exposing it; regulators eat that up. Healthcare? zkFL-Health's collaborative model means hospitals pool expertise without data dumps, backing HIPAA compliance with cryptographic evidence.

These proofs aren't just checks; they're competitive moats. Imagine tendering for enterprise contracts: 'Our model? Proven via ZK on premium datasets.' Boom, trust unlocked. Privacy-preserving proofs mean you train on gold-standard data, scraped ethically and licensed properly, without rivals reverse-engineering your edge.

Developer Toolkit: Building Verifiable AI Today

Start simple: commit to your datasets via hashes, train with spec-compliant code, then generate a zkPoT. Techniques from IACR papers aim to minimize prover costs. ZKML frameworks verify pipelines end-to-end. For LLMs, ZKPROV's response-binding proofs mean every output carries provenance: query, respond, verify. Under 3.3 seconds of overhead? That's workable for many production settings.
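The query, respond, verify loop can be pictured as a provenance record that travels with each response. The sketch below binds a dataset commitment, a model hash, and the prompt/response pair under one digest. It is an assumption-laden illustration, not ZKPROV's construction: the field names are hypothetical, and the plain hash in `binding` only marks the slot where a real system would attach a zero-knowledge proof (anyone can recompute a hash, so it proves nothing on its own).

```python
import hashlib
import json


def digest(obj) -> str:
    """Deterministic SHA-256 over a JSON-serializable object."""
    return hashlib.sha256(json.dumps(obj, sort_keys=True).encode()).hexdigest()


def make_record(dataset_commitment: str, model_hash: str,
                prompt: str, response: str) -> dict:
    """Bundle the public values a per-response proof would be bound to."""
    body = {
        "dataset_commitment": dataset_commitment,
        "model_hash": model_hash,
        "prompt_hash": digest(prompt),
        "response_hash": digest(response),
    }
    # Placeholder binding: a real deployment would put a ZK proof here.
    return {**body, "binding": digest(body)}


def check_record(record: dict, prompt: str, response: str,
                 authorized_commitments: set[str]) -> bool:
    """Verifier-side checks: intact record, authorized dataset, matching I/O."""
    body = {k: v for k, v in record.items() if k != "binding"}
    return (
        record.get("binding") == digest(body)
        and body.get("dataset_commitment") in authorized_commitments
        and body.get("prompt_hash") == digest(prompt)
        and body.get("response_hash") == digest(response)
    )
```

The useful part is the data flow: the verifier holds a list of authorized dataset commitments and checks each response against it without ever touching the dataset or the model weights.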

Challenges remain, sure. Proof sizes bloat storage, but recursive proofs compress them. Interoperability across ZK systems? Emerging standards should help there. My take: crypto taught me volatility rewards verifiers. AI's trust crisis is your volatility play: build with ZK, win big.

Sectors beyond health and finance beckon. Autonomous vehicles verifying sim data origins. Creative AI proving stock footage licensing. Everywhere data sensitivity clashes with model hunger, ZK steps in. zkFL-Health's aggregator model scales to any collab, TEEs shielding intermediates, ZKPs proving correctness. On-chain trails? Audit dreams for insurers and watchdogs.

The explosion continues. From arXiv breakthroughs to enterprise pilots, model provenance verification via ZK is becoming non-negotiable. Developers, grab these tools: attest origins, comply seamlessly, dominate ethically. At ZKModelProofs.com, we're pioneering this daily, empowering you to prove without exposing. In AI's wild frontier, verifiable boldness pays off handsomely.
