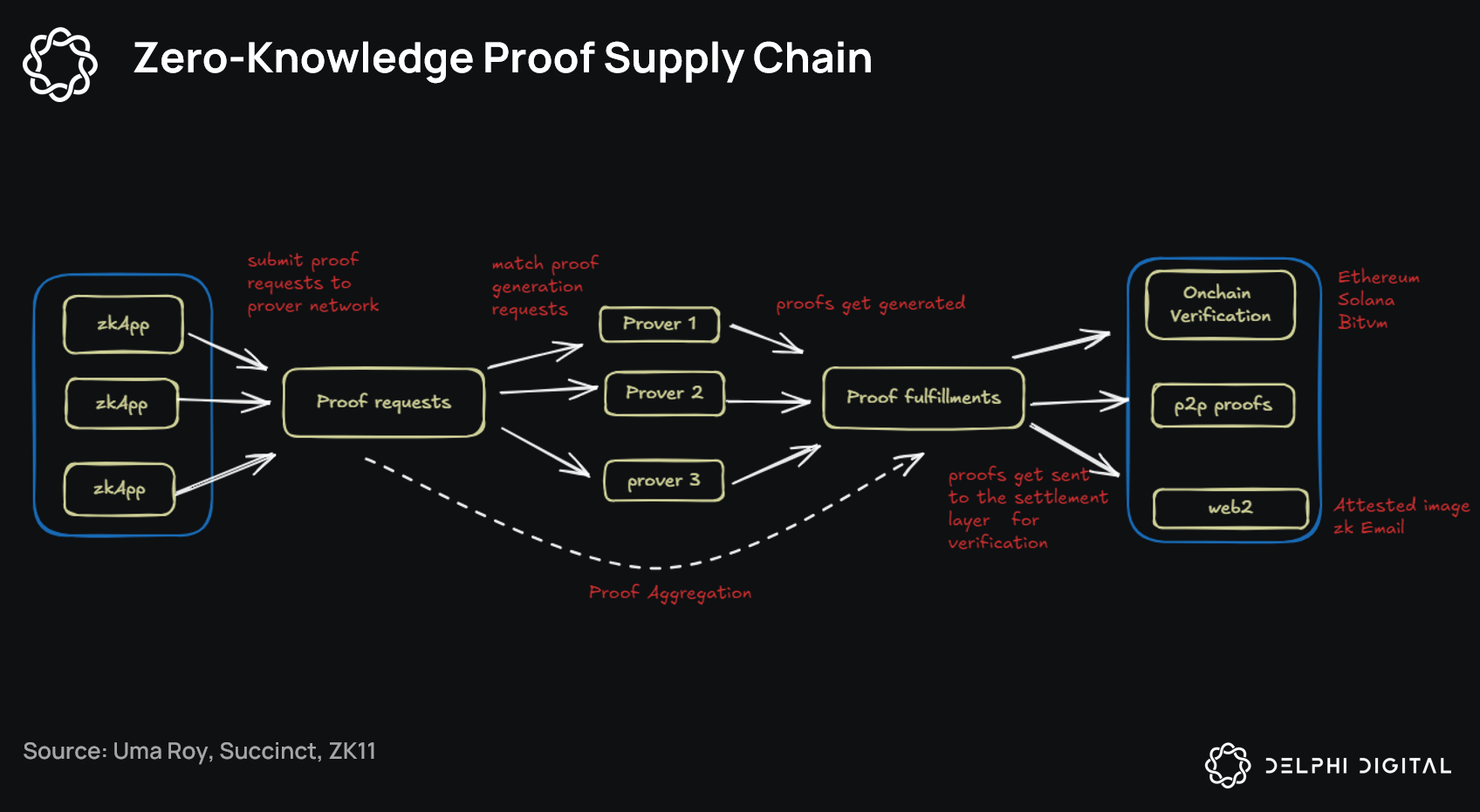

In the shadowy intersection of artificial intelligence and cryptography, a quiet revolution brews. Developers and enterprises grapple with the black-box nature of AI models, where training data origins remain opaque, breeding risks from licensing violations to poisoned datasets. Enter zkML blueprints: meticulously crafted zero-knowledge proofs tailored for machine learning, promising verifiable AI model provenance without compromising privacy. These aren't just theoretical constructs; they're practical circuits enabling trust in AI training pipelines, as seen in repositories like inference-labs-inc/zkml-blueprints on GitHub.

Traditional ML workflows treat data provenance as an afterthought, relying on brittle audits or self-reported attestations. zkML flips this script. By encoding training processes into zero-knowledge machine learning circuits, provers can demonstrate that a model was trained on compliant datasets - think licensed images, proprietary texts, or audited medical records - without revealing any sensitive details. This balances innovation's speed with accountability's demands, a strategic necessity in regulated sectors like finance and healthcare.

Decoding the Core of zkML Blueprints

At their heart, zkML blueprints distill complex ML operations into arithmetic circuits compatible with SNARKs or STARKs. Take zkml-blueprints from Inference Labs: a treasure trove of mathematical formulations for layers like convolutions and transformers, optimized for proof efficiency. These designs sidestep the computational abyss of proving full training runs by focusing on commitments - cryptographic hashes of datasets that verifiers can challenge without unpacking the data itself.
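To make the commitment idea concrete, here is a minimal sketch of a Merkle-tree commitment over dataset records. It is an illustration under stated assumptions, not code from zkml-blueprints: the function names, the SHA-256 hash, the `0x00`/`0x01` domain-separation prefixes, and the duplicate-last-node padding rule are all choices made for this example. A real zkML circuit would typically use a circuit-friendly hash and check the inclusion path inside the proof, so the record itself is never shown to the verifier.

```python
import hashlib


def h(data: bytes) -> bytes:
    return hashlib.sha256(data).digest()


def merkle_levels(leaves):
    """Return every tree level, from hashed leaves up to the single root."""
    level = [h(b"\x00" + leaf) for leaf in leaves]  # 0x00 marks a leaf
    levels = [level]
    while len(level) > 1:
        if len(level) % 2:  # pad odd levels by repeating the last node
            level = level + [level[-1]]
        level = [h(b"\x01" + level[i] + level[i + 1])  # 0x01 marks an inner node
                 for i in range(0, len(level), 2)]
        levels.append(level)
    return levels


def inclusion_proof(levels, index):
    """Collect (sibling_hash, sibling_is_left) pairs from leaf to root."""
    proof = []
    for level in levels[:-1]:
        if len(level) % 2:
            level = level + [level[-1]]
        sibling = index ^ 1
        proof.append((level[sibling], sibling < index))
        index //= 2
    return proof


def verify_inclusion(root, leaf, proof):
    """Recompute the root from one record and its sibling path."""
    node = h(b"\x00" + leaf)
    for sibling, sibling_is_left in proof:
        if sibling_is_left:
            node = h(b"\x01" + sibling + node)
        else:
            node = h(b"\x01" + node + sibling)
    return node == root


# Hypothetical dataset: three licensed records identified by placeholder bytes.
records = [b"licensed-image-001", b"licensed-image-002", b"licensed-image-003"]
levels = merkle_levels(records)
root = levels[-1][0]  # the 32-byte commitment a prover would publish
```

The verifier only ever holds `root`. A challenge on record `i` is answered with a path of logarithmic length, which is what keeps the circuit small compared with hashing the whole dataset inside a proof.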

Opinion ahead: while inference-focused tools like ZKML compilers grab headlines for 5x faster verification, training provenance demands bolder strokes. Proving an 8B-parameter LLM trained on authorized data? That's where the magic - and the market edge - lies. Curated GitHub resources such as worldcoin's awesome-zkml list underscore a maturing ecosystem ripe for adoption.

ZKPROV and the Dawn of Scalable Training Attestations

June 2025 marked a pivot with ZKPROV, which binds datasets, parameters, and outputs in proofs generated in under 3.3 seconds for models of up to 8B parameters. This framework doesn't just verify; it supports dataset-licensing ZK proofs, letting enterprises attest compliance on-chain or via APIs. Imagine deploying a model with a tamper-proof badge: "Trained solely on licensed Creative Commons data." No more lawsuits over scraped web content.

Balanced view: Scalability remains the bottleneck. ZKPROV shines for mid-sized LLMs, but hyperscalers eye recursion for trillion-parameter proofs. Pair it with zkDL's deep learning optimizations, and you get circuits verifying inference correctness sans model exposure - a dual punch for end-to-end verifiability.

From arXiv surveys to Kudelski Security's breakdowns, the consensus builds: ZKML isn't hype. It's infrastructure. Open-sourcing efforts like Daniel Kang's zkml framework democratize this, allowing indie devs to bake provenance into custom models.

Verifiable Fine-Tuning: Protocols That Stick

October 2025's Verifiable Fine-Tuning Protocol elevated the game. It produces succinct proofs confirming a model descended from a public base via a committed dataset and policy. This targets fine-tuning pitfalls - where most real-world adaptation happens - ensuring no shadow data creeps in.

Strategically, this empowers marketplaces. Providers upload models with embedded proofs; buyers verify provenance instantly. No more "trust me, bro" releases. Integrate with Jolt Atlas's lookup-centric inference (February 2026), and you've got a stack for privacy-first AI: prove training, fine-tuning, and deployment holistically.

These blueprints aren't static. HackMD notes and ScienceDirect overviews highlight zk-SNARK verifiers as the linchpin for checking LLM computations. As circuits evolve, expect approaches that blend ZK with TEEs into hybrid trust models.

Provers today leverage these verifiers to close the loop on AI supply chains, turning opaque pipelines into auditable ledgers. Yet, the real test lies in deployment: how do teams integrate zkML blueprints without refactoring entire codebases? Enter modular designs from repositories like inference-labs-inc/zkml-blueprints, which offer plug-and-play circuits for common ops like matrix multiplications and attention mechanisms.

Crafting Circuits: From Blueprint to Proof

Building a zkML blueprint starts with decomposing ML ops into polynomial constraints. Convolution layers, for instance, become lookup tables over precomputed kernels, slashing proof sizes. Even with modular circuits, this isn't magic; it demands circuit savvy. But tools like the ZKML compiler bridge the gap, converting TensorFlow graphs to SNARK-ready circuits with 22x smaller proofs than vanilla approaches.
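As a rough illustration of what "polynomial constraints" and "lookup tables" mean here, the sketch below checks a quantized dot product with degree-2 constraints over a prime field, then checks a ReLU activation by table membership. It swaps in a dot product and ReLU for the convolution mentioned above to stay short. The field modulus, the 8-bit input range, and all function names are assumptions for this example and are not taken from any of the frameworks named in this article. No proof system is involved: the code only evaluates the constraints a circuit would enforce.

```python
P = 2**61 - 1  # toy prime modulus (assumption; real systems use their own fields)


def to_field(v: int) -> int:
    """Map a signed integer to its representative in [0, P)."""
    return v % P


def dot_product_witness(x, w):
    """Prover side: per-term products plus a running accumulator."""
    products = [to_field(a * b) for a, b in zip(x, w)]
    acc = [0]
    for p in products:
        acc.append((acc[-1] + p) % P)
    return products, acc


def check_dot_product(x, w, products, acc, claimed):
    """Each check is a constraint of degree at most 2 in the witness values."""
    for i, (a, b) in enumerate(zip(x, w)):
        if (to_field(a) * to_field(b) - products[i]) % P != 0:  # multiplication gate
            return False
        if (acc[i] + products[i] - acc[i + 1]) % P != 0:  # addition gate
            return False
    return acc[0] == 0 and acc[-1] == to_field(claimed)


# ReLU is awkward as a polynomial, so it is checked by table membership:
# the pair (input, output) must appear in a precomputed table.
RELU_TABLE = {to_field(v): to_field(max(v, 0)) for v in range(-128, 128)}


def check_relu_lookup(inp: int, out: int) -> bool:
    return RELU_TABLE.get(inp) == out


x, w = [3, -2, 5], [4, 7, -1]  # quantized activations and weights
products, acc = dot_product_witness(x, w)
ok = check_dot_product(x, w, products, acc, claimed=-7)
```

The lookup replaces a bit decomposition and comparison with a single membership test, which is the trade that lookup-centric designs lean on: more precomputed table entries in exchange for fewer constraints per nonlinear operation.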

Strategically, prioritize lookup arguments over full gate evaluations - Jolt Atlas nails this for ONNX tensors, enabling inference in tight memory footprints. Pair with ZKPROV's dataset commitments, and developers can prove the integrity of AI training data end-to-end. Opinion: Skip this now, and you're building on sand. Enterprises ignoring verifiable AI model provenance face regulatory whiplash as the EU AI Act mandates data lineage by 2027.

Challenges persist. Proving full training loops for trillion-parameter behemoths? Current STARKs strain under exponentiation-heavy ops. Balanced take: Recursion and aggregation protocols, hinted in zkDL papers, will compress these to minutes. Meanwhile, hybrid ZK-TEE stacks offer interim scalability, verifying enclave executions cryptographically.

Enterprise Playbook: Monetizing Provenance

Forward-thinking firms treat zkML as a moat. Financial models trained on licensed market data? Prove it with dataset-licensing ZK proofs, unlocking premium licensing deals. Healthcare LLMs on HIPAA-compliant records? Attest without audits. Marketplaces like Hugging Face could embed verifiers natively, filtering models by proof badges.

Consider a workflow: Commit dataset hashes on-chain, train with zk-instrumented frameworks, generate proofs via ZKPROV. Verifiers query badges instantly. This isn't theoretical; open-source zkml frameworks already power trustless inference, as Daniel Kang's release proves. From GitHub's awesome-zkml to on-chain ML overviews, the toolkit matures.
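The bookkeeping side of that workflow can be sketched in a few lines. This is a hedged illustration only: it shows the public statement a badge might bind together (dataset commitment, model commitment, policy label) and how a buyer could check that delivered weights match it. It is not a zero-knowledge proof and does not use ZKPROV's actual interface; `ProvenanceStatement`, `build_statement`, `badge_matches`, and the `cc-by-4.0` policy label are hypothetical names invented for this example.

```python
import hashlib
import json
from dataclasses import asdict, dataclass


def sha256_hex(data: bytes) -> str:
    return hashlib.sha256(data).hexdigest()


@dataclass(frozen=True)
class ProvenanceStatement:
    """Public inputs a verifier would see (hypothetical layout)."""
    dataset_commitment: str  # e.g. a Merkle root over licensed records
    model_commitment: str    # hash of the serialized weights
    policy_id: str           # label for the licensing policy being attested

    def digest(self) -> str:
        # Canonical JSON so the same statement always hashes identically.
        canonical = json.dumps(asdict(self), sort_keys=True, separators=(",", ":"))
        return sha256_hex(canonical.encode("utf-8"))


def build_statement(dataset_commitment: str, weights: bytes, policy_id: str) -> ProvenanceStatement:
    return ProvenanceStatement(dataset_commitment, sha256_hex(weights), policy_id)


def badge_matches(statement: ProvenanceStatement, published_digest: str, weights: bytes) -> bool:
    """Check that the badge digest and the delivered weights agree with the statement."""
    return (
        statement.digest() == published_digest
        and statement.model_commitment == sha256_hex(weights)
    )


# Placeholder bytes stand in for a real dataset root and real model weights.
weights = b"serialized-model-weights"
statement = build_statement(sha256_hex(b"dataset-root-placeholder"), weights, "cc-by-4.0")
published = statement.digest()  # the value that would be anchored on-chain
```

In a full system, the digest would be a public input to the proof, and the proof itself would be what convinces the verifier that training actually used the committed dataset. The hash check above only catches swapped weights or an altered statement.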

Comparison of zkML Frameworks

| Framework | Primary Focus | Proof Performance | Key Features | Introduced |
|---|---|---|---|---|
| ZKPROV | Training Data Provenance | 3.3s for models up to 8B params | Binds datasets, model parameters, and responses; verifies authorized training without revealing data | June 2025 |
| Jolt Atlas | Model Inference | Low-memory proving | ONNX tensor operations; lookup-centric; suitable for memory-constrained and privacy-centric environments | February 2026 |
| zkDL | Deep Learning | Succinct proofs | DL-specific; verifies inference correctness without disclosing data or model | Pre-2025 |

Nuance here: Pure ZK sacrifices speed for ironclad privacy; TEEs trade verifiability for efficiency. Smart hybrids win - prove TEE attestations with SNARKs. As economic cycles tighten scrutiny on AI ROI, zero-knowledge machine learning circuits emerge as disciplined diversification: a hedge against data scandals that still lets teams accelerate deployment.

The zkML blueprint ecosystem, from 2017 arXiv surveys to 2026's Jolt innovations, charts a verifiable future. Developers wielding these tools don't just build models; they forge trust at scale. In a world of poisoned data and licensing landmines, that's not optional - it's the new baseline for sustainable AI dominance.
