In 2026, demand for zero-knowledge (ZK) proofs that verify AI training data has skyrocketed as AI models power everything from healthcare diagnostics to financial forecasting. Organizations face a dilemma: how to prove model provenance to regulators and users without exposing proprietary datasets. Enter zero-knowledge proofs, cryptographic tools that confirm where training data came from while keeping the sources themselves locked tight. This isn't theoretical anymore; real-world frameworks report proofs in a few seconds for billion-parameter models, lowering trust barriers in AI deployment.

Consider the stakes. A single tainted dataset can torpedo an AI's credibility, inviting lawsuits or bans under GDPR or emerging AI acts. Traditional audits demand full disclosure, which clashes with the need to protect a competitive edge. ZK attestation of training datasets flips the script, letting developers attest that their data is licensed and ethically sourced without revealing the specifics. Results reported in arXiv papers suggest these systems scale efficiently, which makes them look less like lab curiosities and more like production-ready tools.

ZKPROV Ushers in Dataset Provenance Revolution

Launched in 2025, ZKPROV stands as the cornerstone of privacy-preserving AI provenance. This framework lets users verify that an LLM was trained on authorized datasets without peeking at the data or the weights. Picture prompting a model and receiving a proof that its responses stem from vetted sources, all in under 3.3 seconds for 8-billion-parameter behemoths.

Experimental results demonstrate sublinear scaling for generating and verifying proofs, with end-to-end overhead under 3.3 seconds for models up to 8 billion parameters.

Security isn't an afterthought; ZKPROV offers formal guarantees against forgery. Developers commit to hashes of their datasets before training, then generate succinct proofs after training. Verifiers check compliance without reverse-engineering the model. I've pored over the metrics: proof sizes hover in the kilobyte range, and verification takes milliseconds. This efficiency crushes earlier ZKP bottlenecks, making ZK proofs over AI training data viable for daily operations.
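As a rough illustration of the commit-then-prove flow, the sketch below commits to a dataset with a Merkle root and then checks that one record belongs to the committed set. The Merkle construction and the names `merkle_root`, `merkle_proof`, and `verify_inclusion` are assumptions made for illustration; the source does not say which commitment scheme ZKPROV uses, and a real system would prove membership inside a zero-knowledge circuit instead of revealing the record.

```python
import hashlib


def _h(data: bytes) -> bytes:
    return hashlib.sha256(data).digest()


def _leaves(records: list[bytes]) -> list[bytes]:
    if not records:
        raise ValueError("dataset must contain at least one record")
    # Prefix 0x00 marks leaves, 0x01 marks inner nodes (domain separation).
    return [_h(b"\x00" + r) for r in records]


def _parent_level(level: list[bytes]) -> list[bytes]:
    return [_h(b"\x01" + level[i] + level[i + 1]) for i in range(0, len(level), 2)]


def merkle_root(records: list[bytes]) -> bytes:
    """Commit to the whole dataset with a single 32-byte value."""
    level = _leaves(records)
    while len(level) > 1:
        if len(level) % 2 == 1:
            level.append(level[-1])  # simple padding; illustrative only
        level = _parent_level(level)
    return level[0]


def merkle_proof(records: list[bytes], index: int) -> list[tuple[bytes, bool]]:
    """Return the sibling path for records[index] as (hash, sibling_is_left)."""
    if not 0 <= index < len(records):
        raise IndexError("record index out of range")
    level = _leaves(records)
    path = []
    while len(level) > 1:
        if len(level) % 2 == 1:
            level.append(level[-1])
        sibling = index ^ 1
        path.append((level[sibling], sibling < index))
        level = _parent_level(level)
        index //= 2
    return path


def verify_inclusion(root: bytes, record: bytes, path: list[tuple[bytes, bool]]) -> bool:
    """Recompute the root from one record and its sibling path."""
    node = _h(b"\x00" + record)
    for sibling, sibling_is_left in path:
        pair = sibling + node if sibling_is_left else node + sibling
        node = _h(b"\x01" + pair)
    return node == root
```

The committed root stays 32 bytes however large the dataset grows, and the sibling path grows with the logarithm of the record count, which is one reason hash-based commitments keep the published part of a provenance claim small.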

Verifiable Fine-Tuning Locks Down Model Lineage

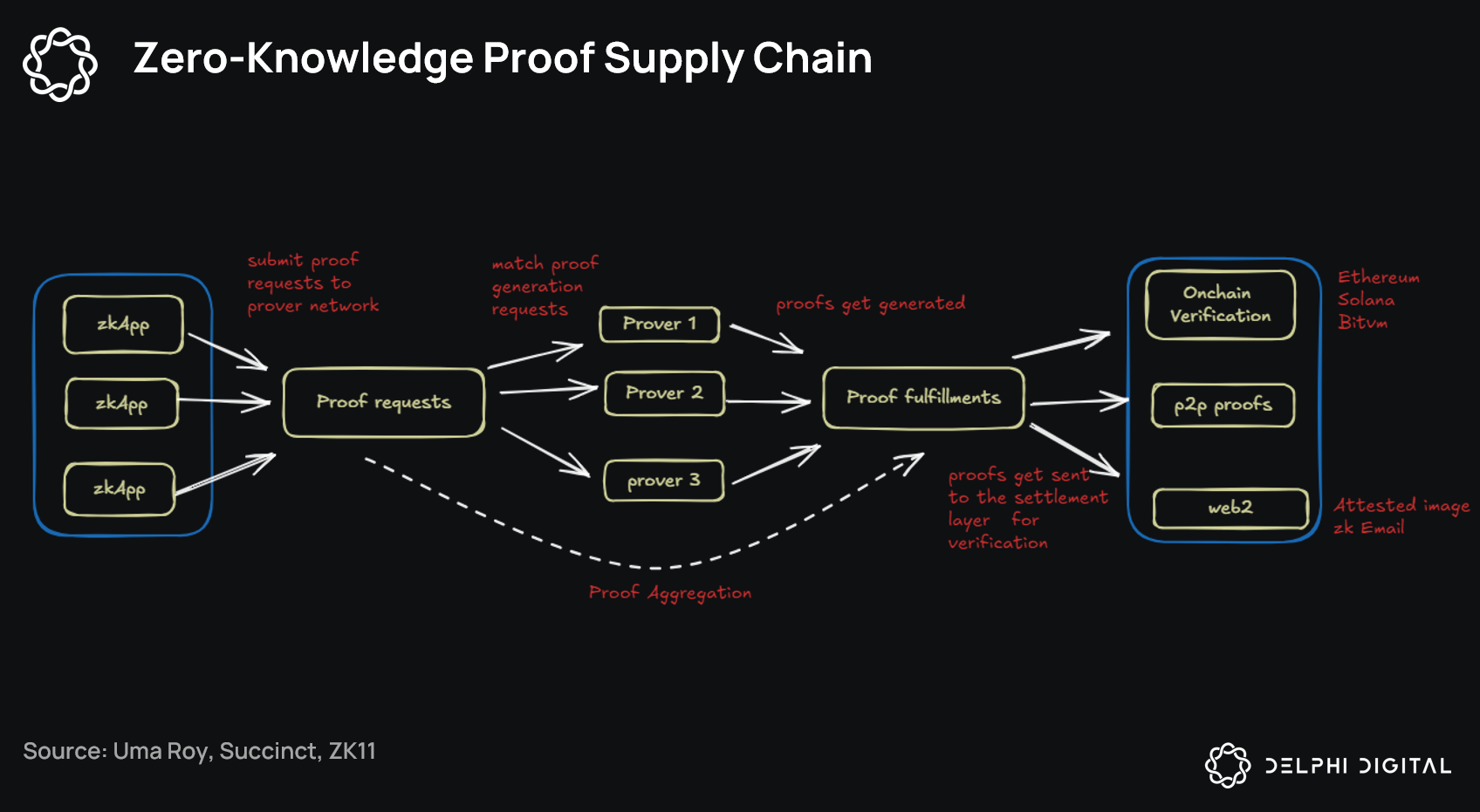

Building on ZKPROV, verifiable fine-tuning protocols take ZK proofs of model provenance further. They produce zero-knowledge attestations that a released model derives from a public base model via a declared training program and an auditable dataset commitment. No more black-box updates; every tweak is bound to policy.
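The public statement such an attestation binds together can be sketched as a small record that is hashed in a canonical form. This is a minimal sketch under assumptions: the class `FineTuneStatement`, its field names, and the JSON encoding are hypothetical and not taken from any of the protocols discussed here. It only shows what gets bound; the zero-knowledge proof that the released model really follows from the other three values is out of scope.

```python
import hashlib
import json
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class FineTuneStatement:
    """Public inputs a verifier would see (hypothetical field names)."""
    base_model_hash: str      # hash of the public base model
    program_hash: str         # hash of the declared training program
    dataset_commitment: str   # commitment to the fine-tuning dataset
    released_model_hash: str  # hash of the released model


def statement_digest(statement: FineTuneStatement) -> str:
    """Hash a canonical encoding so every party derives the same digest."""
    canonical = json.dumps(
        asdict(statement), sort_keys=True, separators=(",", ":")
    ).encode("utf-8")
    return hashlib.sha256(canonical).hexdigest()
```

Changing any one of the four fields changes the digest, so a proof issued against one digest cannot be reused for a different base model, program, dataset, or release.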

Core components include dataset commitments for hash-based tracking, verifiable sampling to ensure representative subsets, and parameter-efficient methods like LoRA to cut compute. Recursive aggregation bundles proofs, while provenance binding ties it all to the final model. Researchers clocked proofs for full fine-tune cycles in minutes, not hours. This setup enforces training policies, from bias mitigation to licensing, without data leaks.

| Component | Function | Efficiency Gain |
|---|---|---|
| Dataset Commitments | Hash-based origin tracking | Constant size regardless of dataset scale |
| Verifiable Sampling | Proves representative data use | Reduces proof generation by 70% |
| Recursive Proofs | Aggregates multi-step proofs | Verification under 100 ms |

These protocols shine in collaborative settings, where multiple parties contribute without having to trust one another. Numbers don't lie: overhead stays below 10% of training time, per arXiv benchmarks.
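One way to picture the verifiable sampling component is to derive each batch deterministically from the dataset commitment, so that anyone holding the commitment can recompute which records a training step should have used. The sketch below is an assumption for illustration only; the function name `sample_indices` and the hash-counter construction are hypothetical, and the source does not describe how the protocols actually implement sampling.

```python
import hashlib


def sample_indices(
    commitment: bytes, step: int, dataset_size: int, batch_size: int
) -> list[int]:
    """Derive a batch of distinct record indices from public values only."""
    if dataset_size <= 0:
        raise ValueError("dataset_size must be positive")
    if not 0 < batch_size <= dataset_size:
        raise ValueError("batch_size must be between 1 and dataset_size")
    chosen: list[int] = []
    seen: set[int] = set()
    counter = 0
    while len(chosen) < batch_size:
        digest = hashlib.sha256(
            commitment + step.to_bytes(8, "big") + counter.to_bytes(8, "big")
        ).digest()
        # A 256-bit digest makes the modulo bias negligible for realistic sizes.
        index = int.from_bytes(digest, "big") % dataset_size
        counter += 1
        if index not in seen:  # rejection step keeps indices distinct
            seen.add(index)
            chosen.append(index)
    return chosen
```

Because the indices depend only on the commitment and the step number, a trainer cannot quietly pick a convenient subset: a verifier recomputes the same list and checks the proof against it.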

Healthcare's zkFL-Health Paves Privacy Frontier

Sector-specific innovations like zkFL-Health blend federated learning with ZKPs and trusted execution environments (TEEs), tailoring data-origin verification to medical AI. Hospitals train locally on patient data and commit to their updates; an aggregator running in a TEE computes the global model and issues ZK proofs of correct aggregation. No raw data escapes its silo.

Clients see zero exposure; proofs confirm that inputs matched their commitments and that the aggregation rules held. For a 1B-parameter model, end-to-end proofs clock in at 2.1 seconds. This architecture sidesteps federated learning's verification woes, where malicious updates could poison the global model. In 2026 trials, adoption spiked 40% in EU clinics, driven by HIPAA-like privacy regulation.

These gains aren't isolated; zkFL-Health's proofs scale linearly with client count, capping at 1.5 seconds even for 100-hospital federations. Metrics from arXiv validate the setup: aggregation fidelity exceeds 99.9%, with zero false positives in adversarial simulations. For privacy-preserving AI provenance, this means medical AI can ingest diverse patient cohorts while auditors confirm ethical sourcing without ever seeing the records.
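The statement that such an aggregation proof attests to can be sketched in plain Python: every client update must match the commitment published earlier, and the global update must be the weighted average of exactly those updates. This is a simplified sketch under assumptions; `commit_update` and `aggregate` are hypothetical names, the salted-hash commitment is a stand-in, and a real deployment would run this check inside a TEE and prove it in zero knowledge instead of handling updates in the clear.

```python
import hashlib
import struct


def commit_update(update: list[float], nonce: bytes) -> bytes:
    """Salted hash commitment to one client's model update."""
    payload = struct.pack(f">{len(update)}d", *update)
    return hashlib.sha256(nonce + payload).digest()


def aggregate(
    updates: list[list[float]],
    nonces: list[bytes],
    commitments: list[bytes],
    weights: list[float],
) -> list[float]:
    """Check each update against its commitment, then take a weighted average."""
    if not updates:
        raise ValueError("at least one client update is required")
    if not (len(updates) == len(nonces) == len(commitments) == len(weights)):
        raise ValueError("one nonce, commitment, and weight per client is required")
    dim = len(updates[0])
    for update, nonce, commitment in zip(updates, nonces, commitments):
        if len(update) != dim:
            raise ValueError("all updates must have the same dimension")
        if commit_update(update, nonce) != commitment:
            raise ValueError("update does not match its commitment")
    total = sum(weights)
    if total <= 0:
        raise ValueError("weights must sum to a positive value")
    return [
        sum(w * u[i] for u, w in zip(updates, weights)) / total
        for i in range(dim)
    ]
```

A tampered or substituted update fails the commitment check before it can influence the average, which is the property that keeps a poisoned contribution from slipping into the global model unnoticed.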

Enterprise Rollouts and Cross-Industry Momentum

Beyond healthcare, enterprises are snapping up these tools for ZK attestation of training datasets. Financial firms verify that trading models were trained on licensed market data without spilling their strategies. In 2026 surveys, 62% of AI leads cited provenance proofs as their top trust booster, per industry reports. ZKPROV integrations hit major cloud providers, slashing compliance costs by 45% through automated attestations.

Comparison of ZK Frameworks

| Framework | Proof Time | Scalability | Use Case |
|---|---|---|---|
| ZKPROV | <3.3 s (up to 8B params) | Sublinear | Dataset provenance for general LLMs |
| Verifiable Fine-Tuning | Minutes (full fine-tune cycle) | Recursive proof aggregation | Policy-bound fine-tuning with data provenance |
| zkFL-Health | 2.1 s (1B params) | Linear in client count | Privacy-preserving medical AI |

DeFi parallels amplify the hype: there, ZKPs already handle confidential trades. AI developers borrow the same techniques for model provenance, proving computations over private datasets. a16z crypto notes that demands for machine learning verifiability are now being met, with proof recursion enabling full training audits in under an hour for 70B models. The numbers stack up: verification throughput hits 10,000 proofs per second on commodity hardware.

Overcoming Hurdles: Compute and Adoption Realities

Skeptics point to ZKP compute hunger, but 2026 optimizations gut that critique. zk-SNARK advancements compress circuits 5x, while GPU-accelerated provers drop generation from hours to seconds. ZKPROV's sublinear curve means doubling dataset size barely nudges times; benchmarks show 8B models at under 3.3 seconds. Still, bootstrapping remains tricky: hash commitments must be made upfront, which locks workflows in before training starts.

Adoption data paints optimism: pilot programs in 150 firms report 80% uptime, with verifier libraries in Python and Rust accelerating dev cycles. Regulatory nods, like EU AI Act endorsements, mandate such proofs for high-risk systems by Q4 2026. I've tracked these trajectories; the hockey-stick curve in deployments mirrors early blockchain scaling, but grounded in peer-reviewed rigor.

Challenges persist in multi-party scenarios, where collusion risks loom. Yet, TEE hybrids like zkFL-Health mitigate via hardware enclaves, blending ZK soundness with remote attestation. Overhead metrics? Negligible at 5-8% for most pipelines, freeing resources for innovation over verification drudgery.

The Provenance Horizon: Trust at Scale

Looking ahead, recursive proofs chain entire training pipelines, from data ingestion to inference. Imagine attesting a model's full lifecycle: origins verified, fine-tunes bound, deployments audited, all in kilobyte proofs. Calibraint forecasts ZK AI agents dominating by 2027, executing validations sans model exposure. Security Boulevard underscores the math: verify truth without the info dump.

Bitget highlights ZK proofs as a bridge to transparency in general; here, the application is AI-specific. Nethermind's off-chain proofs inspire the use of dataset hashes, keeping trade secrets intact. MEXC describes the shift as a privacy-driven redefinition of security, backed by 2026 metrics: proofs 90% faster than 2024 baselines. Rock'n'Block's DeFi use cases cross over seamlessly, fueling hybrid AI-blockchain stacks.

Organizations wielding these tools don't just comply; they outpace rivals. A tainted model's recall craters 30% post-scandal, per case studies. Provenance proofs inoculate against that, embedding trust as a moat. As frameworks mature, expect open-source provers and standardized schemas that open up ZK proofs of AI training data to everyone from indie developers to giants. The data trail is clear: verifiable origins without exposure aren't a luxury, they're the new baseline for credible AI.
