Federated learning has reshaped how we train AI models across distributed devices, keeping raw data local while sharing only model updates. But this setup invites skepticism: how do participants confirm that the central aggregator correctly combines gradients or model weights without tampering? Zero-knowledge proofs offer a rigorous solution, allowing zk proofs ai training verification and secure data aggregation. These proofs cryptographically attest to the faithful execution of training algorithms, all while preserving privacy. Recent innovations underscore their potential, though overheads demand careful evaluation.

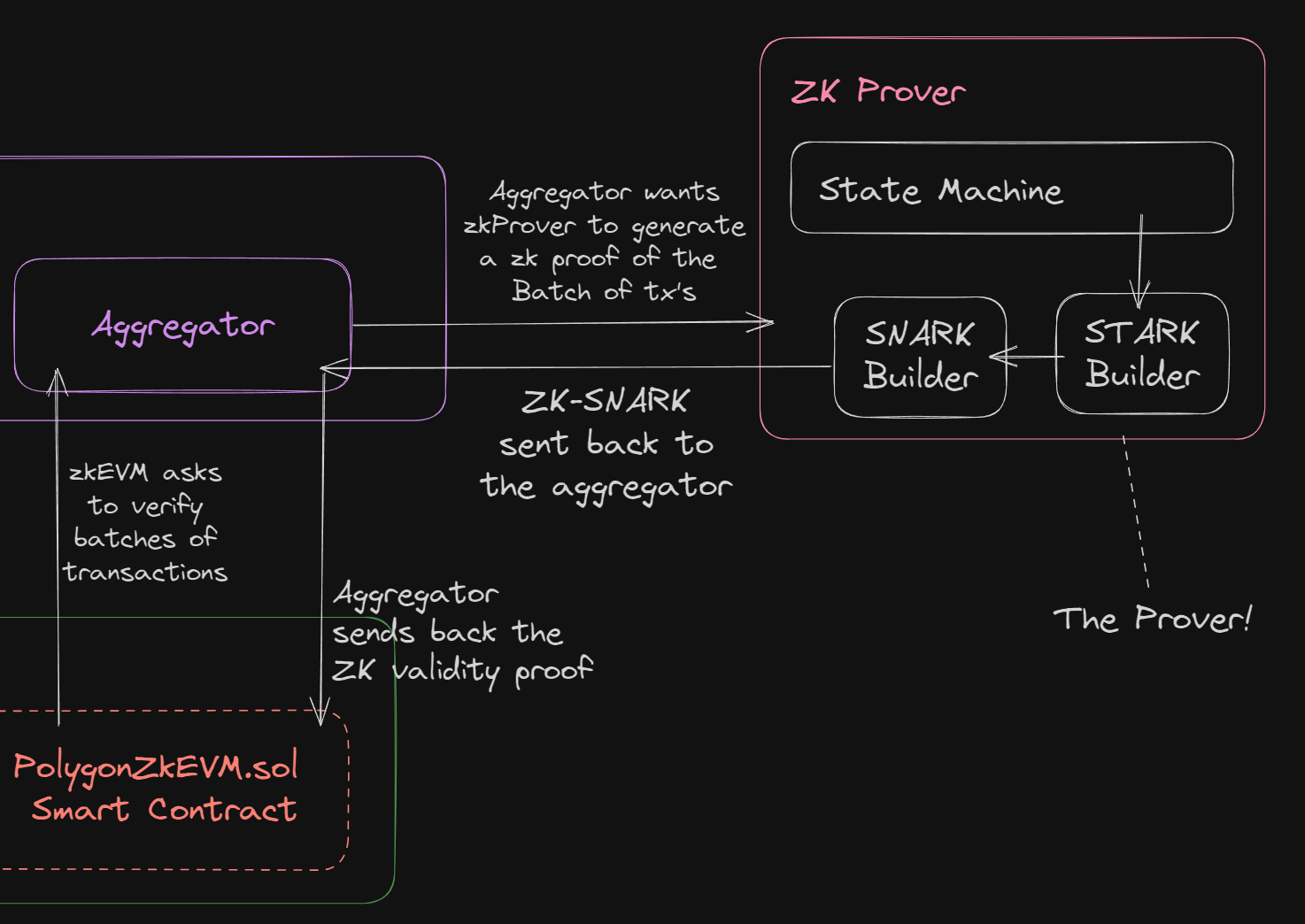

Consider the aggregator's pivotal role. Clients compute local updates on private data, then send them for averaging into a global model. A malicious or faulty aggregator could skew results, poison the model, or leak inferences. Traditional checks fall short; they either demand data exposure or rely on blind trust. Zero knowledge proofs federated learning flips this script by transforming aggregation into provable computations.

Unmasking Vulnerabilities in Model Aggregation

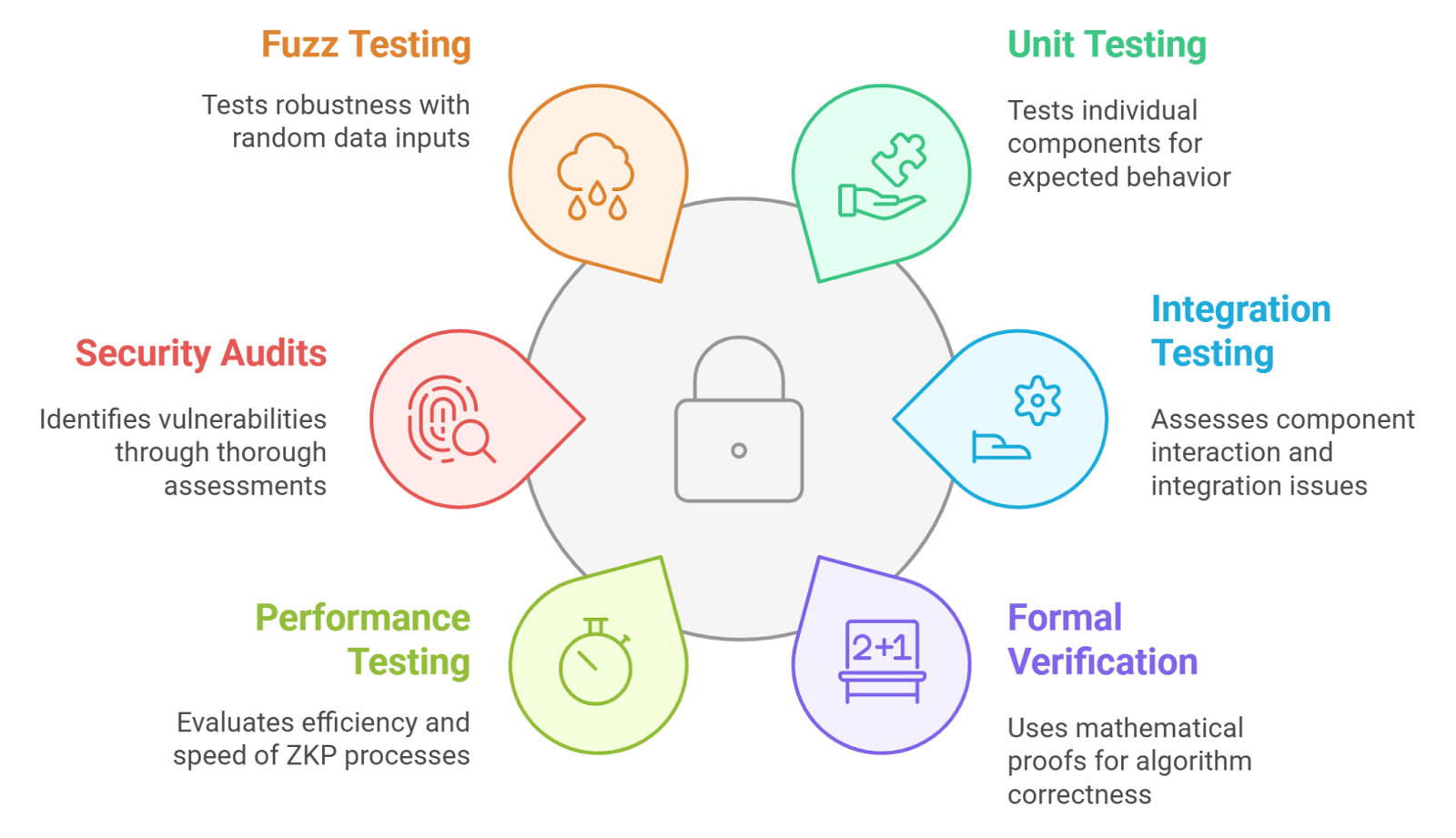

Standard federated learning assumes honest servers, a fragile premise in adversarial settings. Byzantine faults, where nodes deviate arbitrarily, amplify risks. Research highlights this: without verification, aggregators might inject biased weights or exclude valid contributions. Enter frameworks like zkFL, which use zk-SNARKs to prove correct gradient aggregation. Clients verify the server's output matches their inputs' weighted sum, sans revealing local models.

This matters for verifiable ai training data aggregation. Proofs ensure compliance with training protocols, mitigating risks from untrusted parties. Yet, as an expert attuned to computational trade-offs, I caution that generating zk-SNARKs scales poorly with model size. Groth16 circuits, efficient for verification, still burden proving times during training rounds.

zkFL: A Blueprint for Provable Aggregation

zkFL pioneers by circuitizing the aggregation algorithm. Each client proves its gradient computation locally, then the server aggregates and generates a proof of honest summation. Blockchain logs these for tamper-proof auditing. Studies confirm it preserves training accuracy while slashing trust needs. Practical viability shines: verification remains constant-time, ideal for resource-constrained edges.

Blockchain integration adds resilience, but introduces latency. In my view, this hybrid merits adoption where regulatory scrutiny looms, like healthcare AI. It enforces privacy preserving ml compliance, proving datasets stayed siloed.

Core zkFL Benefits

- Verifies malicious aggregator exclusion: zkFL employs ZKPs to prove correct aggregation by the central server, excluding malicious behavior without exposing client models.arXiv

- Maintains model performance parity: Achieves training accuracy comparable to standard FL, confirming practical viability per IEEE and arXiv studies.

- Enables blockchain-anchored proofs: Integrates blockchain for efficient proof management and verifiable aggregation in distributed settings.

- Supports scalable zk-SNARK verification: Leverages efficient Groth16 zk-SNARKs for rapid proof verification, suitable for large-scale FL.

ByzSFL and Beyond: Tackling Byzantine Resilience

ByzSFL elevates this by localizing weight computations, proven via ZKPs. Roughly 100 times faster than rivals, it computes aggregation weights client-side, thwarting server dominance. Distributed nodes issue proofs alongside models, letting aggregators validate integrity sans recomputation.

Parallel advances include Verifiable Fine-Tuning for LLMs, committing to datasets and sampling verifiably. ZK-HybridFL leverages DAG ledgers and sidechains for decentralized validation, boosting convergence speed. fedzk, a Python toolkit, streamlines production deployment. These tools collectively forge ai model provenance zk, tracing training lineage cryptographically.

Proof generation per round, while intensive, yields succinct verifications. Opinion: prioritize for high-stakes domains; benchmark overheads first in pilots.

Implementing these systems demands more than theory; it requires robust tooling. fedzk stands out as a production-ready Python framework, handling the end-to-end workflow from training to proof verification. Developers can integrate it seamlessly into existing FL pipelines, generating cryptographic guarantees for model updates without overhauling infrastructure. This lowers the barrier for experimentation, yet I advise thorough stress-testing under varied network conditions.

Practical FedZK Python Example: Client-Side Training with ZK Proofs

The following is a cautious, simplified Python implementation using Flower (flwr) for federated learning and hypothetical ZK components. Real ZK-SNARK integration for gradient verification requires custom circuits (e.g., via Circom) and efficient provers like Groth16 or Plonk, as full neural net proofs are computationally intensive. This example focuses on structure and key steps.

import torch

import torch.nn as nn

import flwr as fl

from typing import Dict, List, Tuple, Any

# Hypothetical FedZK library for ZK-SNARK integration

# In practice, use libraries like ezkl or custom Circom bindings

class ZKProver:

def __init__(self, circuit_path: str):

self.circuit_path = circuit_path # e.g., 'gradient_update.circom'

def prove(self, witness: Dict[str, torch.Tensor], public_inputs: List[torch.Tensor]) -> bytes:

# Simulate proof generation (replace with actual proving engine)

# Real impl: compile witness to R1CS, generate SNARK proof

return b'\x00' * 48 # Placeholder proof

class CircuitOptimizer:

def __init__(self, prune_ops: bool = True, recursive_proofs: bool = True):

self.prune_ops = prune_ops

self.recursive = recursive_proofs

def optimize(self, gradients: List[torch.Tensor]) -> Dict[str, Any]:

# Prune redundant ops, compose recursive proofs for large circuits

# Caution: Circuit size explodes with model depth; use aggregation circuits

optimized_witness = {'grads': gradients}

return optimized_witness

class FedZKClient(fl.client.NumPyClient):

def __init__(self, model: nn.Module, train_data: Any):

self.model = model

self.train_data = train_data

self.zk_prover = ZKProver('gradient_update.circom')

self.circuit_opt = CircuitOptimizer(prune_ops=True, recursive_proofs=True)

def get_parameters(self, config: Dict[str, str]) -> List[np.ndarray]:

return [param.cpu().numpy() for param in self.model.parameters()]

def set_parameters(self, parameters: List[np.ndarray]) -> None:

params = [torch.tensor(p) for p in parameters]

for param, new_param in zip(self.model.parameters(), params):

param.data = new_param.to(param.device)

def fit(self, parameters: List[np.ndarray], config: Dict[str, str]) -> Tuple[List[np.ndarray], int, Dict[str, Any]]:

self.set_parameters(parameters)

optimizer = torch.optim.SGD(self.model.parameters(), lr=float(config.get('lr', 0.01)))

num_examples = 0

for epoch in range(int(config.get('epochs', 5))):

for batch_x, batch_y in self.train_data: # Assume dataloader

optimizer.zero_grad()

pred = self.model(batch_x)

loss = nn.MSELoss()(pred, batch_y)

loss.backward()

optimizer.step()

num_examples += len(batch_x)

# Extract gradients for proof (in practice, prove over full computation trace)

self.model.zero_grad()

dummy_loss = self.model(self.train_data.dataset.tensors[0][:1])[0].sum()

dummy_loss.backward()

gradients = [p.grad.clone().detach() for p in self.model.parameters()]

# Optimize and generate ZK proof

witness = self.circuit_opt.optimize(gradients)

proof = self.zk_prover.prove(witness, public_inputs=gradients)

# Return updated params with proof metadata

return self.get_parameters({}), num_examples, {'zk_proof': proof.hex()}

# Example usage (server-side aggregation verifies proofs before summing updates)

# model = nn.Linear(784, 10)

# client = FedZKClient(model, trainloader)

# fl.client.start_numpy_client(server_address="[::]:8080", client=client)

**Expert notes:** Operation pruning reduces circuit size by eliding zero gradients or linear ops; recursive proofs (e.g., via Nova) compose sub-proofs for scalability. Always implement server-side proof verification and secure multi-party computation for aggregation. Test thoroughly—ZK proofs add latency; profile proving time for your model size. Production use demands audited libraries and no trusted setups where possible.

Comparative analysis reveals trade-offs across frameworks. zkFL excels in aggregator verification but incurs blockchain latency. ByzSFL prioritizes speed, slashing computation by orders of magnitude through client-side weights. ZK-HybridFL decentralizes entirely, trading central efficiency for resilience. Verifiable Fine-Tuning targets LLMs specifically, ensuring auditable dataset commitments.

Comparison of ZK-FL Frameworks

| Framework | Key Feature | Speed Gain | Use Case |

|---|---|---|---|

| zkFL | ZKPs ensure correct aggregator model aggregation without revealing client data; blockchain for proofs. Pros: Protects against malicious aggregators, efficient verification. Cons: Proof generation per round adds overhead. | Efficient zk-SNARK verification (Groth16) | FL with untrusted central aggregator |

| ByzSFL | Byzantine robustness via local aggregation weights and ZKP proofs. Pros: High security, significant efficiency gains. Cons: Requires client-side computation. | ~100x faster than existing solutions | Secure FL resilient to Byzantine faults |

| ZK-HybridFL | Decentralized FL using DAG ledger, sidechains, ZKPs, smart contracts for privacy-preserving validation. Pros: Faster convergence, higher accuracy. Cons: Blockchain complexity. | Faster convergence | Privacy-preserving decentralized FL |

| fedzk | Production-ready Python framework for end-to-end training, proving, verifying with ZKPs. Pros: Easy to deploy, cryptographic guarantees. Cons: Framework-specific dependencies. | N/A | Implementing privacy-preserving FL systems |

These distinctions guide selection: opt for zkFL in regulated environments demanding audit trails; choose ByzSFL for latency-sensitive IoT deployments. My conservative stance favors hybrids blending ZKPs with partial trust models, avoiding full decentralization's overhead until hardware accelerates proving.

Overheads and Mitigation StrategiesBalancing Security with Efficiency

Computational costs loom large. Proving a single aggregation round with Groth16 might take seconds on GPUs, minutes on CPUs, scaling quadratically with parameter count. Verification stays millisecond-fast, a boon for real-time checks. Mitigation tactics include proof recursion, as in Verifiable Fine-Tuning, aggregating proofs hierarchically; hardware accelerators like custom ASICs; or hybrid schemes proving only critical steps.

Privacy leaks via inference attacks persist, even with ZKPs. Proofs attest computation fidelity, not data minimality. Pair with differential privacy for layered defense. Bandwidth strains from proof transmission add up in massive client pools; compress via proof aggregation protocols.

In high-stakes sectors like finance or medicine, where I cut my teeth managing interest rate risks, such verifiability unlocks deployment. Imagine banks federating fraud models across branches: ZKPs prove unbiased aggregation, complying with data sovereignty laws without centralizing sensitive transactions. Yet, pilot rigorously; one overlooked overhead can derail scalability.

Emerging Horizons for ZK-Verified Training

Horizons brighten with recursive SNARKs and quantum-resistant schemes. ZK-HybridFL's DAG-sidechain model hints at blockchain-agnostic futures, where oracles feed proofs into smart contracts for automated payouts on verified contributions. fedzk's modularity invites extensions to vertical FL, where data partitions by features.

Regulatory tailwinds accelerate adoption. EU AI Act mandates high-risk model transparency; ZKPs furnish ai model provenance zk succinctly. Enterprises eyeing privacy preserving ml compliance find in zkFL a compliance engine, logging provable executions immutably. Ultimately, these proofs transform federated learning from trust-minimized to trust-verified. While no panacea, they equip practitioners to navigate distributed AI's perils prudently. Benchmark, iterate, deploy: risk controlled indeed unlocks opportunity in verifiable training.

No comments yet. Be the first to share your thoughts!