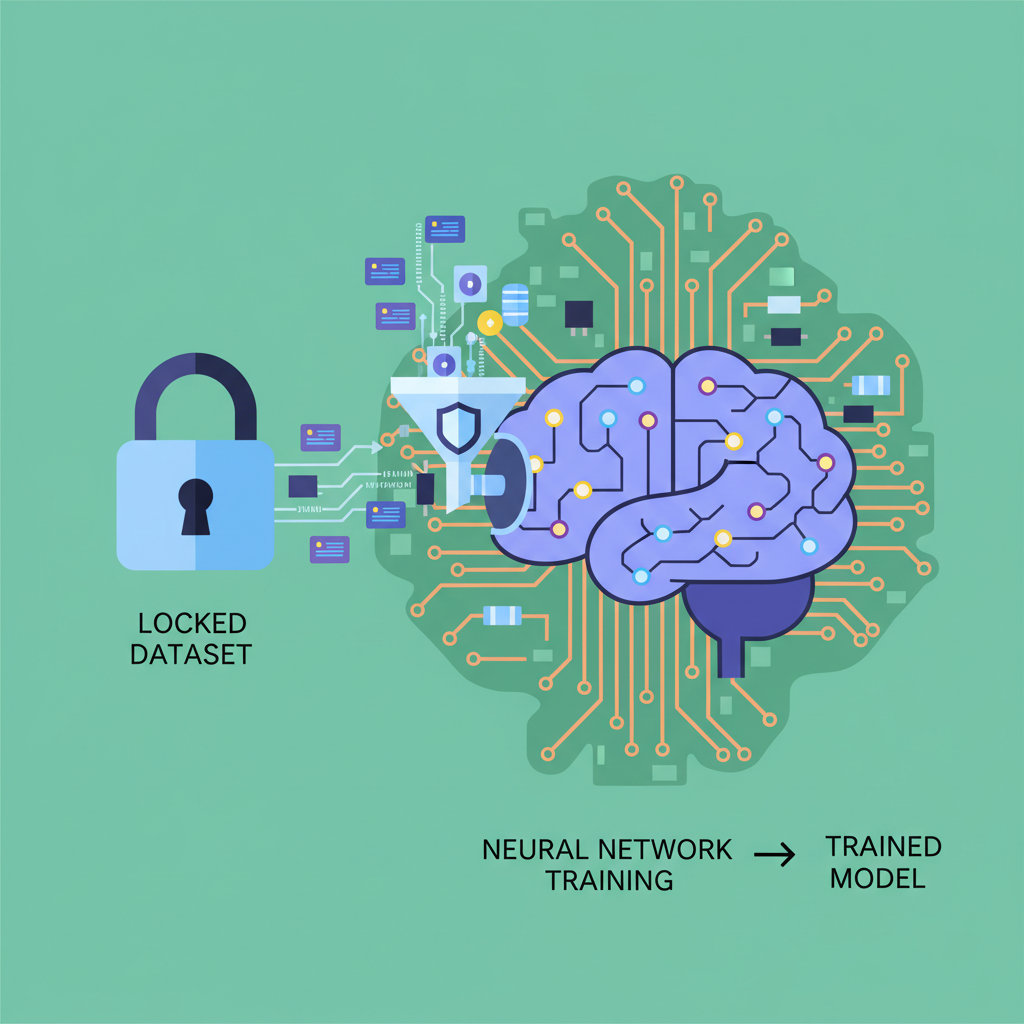

In the rapidly evolving landscape of artificial intelligence, the black box nature of training data has long been a thorn in the side of trust and accountability. Developers release models promising revolutionary capabilities, yet questions linger: what datasets fueled them? Were they ethically sourced, licensed properly, or contaminated with biases? Enter zero-knowledge proofs (ZKPs) for AI training data provenance, a cryptographic leap that verifies origins without spilling sensitive details. This isn't mere theory; recent innovations like ZKPROV demonstrate practical paths forward, balancing privacy with verifiability in ways that could redefine model provenance zk proofs.

Consider the stakes. Healthcare AI models trained on patient records must prove compliance with regulations like HIPAA without exposing raw data. Financial algorithms demand attestation of clean, audited inputs to fend off regulatory scrutiny. Traditional audits falter here, requiring full disclosure that invites breaches or stifles innovation. ZKPs flip the script: they let provers convince verifiers of a statement's truth - say, 'this model trained on authorized datasets' - sans underlying evidence. Succinct, scalable, and sound, these proofs draw from blockchain roots but adapt elegantly to machine learning's computational heft.

Navigating Privacy Pitfalls in Verifiable AI Datasets

The core tension pits data utility against confidentiality. Public datasets like Common Crawl power giants such as GPTs, but proprietary or regulated ones - think genomic sequences or trade secrets - stay siloed. Without verifiable ai datasets, users risk deploying tainted models, eroding faith in AI outputs. ZKPs address this by committing datasets cryptographically: hash them, train atop commitments, then prove the model matches that hash via proof-of-training protocols.

Analytically, this shifts liability. Model deployers issue attestations akin to digital signatures, but exponentially harder to forge. Verifiers check proofs in milliseconds, independent of dataset scale. Skeptics might dismiss overheads, yet optimizations in zk-SNARKs and zk-STARKs slash costs, rendering them viable even for resource-hungry LLMs.

Core Advantages of ZK Proofs

- Privacy Preservation: Verifies AI training data provenance without exposing datasets, as in ZKPROV which binds models to authorized data cryptographically.

- Efficient Verification: Produces succinct proofs of correct training on committed datasets, balancing privacy and speed per zkPoT protocols.

- Compliance Assurance: Ensures regulatory compliance in sectors like healthcare via verifiable integrity, e.g., zkFL-Health for medical AI.

- Bias Auditing without Exposure: Enables bias detection through data commitments and provenance proofs without revealing sensitive information.

- Scalable for Large Models: Supports efficient proofs for LLMs and deep networks, as shown in Verifiable Fine-Tuning with practical performance.

ZKPROV: Binding Models to Datasets Cryptographically

At the vanguard stands ZKPROV, a framework binding training datasets, model parameters, and even responses through ZKPs. Detailed in recent arXiv preprints, it cryptographically ties a trained LLM to authorized inputs, dodging exhaustive per-sample proofs that balloon computation. Experimental benchmarks reveal proofs generated in hours, not days, with verification near-instantaneous - a boon for production pipelines.

This approach resonates because it sidesteps common pitfalls. No need to reprove every epoch; aggregate commitments suffice. Harvard-affiliated research underscores avoiding 'proof of every training step, ' focusing instead on holistic fidelity. In practice, imagine an enterprise fine-tuning on licensed corpora: ZKPROV attests adherence sans revealing trade secrets, fostering collaborations that privacy fears once chilled.

zkPoT and Beyond: Proving Training Integrity Succinctly

Complementing ZKPROV, zero-knowledge proofs of training (zkPoT) empower parties to certify correct execution on committed datasets. ePrint and ACM works detail zkPoT for deep neural networks: commit model and data, train, prove. No dataset leakage, no architecture spoilers. This proves pivotal for zero knowledge training data attestation, where auditors confirm processes sans internals.

Push further: zkFL-Health merges federated learning with ZKPs and TEEs for medical AI. Collaborative training across hospitals yields verifiably correct updates, confidentiality intact. Verifiable Fine-Tuning protocols add succinct proofs for policy-enforced runs, curbing quota violations. These aren't isolated; they signal convergence. A16z notes ZKPs scaling compute off-chain; Kudelski's ZKML verifies procedures per spec. CSA highlights integrity checks sans exposure.

Opinionated take: while hype swirls around generative AI, true durability hinges on such primitives. Without them, privacy preserving model verification remains aspirational. Deployers gain audit trails; users, confidence. Yet challenges persist - proof sizes, recursion for LLMs. Ongoing tweaks, like STARK-friendly circuits, promise mitigation.

Practical deployment demands more than proofs on paper; it requires streamlined workflows that integrate seamlessly into ML pipelines. Frameworks like ZKPROV prioritize efficiency, generating succinct proofs that scale with model size without exponential cost hikes. This analytical edge positions zk proofs ai training data as indispensable for enterprises navigating data sovereignty laws like GDPR or emerging AI acts.

Once armed with such a proof, stakeholders verify compliance in seconds. Auditors scan for dataset origins, regulators confirm licensing, all while data vaults remain sealed. This workflow not only mitigates risks but unlocks novel business models - think licensed dataset marketplaces where proofs serve as trust anchors, enabling fractional ownership without exposure fears.

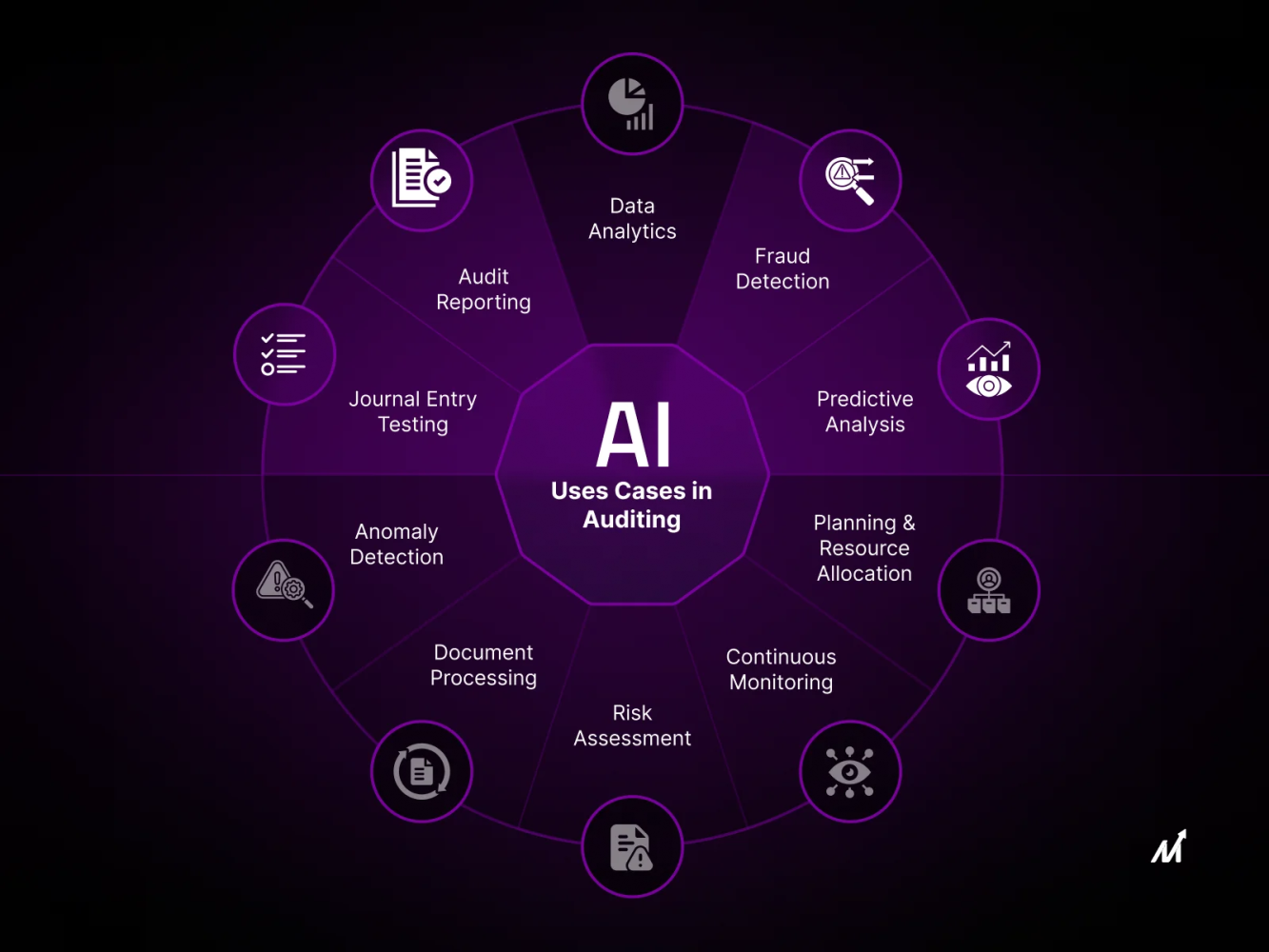

Industry Applications: From Healthcare to Finance

Sector-specific adaptations amplify impact. In healthcare, zkFL-Health fuses ZKPs with federated learning, letting hospitals collaborate on models without sharing patient records. Proofs guarantee update integrity, paving regulatory paths for clinical tools. Finance leverages similar for verifiable ai datasets: banks attest algorithmic fairness on audited transaction logs, dodging bias lawsuits via cryptographic receipts.

Genomics firms, too, stand to gain. Prove a model trained on proprietary sequences for drug discovery, license it broadly, retain IP. These aren't hypotheticals; arXiv prototypes like Verifiable Fine-Tuning enforce quotas on private samples, curbing leakage to near-zero. My view: industries slow to adopt risk commoditization - open models flood markets, proprietary edges erode without provenance shields.

Key ZKP Frameworks for AI Training

| Core Feature | Privacy Level | Proof Time | Ideal Use Case |

|---|---|---|---|

| ZKPROV | Binds trained model to authorized datasets via ZKPs, no data or parameter disclosure | Efficient and scalable (practical for real-world LLMs) | Verifying LLM training provenance in sensitive sectors |

| zkPoT | Proves correct training of committed model on committed dataset without revealing data | Practical for deep neural networks | Verifying DNN training integrity without dataset exposure |

| zkFL-Health | Combines FL, ZKPs, and TEEs for verifiable collaborative medical AI training | Strong confidentiality, no client data exposure | Privacy-preserving healthcare AI federated learning |

| Verifiable Fine-Tuning | Succinct ZKPs for model from public init, training program, and dataset commitment | Practical with tight budgets and no leakage | Policy-enforced fine-tuning with auditable provenance |

Comparative scrutiny reveals strengths. ZKPROV excels in LLM-scale binding; zkPoT prioritizes succinctness for DNNs. Hybrids emerge, blending TEEs for speed, ZK for auditability. Yet, no silver bullet - proof recursion for billion-parameter models strains hardware, though GPU accelerations and recursive SNARKs erode barriers.

Overcoming Hurdles: Scalability and Adoption Realities

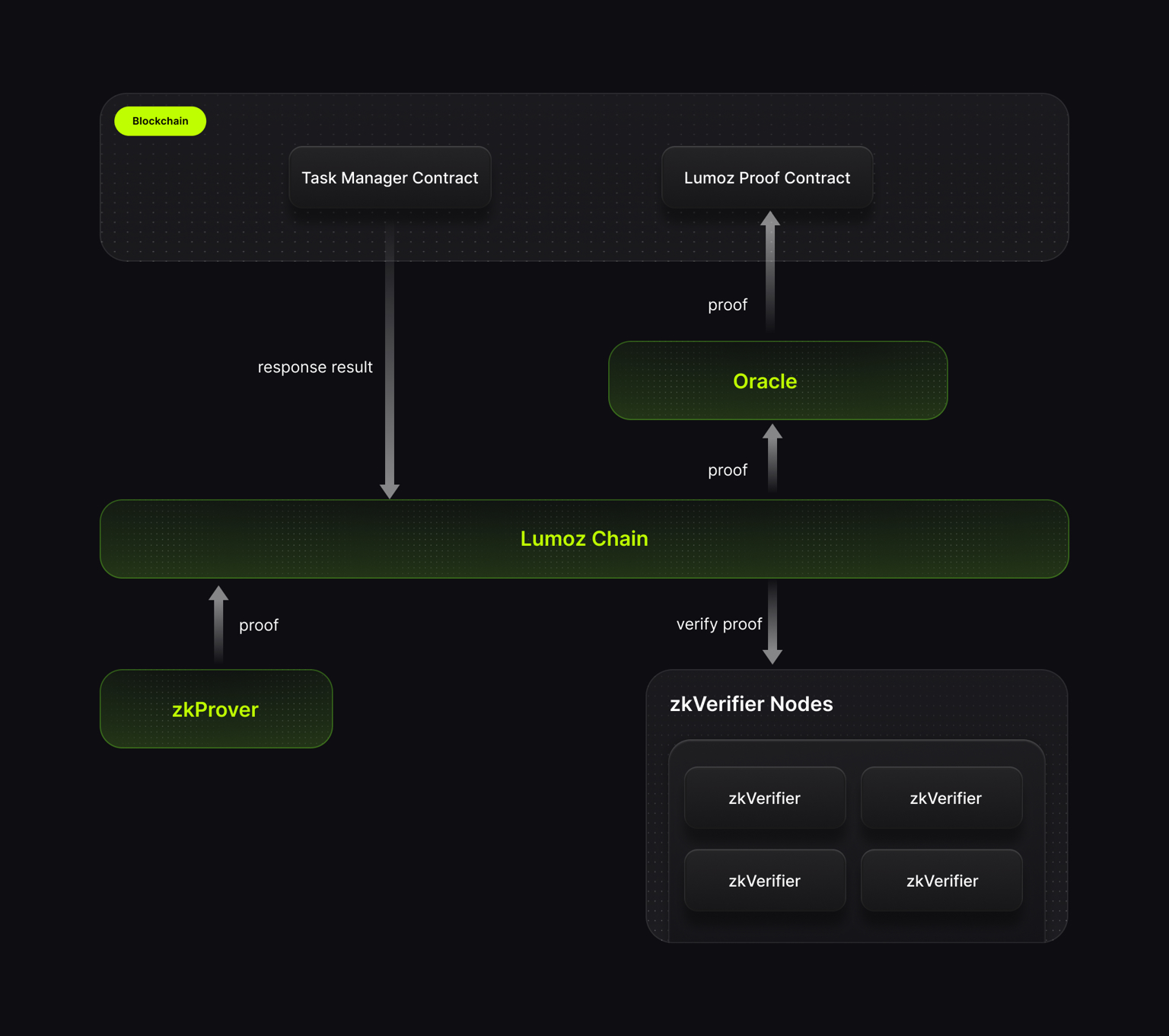

Critics highlight compute tolls: generating proofs rivals training runs. Counterpoint - optimizations like STARKware's Cairo or Polygon zkEVM slash latencies 10x. Economic incentives align too; blockchain integrations monetize proofs via oracles, turning verification into revenue streams. Enterprises weigh this against breach costs - Equifax-scale incidents dwarf ZKP overheads.

Adoption hinges on tooling. Open-source libraries from Modulus Labs or RISC Zero democratize access, bundling circuit design with ML frameworks. Early movers like Telefónica Tech prototype secure AI stacks; Orochi Network fuses ZK with privacy-preserving inference. Skepticism fades as benchmarks prove: Cloud Security Alliance validations show model integrity sans architecture leaks.

Forward-looking, expect ZK-native ML platforms where provenance proofs embed by default. Datasets gain NFT-like attestations, models chain to lineages. This cryptographic scaffolding fortifies AI against deepfakes, hallucinations rooted in dirty data. Ultimately, model provenance zk proofs don't just verify; they cultivate ecosystems where trust compounds, innovation accelerates. Developers wielding these tools won't merely build models - they'll forge unbreakable reputations in an era demanding transparency behind closed doors.

No comments yet. Be the first to share your thoughts!