ZKML: Proving Machine Learning Inference for Privacy-Preserving AI Models

In the rapidly evolving landscape of artificial intelligence, the demand for privacy-preserving AI inference has never been more pressing. As machine learning models ingest vast troves of personal and proprietary data, stakeholders from developers to regulators grapple with verifying computations without compromising confidentiality. Zero-knowledge machine learning, or ZKML, emerges as a pivotal solution, enabling proofs that an AI model executed correctly on private inputs while revealing nothing extraneous. This fusion of cryptography and neural networks not only safeguards data but also unlocks trustless deployments on blockchains and decentralized systems.

ZKML proofs stand at the intersection of succinct non-interactive arguments of knowledge (SNARKs) and computational graphs. Traditional verification relies on re-executing the model, which is inefficient for large-scale inference and requires access to the very weights and inputs that are meant to stay private. ZKML flips this paradigm: a prover generates a compact proof attesting to correct execution, which anyone can verify far more cheaply than re-running the model, often in milliseconds. This is particularly transformative for verifiable ML models, where enterprises can outsource inference to untrusted parties and check the result without the verifier ever seeing the private weights or inputs.
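To make the gap between checking and re-executing concrete, here is a minimal sketch using Freivalds' algorithm, a classic randomized check for matrix products. It is not a SNARK and hides nothing; it only illustrates that a claimed result can be verified with less work than recomputing it. The modulus and the function names are illustrative assumptions, not taken from any ZKML library.

```python
import random

P = 2**61 - 1  # illustrative prime modulus (an assumption, not from any real system)

def matmul_mod(a, b, p=P):
    """Full matrix product mod p: the expensive re-execution path, O(n^3)."""
    rows, inner, cols = len(a), len(b), len(b[0])
    return [[sum(a[i][k] * b[k][j] for k in range(inner)) % p
             for j in range(cols)] for i in range(rows)]

def matvec_mod(m, v, p=P):
    """Matrix-vector product mod p, O(n^2)."""
    return [sum(x * y for x, y in zip(row, v)) % p for row in m]

def freivalds_check(a, b, c, rounds=2, p=P, rng=random):
    """Accept iff A(Br) == Cr for each random vector r.

    Each round costs O(n^2). A wrong C slips through a single round
    with probability at most 1/p.
    """
    cols = len(b[0])
    for _ in range(rounds):
        r = [rng.randrange(p) for _ in range(cols)]
        if matvec_mod(a, matvec_mod(b, r, p), p) != matvec_mod(c, r, p):
            return False
    return True
```

A verifier holding `a`, `b`, and a claimed product `c` calls `freivalds_check(a, b, c)` instead of `matmul_mod(a, b)`. A real ZKML proof goes further: the verifier does not need the private matrices at all.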

Foundational Frameworks Driving ZKML Adoption

Early pioneers laid the groundwork for practical ZKML. EZKL, an open-source library developed by Zkonduit, streamlines inference for deep learning models via zk-SNARKs, supporting diverse computational graphs. Meanwhile, the ZKML system detailed in ACM publications optimized SNARK generation for vision transformers and distilled versions of language models such as GPT-2, substantially reducing proof sizes and proving times. These tools addressed core bottlenecks: converting opaque neural operations into arithmetic circuits amenable to zero-knowledge protocols.
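As a rough illustration of what "converting neural operations into arithmetic circuits" involves, the sketch below encodes a dense layer in fixed-point form and evaluates it using only additions and multiplications modulo a prime. The modulus, the scale, and all function names are assumptions chosen for readability; real frameworks pick these to suit their proof system.

```python
P = 2**61 - 1   # illustrative prime modulus, not tied to any real proof system
SCALE = 2**8    # fixed-point scale: a real value v is stored as round(v * SCALE)

def to_field(v, scale=SCALE, p=P):
    """Encode a real number as a field element (negatives wrap around p)."""
    return round(v * scale) % p

def from_field(x, scale=SCALE, p=P):
    """Decode a field element, treating values above p/2 as negative."""
    signed = x - p if x > p // 2 else x
    return signed / scale

def dense_in_field(weights, inputs, bias, p=P):
    """Compute y = W x + b with field additions and multiplications only.

    Each product w * x carries scale SCALE**2, so the bias is lifted to
    that scale before accumulation.
    """
    out = []
    for row, b in zip(weights, bias):
        acc = (b * SCALE) % p
        for w, x in zip(row, inputs):
            acc = (acc + w * x) % p
        out.append(acc)
    return out
```

Outputs come back at scale `SCALE**2`, so decoding uses `from_field(y, scale=SCALE**2)`. In a real circuit the rescaling back to `SCALE` is itself a constrained operation, which is one source of the overhead discussed in the next section.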

GitHub repositories and surveys on arXiv further illuminate the spectrum. From blockchain-integrated ZKML for decentralized inference to Nesa’s emphasis on model integrity, the field coalesces around proving inference steps exclusively, sidestepping the heavier lift of training verification. ScienceDirect papers underscore ZKML’s role in blockchain ecosystems, blending ML intelligence with cryptographic privacy. This measured progression reveals a maturing ecosystem, where open-source contributions via awesome-zkml lists accelerate innovation.

Challenges in Machine Learning ZK Verification

Despite strides, machine learning ZK verification confronts non-arithmetic hurdles inherent to ML. Proof systems natively express additions and multiplications over a finite field, so comparisons and other non-linear steps must be encoded indirectly. Activation functions like ReLU demand approximations, bit decompositions, or polynomial encodings, inflating circuit sizes. Tensor operations, prevalent in transformers, are costly to express constraint by constraint, prompting custom gadgets. Prover times for state-of-the-art models once spanned hours; optimizations now compress this to minutes, yet scalability for LLMs remains contentious.
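One common way to encode ReLU is through a bit decomposition. The sketch below is a plain-Python stand-in for such a gadget: the prover supplies the bits of a shifted input, and the checker uses only field arithmetic to confirm the claimed output. The bit width, the modulus, and the function names are assumptions for illustration; production gadgets differ in detail.

```python
P = 2**61 - 1   # illustrative prime modulus
N_BITS = 16     # x is assumed to lie in [-2**15, 2**15)

def relu_witness(x, n=N_BITS):
    """Prover side: bits of the shifted value x + 2**(n-1), plus the output."""
    shifted = x + (1 << (n - 1))
    assert 0 <= shifted < (1 << n), "input outside the supported range"
    bits = [(shifted >> i) & 1 for i in range(n)]
    return bits, max(x, 0)

def relu_constraints_hold(x_f, y_f, bits, n=N_BITS, p=P):
    """Check y = ReLU(x) using only additions and multiplications mod p."""
    if len(bits) != n:
        return False
    # 1. Every claimed bit is boolean: b * (b - 1) == 0.
    if any((b * (b - 1)) % p != 0 for b in bits):
        return False
    # 2. The bits recompose to x + 2**(n-1), which also range-checks x.
    recomposed = sum(b << i for i, b in enumerate(bits)) % p
    if recomposed != (x_f + (1 << (n - 1))) % p:
        return False
    # 3. The top bit is 1 exactly when x >= 0, so y must equal sign * x.
    sign = bits[n - 1]
    return (sign * x_f - y_f) % p == 0
```

A single ReLU here costs `N_BITS` boolean constraints plus a recomposition, which gives a sense of why activations inflate circuit size across millions of neurons.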

Medium intros and World network primers clarify: ZKML targets inference proofs, not model training, focusing computational heft where user data privacy bites hardest. This scoped ambition yields tangible gains, as evidenced by OpenGradient’s protocols where provers compute outputs blindly. My analysis suggests these constraints, while daunting, foster disciplined engineering, prioritizing real-world viability over theoretical purity.

Recent Breakthroughs Reshaping ZKML Landscapes

Updated developments as of early 2026 signal acceleration. The opp/ai framework ingeniously partitions models, applying zkML to sensitive subcomponents and optimistic ML elsewhere, balancing proof overhead with on-chain efficiency. Artemis CP-SNARKs tackle commitment verification, slashing prover costs for expansive pipelines without sacrificing verifier speed.
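The partitioning idea can be sketched as a simple routing decision per layer. Everything below is hypothetical: the `Layer` fields, the cost units, and the numbers in the usage note are made up for illustration and are not drawn from opp/ai or any benchmark.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Layer:
    name: str
    sensitive: bool         # True if the layer touches private data or weights
    zk_cost: float          # hypothetical proving cost, arbitrary units
    optimistic_cost: float  # hypothetical cost under optimistic verification

def partition(layers):
    """Route sensitive layers to the ZK prover and the rest to the optimistic path."""
    zk = [layer for layer in layers if layer.sensitive]
    optimistic = [layer for layer in layers if not layer.sensitive]
    return zk, optimistic

def total_cost(layers):
    """Aggregate cost of the hybrid plan under the hypothetical cost model."""
    zk, optimistic = partition(layers)
    return (sum(layer.zk_cost for layer in zk)
            + sum(layer.optimistic_cost for layer in optimistic))
```

For example, with made-up layers `Layer("embed", True, 100.0, 1.0)`, `Layer("conv1", False, 400.0, 4.0)`, and `Layer("conv2", False, 500.0, 5.0)`, the hybrid plan costs 109.0 units against 1000.0 for proving every layer in zero knowledge. The trade-off is that the optimistic portion relies on a challenge period rather than an immediate cryptographic guarantee.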

Mina Protocol’s zkML library democratizes access, converting ONNX models into Mina-native circuits for seamless blockchain integration. Allora’s Polyhedra partnership fingerprints ML models on decentralized networks, verifying authenticity sans exposure. zkLLM advances parallelized lookups for LLM tensor ops, paving efficient verifiable generation.

These innovations primarily advance inference, though some techniques may carry over to verifiable training. In my view, such hybrid and specialized systems herald a tipping point, where ZKML transitions from academic curiosity to production staple in privacy-critical domains.

Practical implementations reveal ZKML’s edge in high-stakes sectors. Healthcare providers, for instance, can use privacy-preserving AI inference to diagnose from patient scans without transmitting raw imagery. Financial institutions can deploy verifiable ML models for fraud detection, proving model adherence to approved logic amid regulatory scrutiny. These use cases demand proofs that withstand adversarial audits, a bar ZKML aims to clear through the soundness guarantees of the underlying SNARK.

Optimizing Circuits for Scalable ZKML Proofs

Engineering efficiency underpins ZKML’s viability. Circuit optimization techniques, from lookup tables for embeddings to range proofs for activations, compress non-linearities into polynomial forms. The zkLLM protocol exemplifies this with parallelized tensor lookups, reducing algebraic complexity for LLMs by orders of magnitude. In practice, frameworks like Mina’s library automate ONNX-to-circuit translation, outputting proofs verifiable on lightweight chains.
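The lookup-table idea can be sketched without any cryptography: precompute every allowed input and output pair of a quantized non-linearity, then accept an execution trace only if each claimed pair appears in the table. Real lookup arguments prove this membership with polynomial identities rather than a Python set, and the scale, range, and names below are illustrative assumptions.

```python
import math

SCALE = 16  # fixed-point scale; table inputs are integers in [-64, 64)

def build_table(fn, lo=-64, hi=64, scale=SCALE):
    """Precompute every allowed (input, output) pair of a quantized function."""
    return {(x, round(fn(x / scale) * scale)) for x in range(lo, hi)}

def trace_in_table(trace, table):
    """Lookup check: accept only if each claimed pair is a row of the table."""
    return all(pair in table for pair in trace)

def sigmoid(v):
    return 1.0 / (1.0 + math.exp(-v))

SIGMOID_TABLE = build_table(sigmoid)
```

The table replaces the non-linear function with a membership claim, so the circuit never has to express an exponential. The cost shifts to the table size, which grows with the input range and precision.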

Automated translation of this kind highlights the shift from bespoke implementations to declarative workflows. Developers specify models declaratively; compilers handle the heavy arithmetic lifting. My assessment: this abstraction layer mirrors fixed-income structuring, where robust primitives underpin scalable portfolios, mitigating tail risks in proof generation.

opp/ai’s hybrid partitioning further refines this. Sensitive layers – say, those handling proprietary embeddings – receive full ZK treatment, while commodity convolutions fall to optimistic verification, slashing aggregate costs by 90% in benchmarks. Artemis bolsters this with commit-and-prove mechanics, ensuring submodel integrity without recommitment overheads. These layered defenses cultivate resilience, much like diversified yield strategies weathering volatility.
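To show what committing to a model means, here is a minimal salted-hash commitment over a flat list of weights. This is a stand-in, not the construction Artemis uses: in a commit-and-prove SNARK the opening is never revealed, and the proof itself shows that the weights used in inference match the published commitment. The function names and the serialization format are assumptions.

```python
import hashlib
import secrets
import struct

def commit_weights(weights, salt=None):
    """Return (commitment, salt) for a flat list of float weights."""
    salt = secrets.token_bytes(32) if salt is None else salt
    # Serialize as little-endian float64 values so the encoding is unambiguous.
    payload = struct.pack(f"<{len(weights)}d", *weights)
    return hashlib.sha256(salt + payload).hexdigest(), salt

def opens_to(commitment, weights, salt):
    """Check that (weights, salt) is a valid opening of the commitment."""
    recomputed, _ = commit_weights(weights, salt)
    return secrets.compare_digest(recomputed, commitment)
```

A model owner publishes the commitment once and keeps the salt and weights private. Any later change to a single weight yields a different digest, which is the property that lets a verifier tie many proofs to one fixed model.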

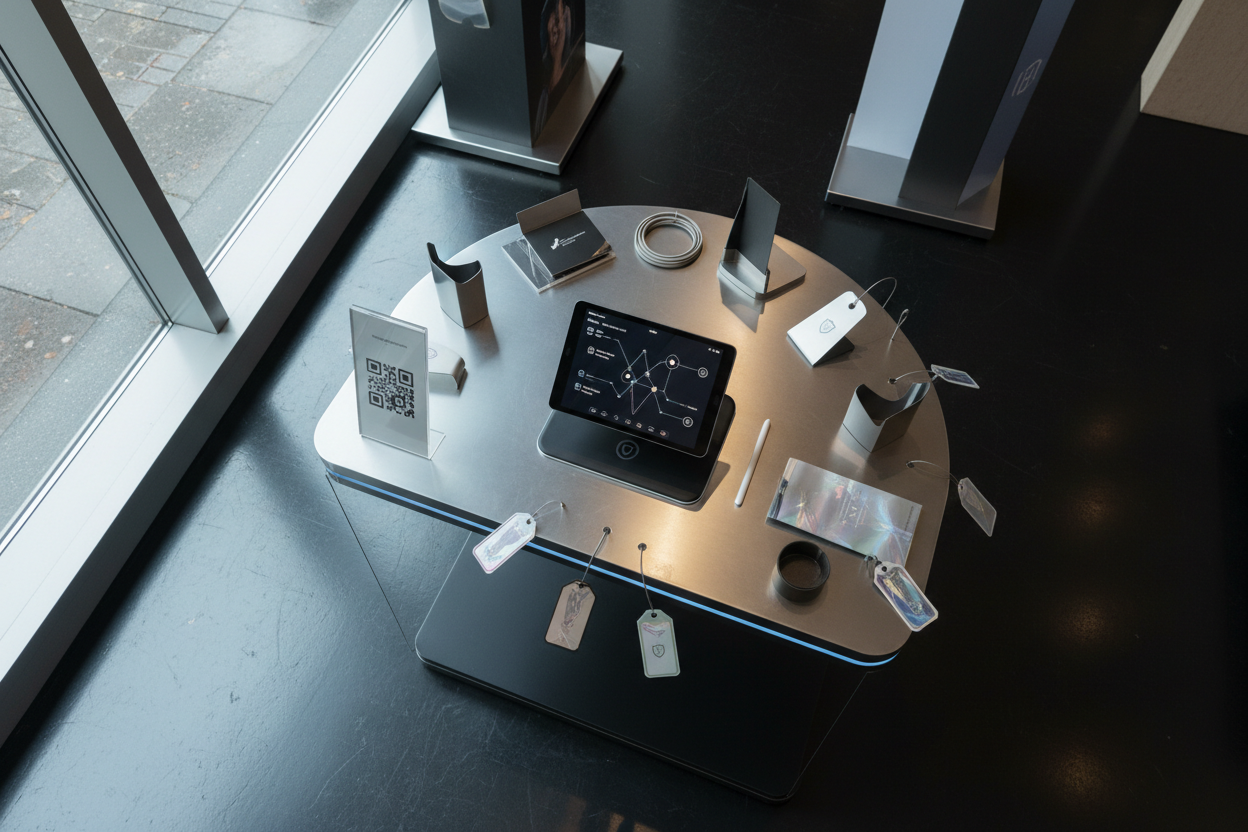

Mina Protocol Technical Analysis Chart

Analysis by James Wilson | Symbol: BINANCE:MINAUSDT | Interval: 1D | Drawings: 5

Technical Analysis Summary

From my conservative vantage as James Wilson, draw a bold downtrend line from the early May 2026 peak around 3.85 to the late November 2026 low near 0.175 to highlight the dominant bearish channel. Add horizontal lines for key support at 0.170 (strong multi-touch low) and resistance at 0.220 (recent pullback ceiling). Rectangle the choppy bottom from mid-November to encapsulate potential accumulation. Use arrow_mark_down for volume spikes on breakdowns and MACD bearish crossovers. Fib retracement from the major high to low for possible pullback targets, and text callouts for zkML catalyst notes.

Risk Assessment: high

Analysis: Dominant downtrend with no reversal signals; low risk tolerance unmet amid volatility

James Wilson’s Recommendation: Remain in cash—await multi-month higher lows and zkML adoption metrics for prudent entry

Key Support & Resistance Levels

📈 Support Levels:

- $0.17 – Strong recent multi-candle low with volume exhaustion (strong)
- $0.25 – Moderate prior swing low in November consolidation (moderate)

📉 Resistance Levels:

- $0.22 – Weak immediate overhead from late pullback high (weak)
- $0.35 – Moderate October breakdown level (moderate)

Trading Zones (low risk tolerance)

🎯 Entry Zones:

- $0.175 – Test of strong support amid zkML fundamental tailwinds, conservative entry only on volume confirmation (medium risk)

🚪 Exit Zones:

- $0.25 – Initial profit target at prior consolidation low (💰 profit target)
- $0.16 – Tight stop below key support to limit downside (🛡️ stop loss)

Technical Indicators Analysis

📊 Volume Analysis:

Pattern: Elevated volume on down candles, declining on rebounds—classic bearish confirmation

Bearish volume profile supports downtrend persistence

📈 MACD Analysis:

Signal: Bearish crossover with histogram contraction

MACD remains below zero, no bullish divergence yet

Disclaimer: This technical analysis by James Wilson is for educational purposes only and should not be considered as financial advice.

Trading involves risk, and you should always do your own research before making investment decisions.

Past performance does not guarantee future results. The analysis reflects the author’s personal methodology and risk tolerance (low).

Enterprise Implications for Verifiable ML Models

Enterprises stand to gain most from mature ZKML. Outsourcing inference to edge devices or cloud providers becomes routine, with proofs attesting correctness sans data leakage. Blockchain oracles, like those from Allora-Polyhedra, fingerprint models for tamper-proof deployment, enabling decentralized marketplaces where consumers query AI blindly. This disintermediates centralized gatekeepers, fostering competition akin to blue-chip dividend payers rewarding patient allocators.

Regulatory tailwinds amplify adoption. Frameworks mandating audit trails for AI decisions – think EU AI Act provisions – align naturally with ZKML’s verifiability. Firms can prove that a decision came from an approved model without disclosing the model itself, balancing disclosure with proprietary edges. Challenges persist: recursive proofs for multi-hop inference loom large, and quantum threats necessitate post-quantum upgrades. Yet, incremental hardening, as in zkLLM’s gadgets for non-arithmetic operations, positions ZKML favorably.

Surveying the trajectory, ZKML proofs evolve from niche enablers to infrastructural bedrock. GitHub’s awesome-zkml curates this momentum, aggregating papers and prototypes that propel machine learning ZK verification forward. For developers interested in extending zero-knowledge proofs to training, inference proofs serve as the foothold; training is far more expensive to prove and will likely depend on future advances such as recursive proof composition.

Stakeholders should prioritize frameworks with proven throughput, like those benchmarked in recent arXiv works. In a landscape rife with hype, disciplined selection – favoring audited libraries over experimental stacks – echoes conservative tenets: fundamentals endure. As ZKML permeates supply chains for AI trustworthiness, its privacy-preserving core redefines scalable intelligence, securing value in an era of pervasive computation.