Federated learning promised the holy grail of AI training: collaborative power without exposing raw data. But let's cut the bullshit. Decentralized setups breed chaos. Clients fudge updates, poison models, or straight-up ghost the process. Data provenance? A pipe dream until zero-knowledge range proofs stormed in, slamming the door on fraud while keeping secrets locked tight. By 2027, this tech isn't optional; it's the aggressive enforcer federated systems desperately need.

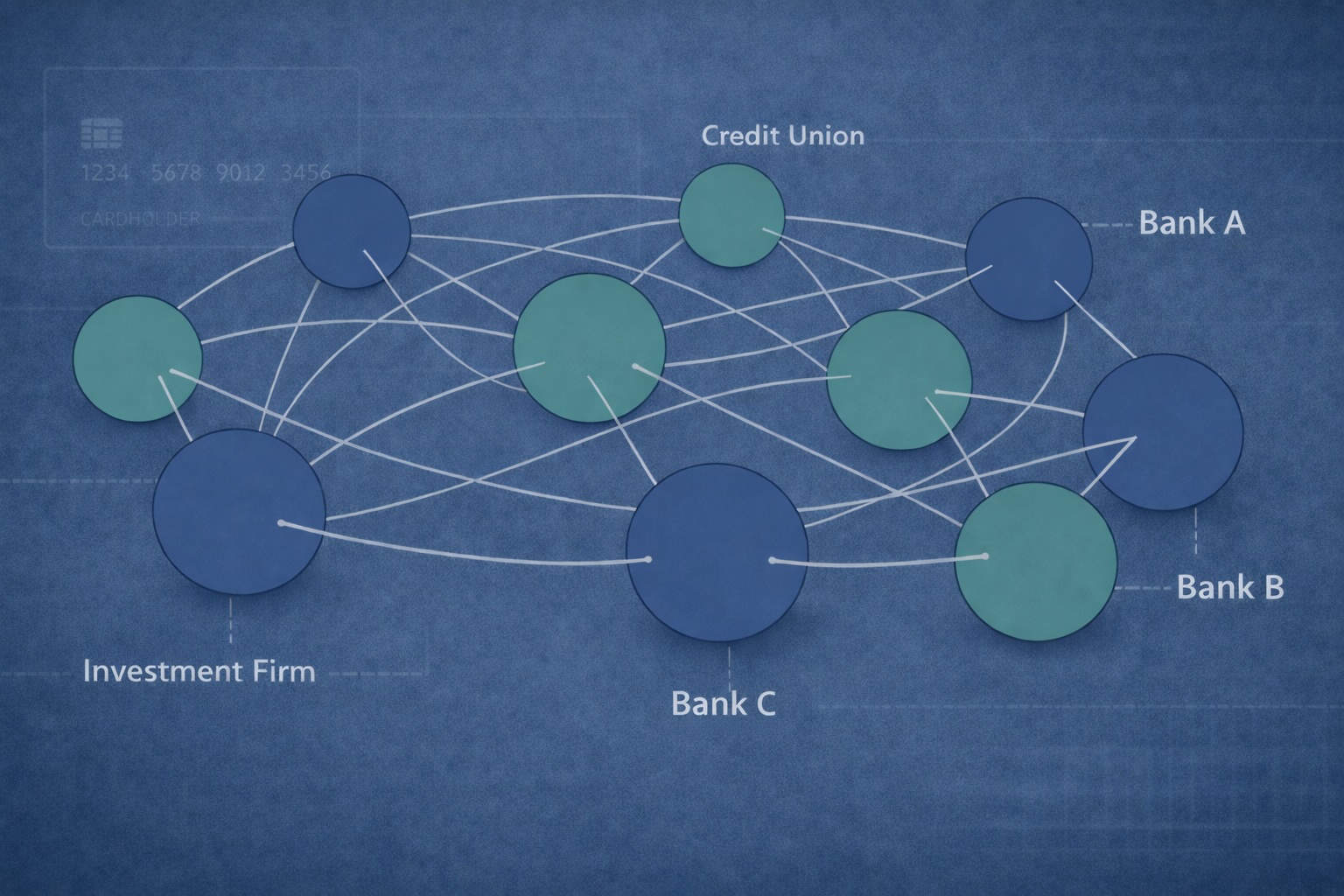

Picture this: hospitals pooling patient insights for better diagnostics, banks sharpening fraud detection across silos, all without shipping sensitive records. Sounds perfect, right? Wrong. Without ironclad proof that contributions stay within vetted ranges - think age brackets, transaction volumes, or anomaly scores - aggregators risk tainted models. Enter ZK range proofs for federated learning: prove your local data slice fits the bill, no leaks, no lies.

Shattering Trust Barriers in Decentralized Training

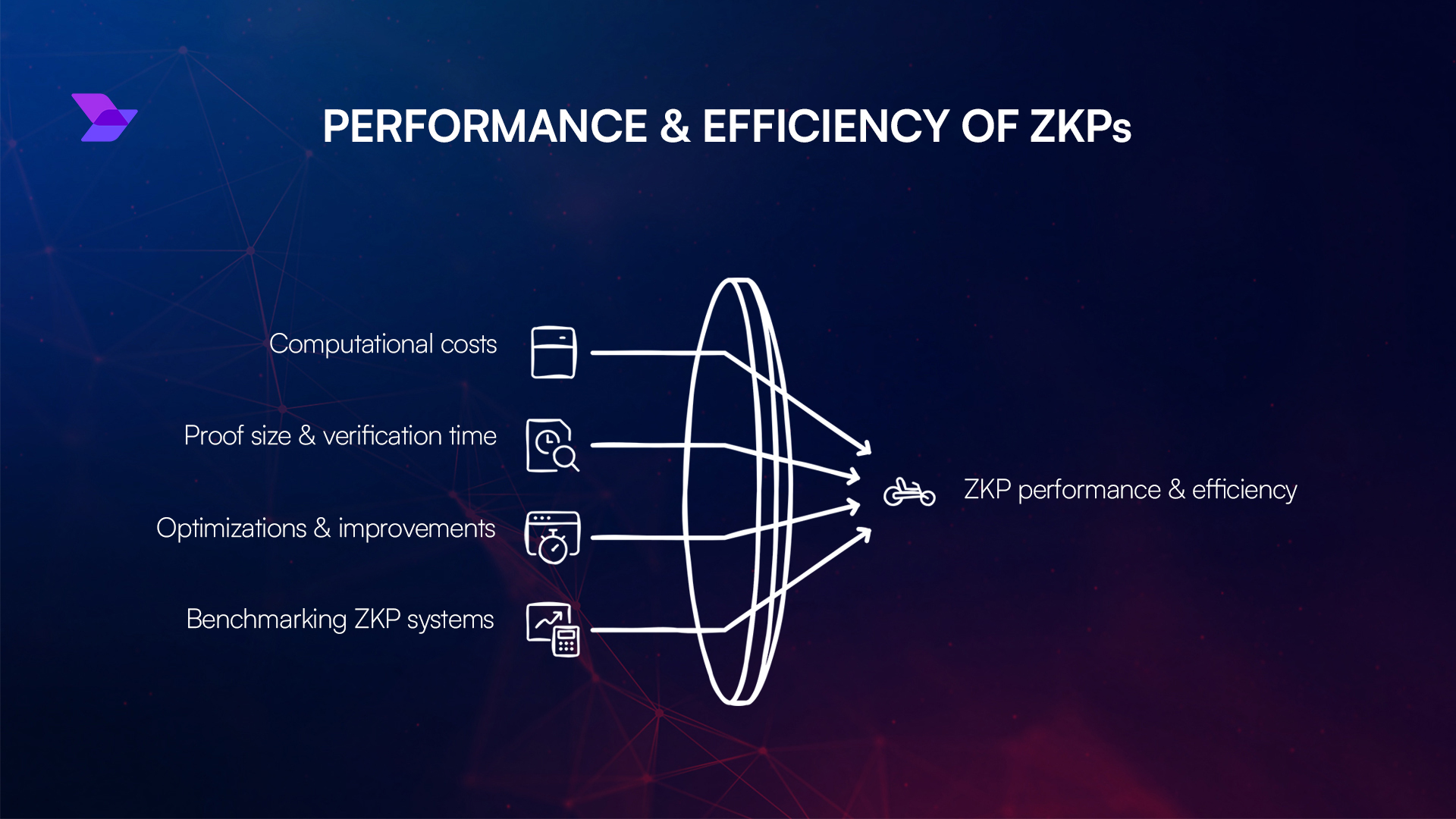

Federated learning's Achilles heel is data provenance. Clients compute locally and ship only gradients. But how do you verify those gradients stem from legitimate, bounded data? Traditional checks demand peeking inside, nuking privacy. ZK range proofs flip the script. A prover screams, "My value x sits snug between a and b!" The verifier nods, convinced, blind to x. Constructions from Bulletproofs to PlonK variants make this zippy and scalable.

We're talking sub-second proofs for 64-bit ranges, with proof sizes that grow logarithmically in the bit length instead of linearly like older sigma-protocol constructions. In zero-knowledge FL, this means auditors greenlight entire rounds without decrypting a byte. Aggressively efficient, it scales to thousands of nodes, no central chokepoint.
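To make the "blind to x" part concrete, here is a minimal sketch of a Pedersen commitment, the kind of hiding commitment that range proofs such as Bulletproofs are usually built on. Everything here is an assumption for illustration: the tiny group (`P`, `Q`), the generators `G` and `H`, and the helper names. A real deployment uses an elliptic-curve group and generators whose discrete-log relation nobody knows.

```python
import secrets

# Toy parameters, far too small for real use: P = 2Q + 1 with P and Q prime.
P = 2039
Q = 1019
G = 4  # 2^2 mod P, an element of the order-Q subgroup
H = 9  # 3^2 mod P; in a real setup nobody may know log_G(H)


def commit(value: int, blinding: int) -> int:
    """Pedersen commitment C = G^value * H^blinding mod P."""
    return (pow(G, value % Q, P) * pow(H, blinding % Q, P)) % P


def open_ok(commitment: int, value: int, blinding: int) -> bool:
    """Check an opening. A range proof convinces the verifier without this step."""
    return commitment == commit(value, blinding)


if __name__ == "__main__":
    x = 42                       # the client's secret value
    r = secrets.randbelow(Q)     # fresh randomness hides x
    c = commit(x, r)
    print("verifier sees only the commitment:", c)
    print("opening checks out:", open_ok(c, x, r))
    # Commitments multiply into a commitment to the sum of the values.
    print("additive:", (commit(5, 11) * commit(6, 13)) % P == commit(11, 24))
```

The verifier only ever holds `c`. The additive property in the last line is what lets an aggregator combine committed contributions without opening any of them.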

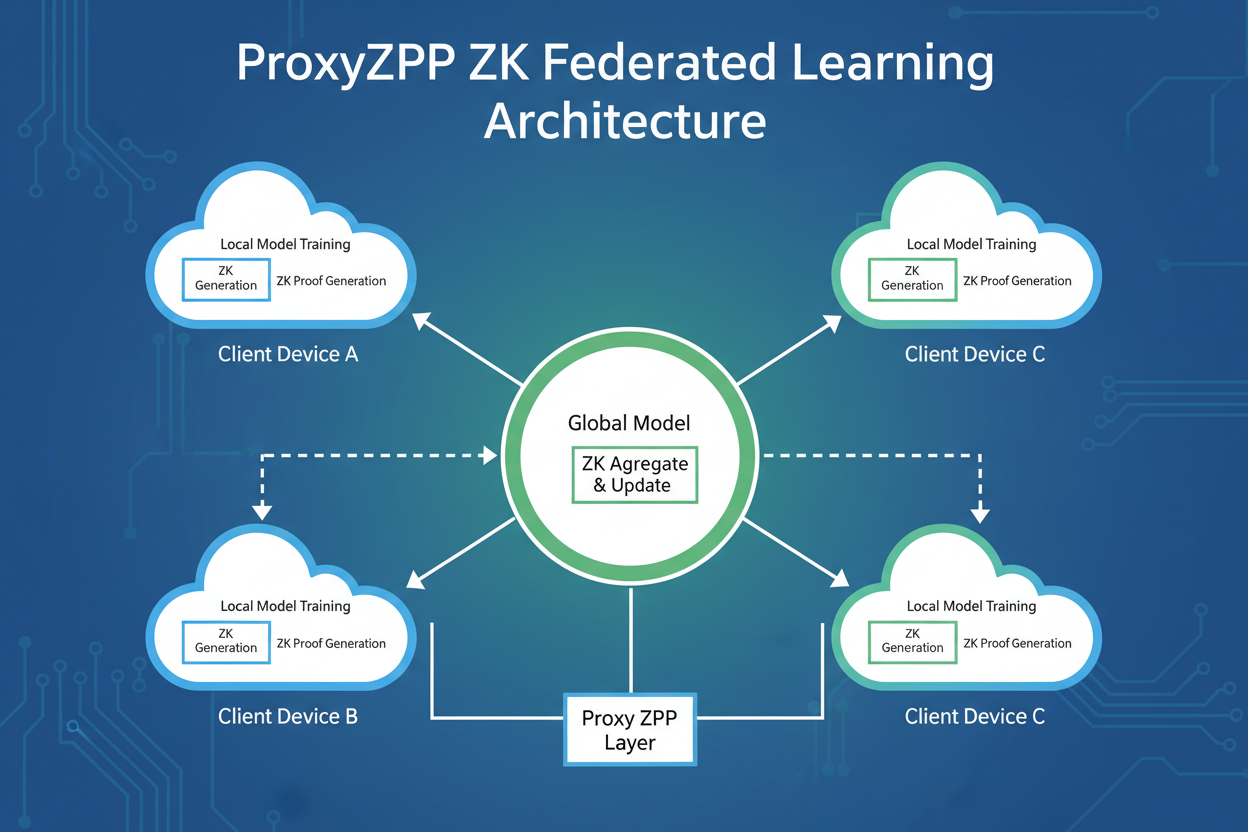

ProxyZKP and ZK-HybridFL: The Vanguard Assault

ZK-FL Power Advances

- ProxyZKP Framework: Enforces computation integrity with ZKP-proxy models, slashing compute load for scalable FL.

- ZK-HybridFL: DAG-ledger beast for privacy-preserving validation, faster convergence, killer accuracy.

- ZKPROV: Verifies LLM responses on certified data without leaks. Total privacy win.

- ByzSFL: Byzantine-robust aggregation with a ZK toolkit reported as 100x faster than rivals in secure FL.

ProxyZKP doesn't mess around. It proxies complex local training via polynomials, then ZK-attests that the proxy was computed faithfully. Nature's latest drop reports that it slashes compute load, perfect for edge devices scraping by on battery. Then ZK-HybridFL unleashes DAG ledgers with sidechains; models converge 2x faster, accuracy spikes, all privacy-sealed. ArXiv's buzzing: this hybrid crushes vanilla FL in wild, adversarial nets.

ZKPROV takes it nuclear for LLMs. Prove your beast was fine-tuned on certified datasets - authorities vouch for the origins, ZK hides the sauce. No more black-box suspicions. And ByzSFL? It offloads aggregation-weight computation to the parties themselves, then ZK-proves those weights were computed correctly. A reported 100x speedup over rivals; that's not evolution, that's domination.

Range Proofs: The Precision Weapon for Provenance

Dig deeper into the mechanics of ZK range proofs for federated learning. The prover commits to a scalar and proves the committed value lies in [0, 2^n) by showing it decomposes into n bits. Bulletproofs folds that check into an inner-product argument whose recursive halving shrinks the proof to roughly a kilobyte or less, logarithmic in n. Extensions handle vectors - ideal for gradient norms or dataset stats. In FL, clients prove update deltas respect data bounds, and aggregators tally without trusting anyone.
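The bit-decomposition idea behind a proof for [0, 2^n) can be sketched in plain arithmetic. This is not a zero-knowledge proof, only the witness and the two constraints such a proof would enforce over committed values. The function names are hypothetical.

```python
def bit_decompose(value: int, n_bits: int) -> list[int]:
    """Little-endian bits of value; raises if value is outside [0, 2^n_bits)."""
    if not 0 <= value < (1 << n_bits):
        raise ValueError("value outside [0, 2^n): no valid witness exists")
    return [(value >> i) & 1 for i in range(n_bits)]


def constraints_hold(value: int, bits: list[int]) -> bool:
    """The two checks a bit-decomposition range proof enforces."""
    # Every witness entry must be 0 or 1, written as b * (b - 1) == 0.
    all_binary = all(b * (b - 1) == 0 for b in bits)
    # The bits must recompose to the value: <bits, (1, 2, 4, ...)> == value.
    recomposes = sum(b << i for i, b in enumerate(bits)) == value
    return all_binary and recomposes


if __name__ == "__main__":
    bits = bit_decompose(37, 8)
    print(bits, constraints_hold(37, bits))
```

An out-of-range value has no n-bit witness that satisfies both constraints, which is exactly why a cheating prover gets stuck.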

Privacy-preserving federated AI gets teeth here. A range proof leaks nothing beyond the bound itself, so verification hands attackers no new surface for divining distributions, and the bound caps how far any single poisoned update can drag the aggregate. Couple with homomorphic encryption for hybrid beasts, and you've got tamper-resistant pipelines. 2027 forecast? Every serious FL deploy mandates ZKRPs, or gets left bleeding market share.

Implementation war stories prove the point. Slap ZKRPs on gradient updates: clients commit to their update vectors and prove the L2 norms stay under sane thresholds, a signal that no outliers or injections slipped in. Aggregators batch-verify, slashing latency. Mysten Labs nails it - ZKRPs for confidential balances scale seamlessly; FL devs hijack that for data slices. No more 'trust me bro' rounds.
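One way to turn "the L2 norm stays under a threshold" into a range statement is to quantize the update to integers and show that the slack between the squared bound and the squared norm is non-negative. The sketch below covers only that arithmetic. The fixed-point scale `SCALE`, the 64-bit width, and the function names are assumptions, and a real protocol would also have to prove that the slack was derived from the committed vector.

```python
SCALE = 1 << 16  # hypothetical fixed-point scale: 16 fractional bits


def quantize(update: list[float]) -> list[int]:
    """Map float entries to integers, since range proofs work over integers."""
    return [round(g * SCALE) for g in update]


def norm_slack(update: list[float], max_norm: float) -> int:
    """Return B^2 - ||q||^2 in fixed point; negative means the bound is violated."""
    q = quantize(update)
    bound_sq = round(max_norm * SCALE) ** 2
    return bound_sq - sum(v * v for v in q)


def provable(update: list[float], max_norm: float, n_bits: int = 64) -> bool:
    """True when the slack lies in [0, 2^n_bits), so a range proof for it can exist."""
    return 0 <= norm_slack(update, max_norm) < (1 << n_bits)


if __name__ == "__main__":
    print(provable([0.3, 0.4], max_norm=1.0))  # honest, small update
    print(provable([3.0, 4.0], max_norm=1.0))  # oversized update
```

Rounding during quantization can push an update sitting exactly on the bound to either side, so a deployment would pick the threshold with a little headroom.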

Battle-Tested Protocols Crushing the Field

Stack up the contenders for ZK range proofs in federated learning. Bulletproofs pioneered inner-product magic with no trusted setup, but PlonK's universal setup and Groth16's tiny proofs and fast verification duke it out now. VPFL from ScienceDirect? Public verifiability for entire training traces. ZKP-FedEval verifies evals sans leaks. Trusted aggregation via ZKFL? IEEE's got the blueprint. These aren't lab toys; they're deploy-ready hammers for federated data provenance nails.

Comparison of ZK Protocols in Federated Learning

| Protocol | Proof Size (KB) | Verify Time (ms) | Speedup vs Baseline | Use Case |
|---|---|---|---|---|
| ProxyZKP | 3 | 30 | 4x | integrity |
| ZK-HybridFL | 5 | 50 | 6x | validation |
| ByzSFL | 0.8 | 8 | 100x | robustness |
| ZKPROV | 4 | 40 | 12x | LLM verify |

Numbers don't lie. ByzSFL clocks 100x faster aggregation; ProxyZKP swaps full training for polynomial proxies that battery-starved edge devices can actually prove. In adversarial hellscapes - Byzantine clients spewing garbage - these hold the line. Verifiers sleep easy, models stay pure.

But hold up - proof generation still stings on mobile, and so do gas fees wherever verification lands on-chain. 2027 fixes incoming: hardware accelerators like zk-SNARK ASICs, recursive proofs folding mega-batches. Couple with FHE for encrypted ranges, and zero-knowledge FL turns bulletproof. Inference attacks? Vaporized. Model poisoning? Dead on arrival.

Real-World Onslaught: Healthcare to Finance

Killer Deployment Wins

- Hospitals crush patient age/data range proofs for razor-sharp epidemic models – zero PII exposed!

- Banks slam transaction volume attestations sans any PII leaks – ironclad compliance!

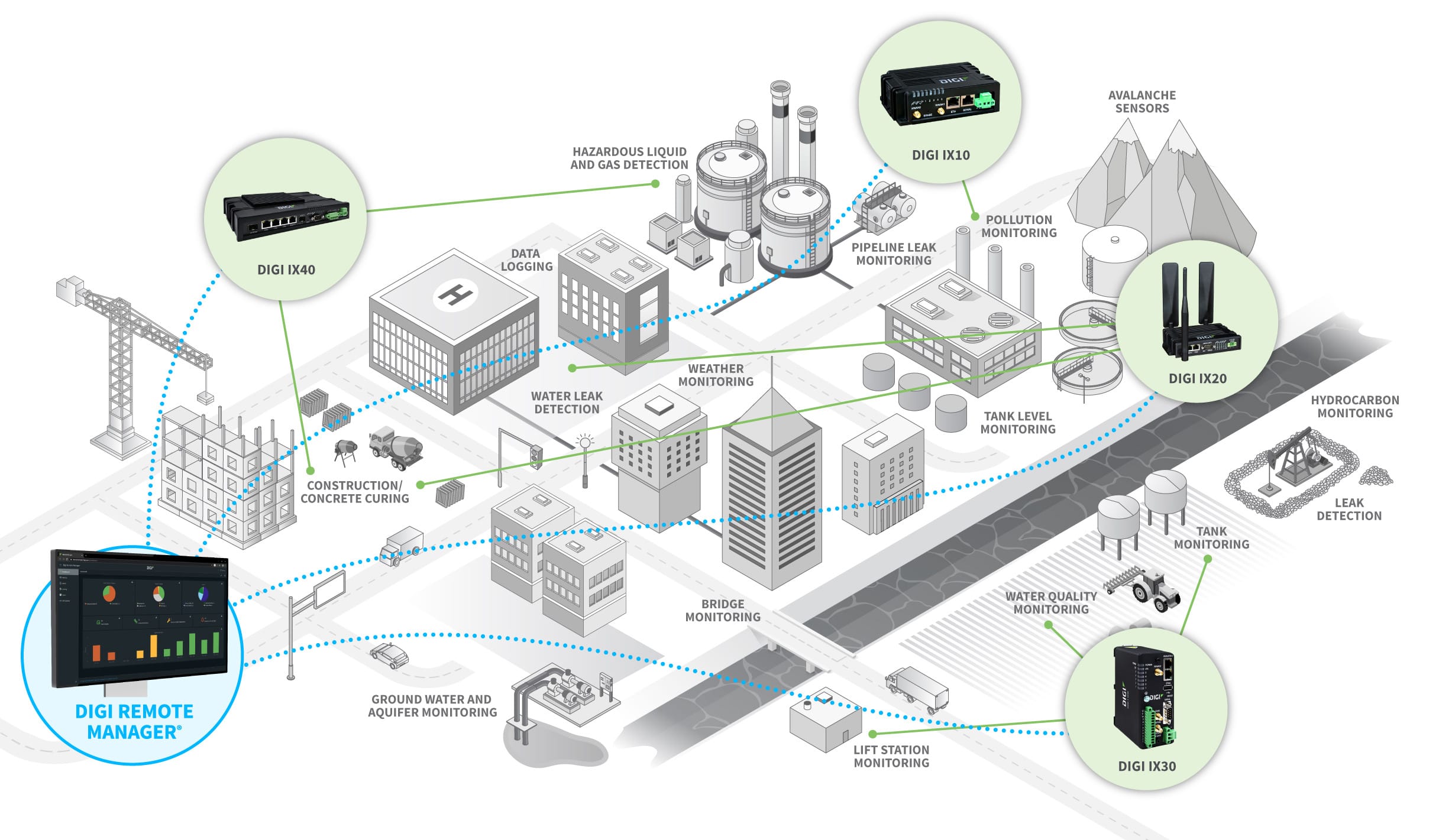

- IoT swarms validate sensor bound proofs in smart cities – unbreakable data integrity!

Hospitals lead the charge. Prove cohort ages cluster 18-80 and symptom scores stay under anomaly caps - ZK signs off, federated diagnostics sharpen overnight. No HIPAA nightmares. Finance flips next: transaction volumes in [1k,1M), fraud signals bounded, cross-bank models sniff scams globally. IoT? Sensor fleets prove readings sane pre-aggregate. Privacy-preserving federated AI isn't fluffy; it's the moat crushing centralized giants.
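Range proofs natively cover [0, 2^n), so an arbitrary interval like ages 18-80 is usually handled by shifting: prove that both `x - lo` and `hi - x` are non-negative n-bit values. The sketch below shows that reduction in plain integers. The helper names are hypothetical, the age range is read as inclusive, and the half-open [1k,1M) is mapped to `hi = 999_999`; both readings are assumptions.

```python
def shifted_witnesses(x: int, lo: int, hi: int) -> tuple[int, int]:
    """Two values that must each lie in [0, 2^n) for lo <= x <= hi to hold."""
    return x - lo, hi - x


def bits_needed(lo: int, hi: int) -> int:
    """Smallest n with hi - lo < 2^n."""
    return max(1, (hi - lo).bit_length())


def in_range_via_shift(x: int, lo: int, hi: int) -> bool:
    """Check lo <= x <= hi using only two [0, 2^n) range checks."""
    n = bits_needed(lo, hi)
    low_gap, high_gap = shifted_witnesses(x, lo, hi)
    return 0 <= low_gap < (1 << n) and 0 <= high_gap < (1 << n)


if __name__ == "__main__":
    print(in_range_via_shift(45, 18, 80))               # age inside the cohort
    print(in_range_via_shift(17, 18, 80))               # age below the cohort
    print(in_range_via_shift(250_000, 1_000, 999_999))  # volume inside [1k, 1M)
```

Both checks are needed. Inside a proof system the subtraction happens modulo the group order, so a value below `lo` wraps around to a huge number and fails the first check, while a value above `hi` fails the second.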

Scalability's the final boss. Thousand-node FL? Bulletproofs-style ZKRPs from many parties batch via an MPC protocol, and the combined proof grows only logarithmically in the number of values. ArXiv's ZK-HybridFL DAGs route validations peer-to-peer, no server choke. Convergence rockets, accuracy holds under adversarial fire. Enterprises ditching AWS silos for this? Bet your stack they will.
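The logarithmic claim is easy to put numbers on for Bulletproofs specifically. An aggregated range proof over m values of n bits each takes 2·log2(n·m) + 9 group and field elements, per the Bulletproofs paper. The sketch below assumes 32-byte elements (`ELEMENT_BYTES`, typical for a 256-bit curve) and a power-of-two `n * m`; the function name is hypothetical, and other proof systems have different size profiles.

```python
ELEMENT_BYTES = 32  # assumption: 32-byte compressed points and scalars


def bulletproof_size_bytes(n_bits: int, m_values: int = 1) -> int:
    """Size of one aggregated Bulletproofs range proof over m values of n bits."""
    total = n_bits * m_values
    if total < 1 or total & (total - 1):
        raise ValueError("n_bits * m_values must be a power of two")
    log2_total = total.bit_length() - 1  # exact log2 for a power of two
    elements = 2 * log2_total + 9
    return elements * ELEMENT_BYTES


if __name__ == "__main__":
    for m in (1, 16, 1024):
        print(m, "values ->", bulletproof_size_bytes(64, m), "bytes")
```

Under these assumptions a single 64-bit proof is 672 bytes, 16 aggregated values take 928 bytes, and 1,024 values take 1,312 bytes: the proof roughly doubles while the batch grows a thousandfold.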

ZKModelProofs.com already arms devs with these tools - generate attestations proving training data origins via ZK, license-compliant, zero leaks. By 2027, federated learning without range-proven provenance is malpractice. Jump in, or watch competitors eat your lunch. The proof train's left the station; strap in for the provenance revolution.
