In the evolving landscape of artificial intelligence, where models are trained on vast datasets and deployed at scale, trust becomes the ultimate currency. Yet, verifying the integrity of AI compute - especially during model training - remains elusive. Enter Brevis ZK proofs, a breakthrough in verifiable AI compute that promises to validate training processes without compromising privacy or efficiency. As someone who values enduring technological moats, I see Brevis positioning itself as a foundational layer for trustworthy machine learning.

Brevis isn't just another zero-knowledge infrastructure play; it's architecting an infinite computing layer tailored for the demands of AI and blockchain convergence. Their recent launch of ProverNet, a decentralized marketplace for ZK proof generation now live on mainnet beta, marks a pivotal shift. This platform matches complex workloads, such as ZK proofs of model training, with optimized provers via an on-chain auction mechanism. Drawing on more than 250 million proofs generated for partners including Uniswap and BNB Chain, Brevis removes the need for applications to run their own proving infrastructure.

ProverNet: Decentralizing Proof Generation for High-Throughput AI Workloads

At its core, ProverNet employs the Truthful Online Double Auction (TODA) for continuous proof auctions, with USDC settlements in this beta phase. Provers register nodes, following the new GPU and CPU setup docs, and compete for tasks. For AI developers, this means scalable access to high-throughput ZK verification, crucial when validating model training that involves billions of parameters and iterations. Imagine off-chain training computations verified on-chain without redundant blockchain execution - that's the scalability Brevis unlocks.
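The source names TODA but does not spell out its rules, so the sketch below does not attempt to reproduce it. Instead it uses McAfee's classic trade-reduction double auction, a well-known truthful single-round mechanism, to illustrate how bids from proof requesters and asks from provers could be matched. The `Order` type, the party names, and the integer prices (think USDC cents) are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Order:
    party: str  # hypothetical identifier for a requester or a prover
    price: int  # maximum fee offered (bid) or minimum fee accepted (ask)


def mcafee_double_auction(bids, asks):
    """Match one proof job per order with McAfee's trade-reduction rule.

    Illustrative stand-in only; this is NOT the TODA mechanism itself.
    Returns (pairs, buyer_price, seller_price), where pairs is a list of
    (requester, prover) tuples.
    """
    bids = sorted(bids, key=lambda o: o.price, reverse=True)  # highest bid first
    asks = sorted(asks, key=lambda o: o.price)                # lowest ask first

    # k = number of efficient trades: the largest k with bids[k-1] >= asks[k-1].
    k = 0
    while k < min(len(bids), len(asks)) and bids[k].price >= asks[k].price:
        k += 1
    if k == 0:
        return [], None, None

    # If a (k+1)-th pair exists, try the midpoint of its prices as one clearing price.
    if k < len(bids) and k < len(asks):
        p = (bids[k].price + asks[k].price) / 2
        if asks[k - 1].price <= p <= bids[k - 1].price:
            pairs = [(bids[i].party, asks[i].party) for i in range(k)]
            return pairs, p, p

    # Otherwise drop the k-th pair: remaining requesters pay the k-th bid,
    # remaining provers receive the k-th ask.
    pairs = [(bids[i].party, asks[i].party) for i in range(k - 1)]
    return pairs, bids[k - 1].price, asks[k - 1].price
```

In this design no trading party's own order sets the price it pays or receives, which is what makes truthful bidding a sensible strategy; the cost is that the least profitable trade is sometimes dropped.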

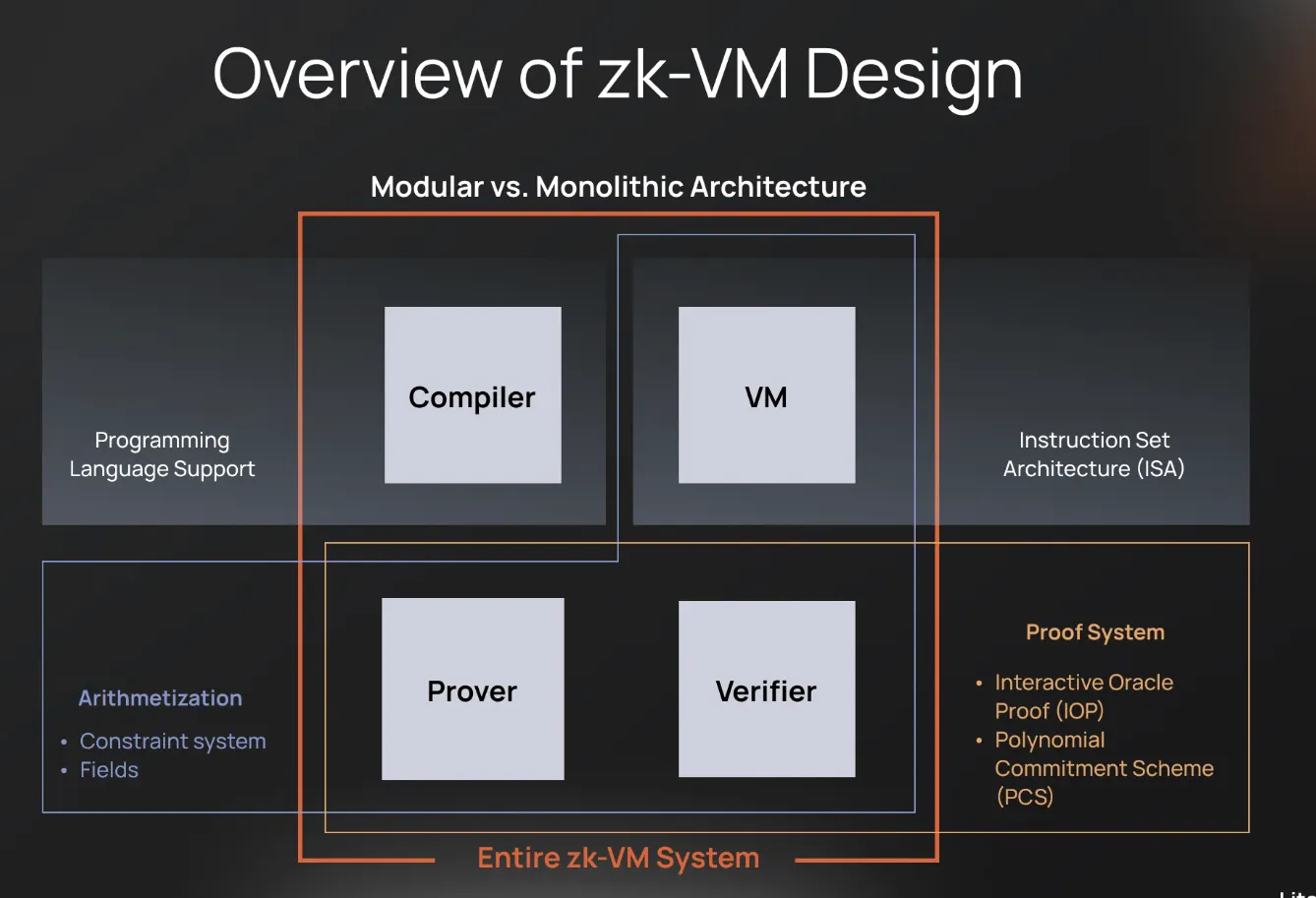

Brevis CEO Michael's foresight resonates deeply: in a decade, 99% of blockchain app computations will occur off-chain, attested by ZK proofs. This isn't hyperbole; it's a logical endpoint for systems strained by on-chain limits. Brevis bridges this gap, extending from Ethereum block proofs via ETHProofs.org to AI-specific validations. Their Pico zkVM, in particular, shines here, enabling off-chain execution of deep learning inferences - and by extension, training validations - while returning succinct proofs to the chain.

ZK Proofs as the Keystone for Model Training Integrity

Traditional AI training lacks transparency; black-box models invite skepticism about data usage, parameter tweaks, or even adversarial manipulations. Brevis ZK proofs address this head-on. By proving that a computation ran correctly without revealing inputs, models, or intermediate states, Brevis supports compliance and provenance. For instance, a training run can be attested for correct dataset processing and optimization steps, all while preserving trade secrets.

Brevis ProverNet Advantages

- Scalability: Delivers infinite off-chain compute via ZK proofs, efficiently handling complex AI model training validations for long-term blockchain growth.

- Privacy Preservation: Validates deep learning inference with Pico zkVM proofs without exposing model parameters, safeguarding AI integrity indefinitely.

- Cost Efficiency: On-chain TODA auctions match workloads to optimized provers, minimizing expenses by avoiding proprietary infrastructure over time.

- Decentralized Prover Network: Mainnet beta marketplace connects apps to global GPU/CPU provers, ensuring resilient, competitive proof generation.

- Seamless zkVM Integration: Pico zkVM enables straightforward verifiable computing for AI training, bridging off-chain power with on-chain trust.

This approach transforms model training validation into a privacy-preserving audit trail. Developers submit workloads to ProverNet, where specialized provers generate proofs at competitive rates. The blockchain then verifies these in constant time, sidestepping the computational bloat of full re-execution. In my long-term view, this moat - combining zkVM generality with a robust marketplace - positions Brevis as indispensable for enterprises scaling AI under regulatory scrutiny.

From Inference to Training: Expanding the Verifiable Compute Frontier

Brevis has already demonstrated AI inference proofs, using zero-knowledge techniques to validate deep learning outputs without exposing parameters. Extending this to training validation follows naturally. Training involves sequential matrix multiplications, gradient descent steps, and loss computations - all expressible as zkVM programs. Pico zkVM's efficiency allows these to run off-chain, with proofs confirming fidelity to the specified hyperparameters and data flows. Future upgrades, including the BREV token for settlements, will further incentivize prover participation, solidifying the ecosystem.
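To make "expressible in a zkVM" a little more concrete, here is a minimal sketch of one gradient descent step for a one-feature linear model, written in integer fixed-point arithmetic. It assumes that provable programs favor deterministic integer math over floating point, and it uses SHA-256 commitments to the weights before and after the step as the kind of public statement a proof could attest to. This is plain Python, not Pico's API; `SCALE`, `fx_mul`, `commit`, and `sgd_step` are hypothetical names chosen for illustration.

```python
import hashlib

SCALE = 1 << 16  # fixed-point scale: a value v is stored as round(v * SCALE)


def to_fixed(x: float) -> int:
    return round(x * SCALE)


def fx_mul(a: int, b: int) -> int:
    # Multiply two fixed-point numbers and rescale (floor division).
    return (a * b) // SCALE


def commit(values) -> str:
    # SHA-256 over the 8-byte signed big-endian encoding of each value.
    h = hashlib.sha256()
    for v in values:
        h.update(v.to_bytes(8, "big", signed=True))
    return h.hexdigest()


def sgd_step(w: int, b: int, batch, lr: int):
    """One gradient step on mean squared error for y ~= w*x + b, in fixed point."""
    grad_w = 0
    grad_b = 0
    for x, y in batch:
        err = fx_mul(w, x) + b - y
        grad_w += fx_mul(err, x)
        grad_b += err
    n = len(batch)
    # d/dw of mean((w*x + b - y)^2) is 2 * mean(err * x); likewise 2 * mean(err) for b.
    grad_w = 2 * grad_w // n
    grad_b = 2 * grad_b // n
    return w - fx_mul(lr, grad_w), b - fx_mul(lr, grad_b)


# Usage: fit y = 2x + 1 and record commitments around the training run.
batch = [(to_fixed(x), to_fixed(2 * x + 1)) for x in (0, 1, 2, 3)]
w, b = 0, 0
commitment_before = commit([w, b])
for _ in range(2000):
    w, b = sgd_step(w, b, batch, to_fixed(0.05))
commitment_after = commit([w, b])
```

Because every operation is integer arithmetic, two honest runs produce bit-identical weights, so the pair of commitments pins down exactly which transition a proof would cover.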

Consider the ripple effects: decentralized finance protocols training fraud-detection models, DAOs validating contributor-submitted AI agents, or enterprises proving licensing compliance in federated learning. Brevis doesn't just verify; it enables novel architectures where AI compute scales infinitely, bounded only by proof economics. As a value-oriented thinker, I appreciate how ProverNet's auction dynamics foster sustainable growth, mirroring the competitive edges that endure in equities.

Yet, realizing this vision demands overcoming steep hurdles: the astronomical compute demands of training proofs, the fragility of privacy in shared prover networks, and the nascent state of zkVMs for non-trivial AI operations. Brevis confronts these with surgical precision. Pico zkVM's Rust-based circuits compile training loops into efficient arithmetic representations, slashing proof times from days to hours. ProverNet's decentralized auction mitigates centralization risks by distributing workloads across global nodes, while TODA is designed for economic truthfulness, so that participants do best by bidding their true costs and valuations.

This decentralized proving marketplace isn't merely a service; it's an economic flywheel. As demand for ZK proofs of model training surges, prover incentives align, driving hardware optimizations and circuit innovations. We've seen this pattern in equity markets: platforms with network effects compound value over time. Brevis, with a track record of integrations ranging from PancakeSwap to MetaMask, exhibits that rare moat of proven scale.

Real-World Applications: From DeFi to Enterprise AI

Picture a DeFi protocol training a risk model on proprietary transaction data. Without Brevis, on-chain verification exposes strategies; with it, a succinct proof attests to correct gradient updates and convergence metrics. Or consider pharmaceutical firms validating federated learning across hospitals - verifiable AI compute proves model fidelity without data leakage, complying with GDPR-like regimes. Even open-source collectives benefit: DAOs can crowdfund training runs, verified transparently to reward honest contributors.

Brevis's Ethereum block proof demos via ETHProofs.org preview broader horizons. Migrating production workloads here will bootstrap high-throughput ZK verification for AI, where proofs under a megabyte settle in seconds. Pair this with dataset provenance tools, and you forge unbreakable chains of trust: from data origins to trained weights. In my two decades charting long-term trends, such composability echoes the compounding returns of wide-moat compounders.
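The source does not say which dataset provenance tools it has in mind. One common building block is a Merkle root that commits to every record in a dataset, so that a later proof can reference the root and individual records can be shown to belong to it. The sketch below is a minimal version under stated assumptions: SHA-256, the 0x00/0x01 leaf and node prefixes used in RFC 6962, and the simplification of duplicating the last node on odd-sized levels (production designs usually avoid that shortcut). All function names are hypothetical.

```python
import hashlib


def _h(data: bytes) -> bytes:
    return hashlib.sha256(data).digest()


def merkle_levels(records):
    """Build all tree levels, leaves first. Odd-sized levels duplicate their last node."""
    if not records:
        raise ValueError("need at least one record")
    level = [_h(b"\x00" + r) for r in records]  # 0x00 prefix marks a leaf
    levels = [level]
    while len(level) > 1:
        if len(level) % 2:
            level = level + [level[-1]]
        level = [_h(b"\x01" + level[i] + level[i + 1])  # 0x01 marks an inner node
                 for i in range(0, len(level), 2)]
        levels.append(level)
    return levels


def merkle_root(records) -> bytes:
    return merkle_levels(records)[-1][0]


def inclusion_proof(records, index):
    """Sibling hashes from leaf to root, each tagged with whether the sibling sits on the left."""
    proof = []
    for level in merkle_levels(records)[:-1]:
        if len(level) % 2:
            level = level + [level[-1]]
        sibling = index ^ 1
        proof.append((level[sibling], sibling < index))
        index //= 2
    return proof


def verify_inclusion(record: bytes, proof, root: bytes) -> bool:
    node = _h(b"\x00" + record)
    for sibling, sibling_is_left in proof:
        pair = sibling + node if sibling_is_left else node + sibling
        node = _h(b"\x01" + pair)
    return node == root
```

A training attestation could then carry the 32-byte root as a public input, tying the proven run to one specific dataset without publishing the records themselves.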

Full training validation extends verifiable compute beyond AI inference proofs. Brevis's demonstrations already validate deep learning outputs off-chain, preserving parameter secrecy. Training amplifies this: express backpropagation in a zkVM, prove hyperparameter adherence, and audit for poisoning attacks. ProverNet handles the volume, auctioning GPU-heavy tasks to specialists. Future BREV token economics will refine this, rewarding high-uptime provers and cutting costs through competition.

Brevis isn't building a tool; it's engineering the infrastructure for AI's trustless era, where verification costs plummet as adoption soars.

For developers eyeing integration, Brevis offers seamless SDKs bridging Rust circuits to EVM chains. No need for bespoke provers; submit jobs, await proofs, verify on-chain. This abstraction layer empowers experimentation - train on zkVM-emulated TPUs, validate cross-chain, iterate endlessly. As regulatory pressures mount on AI opacity, from the EU AI Act to U.S. executive orders, Brevis equips builders with compliance-by-design.

A Long-Term Bet on Infinite Compute

Reflecting on macro cycles, blockchain's scalability inflection mirrors internet infrastructure booms of the early 2000s. Brevis, as the verifiable compute linchpin, stands to capture outsized value. Their $7.5 million seed underscores early conviction, but ProverNet's mainnet beta - live with USDC auctions - signals execution velocity. Over 250 million proofs aren't promises; they're battle-tested reliability.

In a world where AI models balloon to trillions of parameters, on-chain naivety crumbles. Brevis ZK proofs erect the bulwarks: privacy intact, compute infinite, trust absolute. For value seekers, this isn't hype; it's the patient accumulation of technological primacy. As off-chain computation approaches that predicted 99% share, Brevis will be the quiet force verifying it all, rewarding those who bet early on enduring foundations.
