Verifiable Credentials via ZK Proofs for Open-Source Dataset Usage in AI

In the rapidly evolving landscape of artificial intelligence, open-source datasets fuel innovation but introduce serious risks around provenance and compliance. Without verifiable proof of data origins, models can inherit biases, licensing violations, or tainted sources, undermining trust at scale. Enter verifiable credentials powered by zero-knowledge proofs (ZKPs): a cryptographic approach that lets AI developers attest to dataset integrity without exposing sensitive details. The combination addresses core pain points in applying ZK proofs to open-source datasets, enabling dataset usage tracking while preserving privacy.

Recent advancements underscore this shift. ZKPROV, detailed in arXiv paper 2506.20915, stands out by focusing on dataset provenance for large language models rather than on computational fidelity alone. It generates proofs that bind training data, model weights, and outputs, allowing verifiers to confirm claims such as “trained exclusively on licensed open-source sets” without the underlying data being disclosed. Analyses from a16z crypto trace ZKPs' roots to off-chain scaling for blockchains, a use case that now extends to machine learning verification.

ZKPROV Redefines Verifiable Machine Learning

ZKPROV carves a niche in verifiable ML by prioritizing data lineage over execution traces. Traditional approaches verify whether computations ran correctly; ZKPROV proves what fed those computations. Researchers from Harvard note that it lets users audit LLM training without revealing proprietary datasets, which matters as models ingest terabytes from diverse open sources. In practice, developers can attach succinct proofs to model releases, easing enterprise adoption in settings where compliance outweighs opacity.
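The phrase “binding training data, model weights, and outputs” can be made concrete. The Python sketch below shows only the public-commitment layer that such a proof would refer to. It is not ZKPROV's construction. The helper name `commit_training_run`, the field names, and the use of plain SHA-256 digests are illustrative assumptions. A real system would add random salts so the commitments are hiding, then prove statements about the committed values in zero knowledge.

```python
import hashlib
import json


def sha256_hex(data: bytes) -> str:
    """Hex-encoded SHA-256 digest."""
    return hashlib.sha256(data).hexdigest()


def commit_training_run(shards: list[bytes], weights: bytes, output: bytes) -> dict[str, str]:
    """Hypothetical helper: bind dataset shards, model weights, and one output.

    These digests are not hiding; a real commitment would mix in a random salt.
    """
    # Each shard digest is exactly 64 hex chars, so concatenation is unambiguous.
    shard_digests = "".join(sha256_hex(s) for s in shards)
    record = {
        "dataset": sha256_hex(shard_digests.encode()),
        "weights": sha256_hex(weights),
        "output": sha256_hex(output),
    }
    # Canonical JSON (sorted keys) gives a stable digest over all three parts.
    record["binding"] = sha256_hex(json.dumps(record, sort_keys=True).encode())
    return record


if __name__ == "__main__":
    run = commit_training_run([b"shard-a", b"shard-b"], b"weights-v1", b"model output")
    print(run["binding"])
```

Changing any shard, the weights, or the output changes `binding`. In this sketch, that is the value a provenance proof would be checked against.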

Consider the implications: a developer who claims a model was trained on Common Crawl derivatives can back that claim with a ZKPROV proof instead of a manual audit. This cryptographic assurance answers rising scrutiny, as seen in Medium analyses of AI-generated content provenance. Splunk's insights on digital fingerprints point the same way, positioning ZKPs as privacy shields against deepfake-era doubts.

Verifiable Credentials Meet Zero-Knowledge Open Data

Core Advantages of VCs + ZKPs

- Licensing Compliance: Verify adherence to open-source licenses through proofs, without exposing full datasets, as supported by Verida.
- Scalable Audits: Enable efficient, non-interactive verification of training processes at scale using SNARKs, as in zkVerify.
- Bias Mitigation: Confirm dataset diversity and provenance cryptographically without disclosure, per ZKlaims.
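Each advantage above ultimately rests on a predicate that a ZK circuit would evaluate over private inputs. The sketch below runs such predicates in the clear so the logic is visible. Several of its choices are hypothetical and are not rules taken from Verida, zkVerify, or ZKlaims:

- the `SampleMeta` record;
- the allowed-license set, written as SPDX identifiers;
- the distinct-source threshold, used as a crude diversity proxy.

```python
from dataclasses import dataclass

# Hypothetical allow-list, written as SPDX license identifiers.
ALLOWED_LICENSES = frozenset({"CC0-1.0", "CC-BY-4.0", "CC-BY-SA-4.0", "MIT", "Apache-2.0"})


@dataclass(frozen=True)
class SampleMeta:
    """Hypothetical per-sample metadata a curator's credential might attest to."""
    source: str
    license: str


def license_policy_holds(samples: list[SampleMeta], allowed: frozenset[str] = ALLOWED_LICENSES) -> bool:
    """Licensing compliance: every sample carries an allowed license."""
    return all(s.license in allowed for s in samples)


def diversity_holds(samples: list[SampleMeta], min_sources: int) -> bool:
    """Crude diversity proxy: at least `min_sources` distinct origins."""
    return len({s.source for s in samples}) >= min_sources


if __name__ == "__main__":
    batch = [SampleMeta("repo-a", "MIT"), SampleMeta("repo-b", "CC-BY-SA-4.0")]
    print(license_policy_holds(batch), diversity_holds(batch, 2))
```

In a ZK deployment, the sample list would be a private witness. The verifier would learn only that the predicate returned true.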

Verifiable credentials (VCs) act as tamper-evident digital attestations that can selectively disclose attributes such as “dataset licensed under CC-BY-SA.” Layering ZKPs on top goes further: holders can prove statements such as “all samples come from verified open-source repositories” without revealing or linking to the full credential. As of February 2026, tools such as Zakapi compile SQL policies into ZK circuits for age or KYC queries, an approach that could be adapted to dataset checks.
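Selective disclosure can be sketched with salted hash digests, the idea behind formats such as SD-JWT. The issuer signs only the digests, and the holder later reveals a chosen claim plus its salt. This is an illustrative sketch, not Zakapi's or any VC library's API. The HMAC “signature” and the shared `ISSUER_KEY` are hypothetical stand-ins for the issuer's asymmetric signature. Note that this scheme reveals the disclosed value itself; ZKPs go further by proving predicates about values without revealing them.

```python
import hashlib
import hmac
import json
import secrets

# Hypothetical shared key standing in for the issuer's signing key.
# Real VCs use asymmetric signatures so verifiers never hold a secret.
ISSUER_KEY = b"demo-issuer-key"


def _digest(salt: str, name: str, value: str) -> str:
    """Salted digest of one claim; the salt stops verifiers guessing hidden values."""
    return hashlib.sha256(json.dumps([salt, name, value]).encode()).hexdigest()


def _sign(digests: list[str]) -> str:
    """HMAC stand-in for an issuer signature over the digest list."""
    return hmac.new(ISSUER_KEY, json.dumps(digests).encode(), hashlib.sha256).hexdigest()


def issue(claims: dict[str, str]) -> tuple[dict, dict[str, tuple[str, str]]]:
    """Issuer: sign only the sorted digests; give the holder salts and values."""
    disclosures = {name: (secrets.token_hex(16), value) for name, value in claims.items()}
    digests = sorted(_digest(salt, name, value) for name, (salt, value) in disclosures.items())
    return {"digests": digests, "signature": _sign(digests)}, disclosures


def present(disclosures: dict[str, tuple[str, str]], reveal: list[str]) -> dict[str, tuple[str, str]]:
    """Holder: hand over salt/value pairs for the chosen claims only."""
    return {name: disclosures[name] for name in reveal}


def verify(credential: dict, revealed: dict[str, tuple[str, str]]) -> dict[str, str]:
    """Verifier: check the issuer signature, then each revealed claim's digest."""
    if not hmac.compare_digest(_sign(credential["digests"]), credential["signature"]):
        raise ValueError("invalid issuer signature")
    known = set(credential["digests"])
    accepted = {}
    for name, (salt, value) in revealed.items():
        if _digest(salt, name, value) not in known:
            raise ValueError(f"claim {name!r} does not match the credential")
        accepted[name] = value
    return accepted


if __name__ == "__main__":
    cred, disc = issue({"license": "CC-BY-SA-4.0", "curator": "lab-x"})
    print(verify(cred, present(disc, ["license"])))
```

The verifier learns the license claim and nothing about `curator` beyond an opaque digest. Sorting the digests also hides the order in which the claims were issued.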

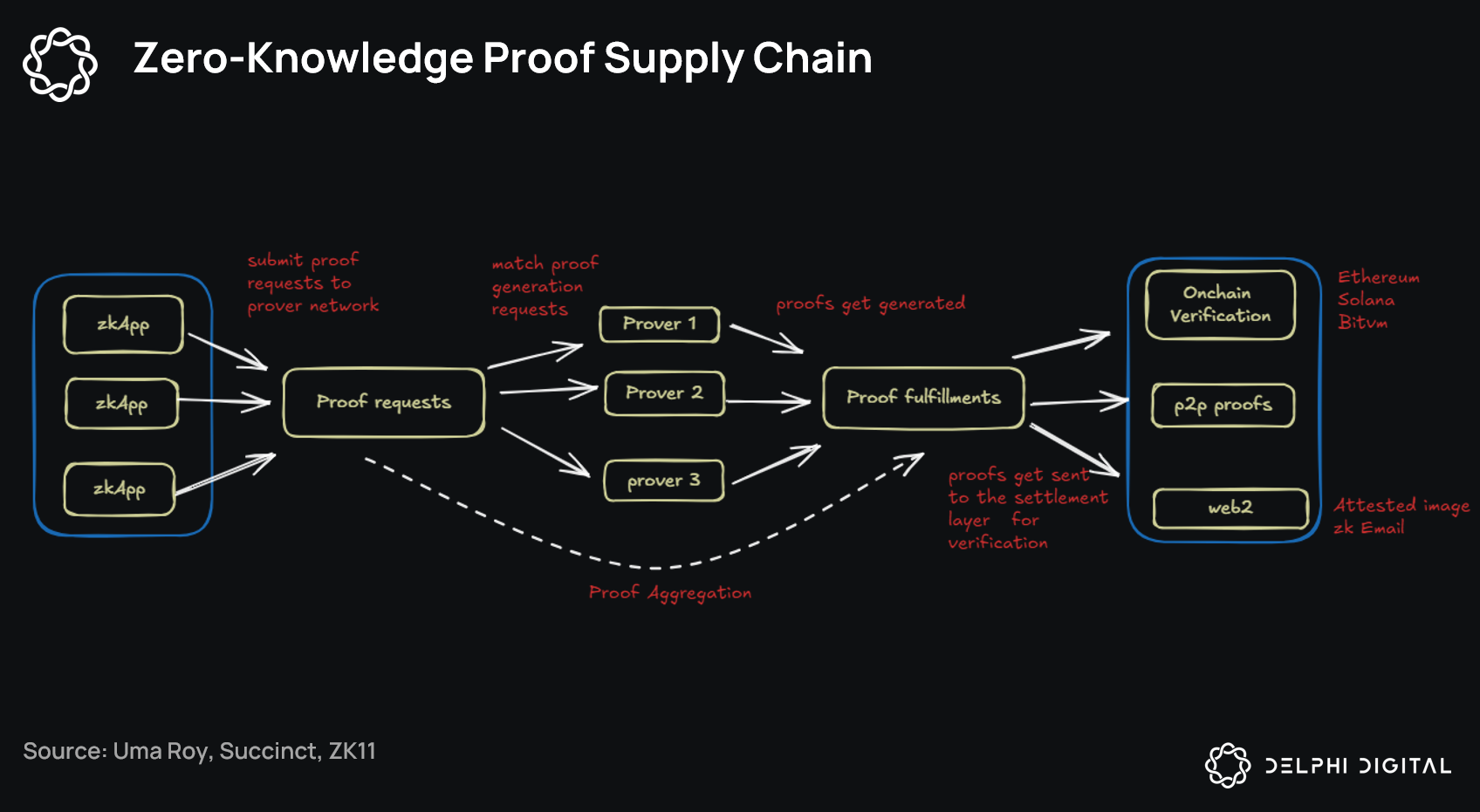

ZKlaims goes further with SNARKs for non-interactive proofs, which suit decentralized AI ecosystems. zkVerify targets ML directly, validating private training and inference. Verida integrates these techniques for regulatory-compliant data proofs, supporting KYC and licensing in credential networks. Together they form a robust stack for verifiable credentials in AI, making zero-knowledge open data an operational reality.
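SNARK circuits are too involved for a short example. The core idea of a non-interactive proof can still be shown with the Fiat-Shamir transform applied to a Schnorr proof of knowledge, where the verifier's random challenge is replaced by a hash of the transcript. This is a different and much simpler technique than the SNARKs ZKlaims uses. The toy group parameters offer no security. Binding a `context` string, such as a dataset-commitment digest, into the challenge is an illustrative assumption.

```python
import hashlib
import secrets

# Toy parameters: P = 2*Q + 1 with P and Q prime, and G = 4 generates the
# subgroup of order Q. Far too small to be secure; illustration only.
P, Q, G = 2039, 1019, 4


def _challenge(*parts: object) -> int:
    """Fiat-Shamir: derive the challenge by hashing the public transcript."""
    data = "|".join(str(p) for p in parts).encode()
    return int.from_bytes(hashlib.sha256(data).digest(), "big") % Q


def prove(x: int, context: str) -> tuple[int, tuple[int, int]]:
    """Prove knowledge of x with y = G^x mod P, bound to a context string."""
    y = pow(G, x, P)
    r = 1 + secrets.randbelow(Q - 1)   # fresh nonce in [1, Q-1]
    t = pow(G, r, P)                   # prover's commitment
    c = _challenge(G, y, t, context)   # hash replaces the verifier's coin flips
    s = (r + c * x) % Q                # response
    return y, (t, s)


def verify(y: int, proof: tuple[int, int], context: str) -> bool:
    """Accept iff G^s == t * y^c (mod P) for the recomputed challenge c."""
    t, s = proof
    c = _challenge(G, y, t, context)
    return pow(G, s, P) == (t * pow(y, c, P)) % P


if __name__ == "__main__":
    y, proof = prove(123, "dataset-commitment:abc123")
    print(verify(y, proof, "dataset-commitment:abc123"))
```

Anyone holding `y`, the proof, and the context can check it without further interaction with the prover. That property makes such proofs easy to attach to credentials or model releases.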

Practical Pathways for Dataset Usage Tracking

Implementing this stack starts with credential issuance: dataset curators mint VCs attesting to origins, hashed commitments, and usage terms. AI trainers aggregate these into Merkle trees and then prove statements about them inside ZK circuits, using libraries such as those Google open-sourced for age assurance. Orochi Network's work on ZKP-based ML verification shows that computations can stay hidden yet verifiable, reducing breach risks in collaborative training.
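The aggregation step can be sketched as a Merkle tree over credential digests. An inclusion proof then shows that one credential belongs to the committed set. Inside a ZK circuit the same path check would run over private inputs; here it runs in the clear and reveals sibling hashes. The following are assumptions for illustration:

- the item encoding;
- the 0x00/0x01 domain-separation prefixes;
- the duplicate-last-node padding rule.

```python
import hashlib


def _h(data: bytes) -> bytes:
    return hashlib.sha256(data).digest()


def leaf_hash(item: bytes) -> bytes:
    return _h(b"\x00" + item)          # 0x00 prefix marks leaves


def node_hash(left: bytes, right: bytes) -> bytes:
    return _h(b"\x01" + left + right)  # 0x01 prefix marks internal nodes


def build_levels(items: list[bytes]) -> list[list[bytes]]:
    """All tree levels, leaves first; an unpaired last node is paired with itself."""
    if not items:
        raise ValueError("cannot build a Merkle tree over zero items")
    level = [leaf_hash(x) for x in items]
    levels = [level]
    while len(level) > 1:
        padded = level + [level[-1]] if len(level) % 2 else level
        level = [node_hash(padded[i], padded[i + 1]) for i in range(0, len(padded), 2)]
        levels.append(level)
    return levels


def merkle_root(items: list[bytes]) -> bytes:
    return build_levels(items)[-1][0]


def inclusion_proof(items: list[bytes], index: int) -> list[tuple[bytes, bool]]:
    """Sibling hashes from leaf to root; the flag is True when the sibling sits on the right."""
    proof = []
    for level in build_levels(items)[:-1]:
        sibling = index ^ 1
        sib_hash = level[sibling] if sibling < len(level) else level[index]
        proof.append((sib_hash, index % 2 == 0))
        index //= 2
    return proof


def verify_inclusion(item: bytes, proof: list[tuple[bytes, bool]], root: bytes) -> bool:
    """Recompute the path from the leaf and compare against the committed root."""
    acc = leaf_hash(item)
    for sibling, on_right in proof:
        acc = node_hash(acc, sibling) if on_right else node_hash(sibling, acc)
    return acc == root


if __name__ == "__main__":
    creds = [b"vc-1", b"vc-2", b"vc-3", b"vc-4", b"vc-5"]
    root = merkle_root(creds)
    print(verify_inclusion(b"vc-3", inclusion_proof(creds, 2), root))
```

The padding rule is the simplest option, but it makes `[a, b, c]` and `[a, b, c, c]` share a root. Production designs often choose a different rule for that reason.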

Quantitatively, figures reported on arXiv put ZKPROV proofs under 1 MB for billion-parameter models, with verification in seconds. This efficiency suits production use, and Google search data indicates surging interest in where ZK and AI intersect. Opinion: while hype swirls around scaling laws, provenance proofs deliver the grounded trust multiplier AI desperately needs, turning open-source abundance into reliable fuel.