ZK Proofs for Proving AI Training Data Licensing Compliance in Enterprise Models 2026

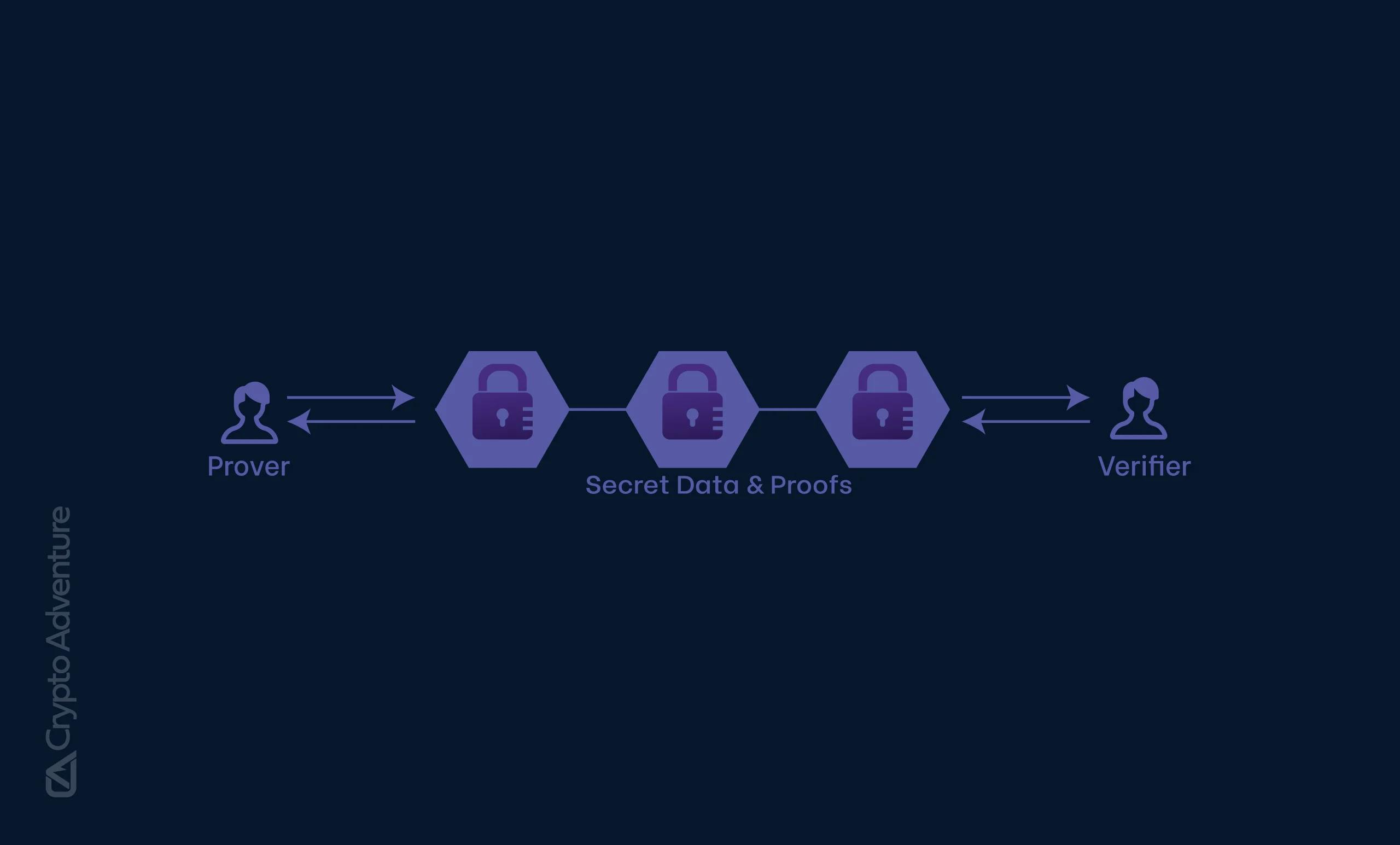

In March 2026, enterprises deploying AI models face a stark reality: regulators and clients demand ironclad proof of training data licensing compliance, yet exposing datasets risks intellectual property theft or privacy breaches. Zero-knowledge proofs (ZKPs) emerge as the linchpin, allowing firms to attest that training data originates from licensed sources without revealing a single byte. This technology, once niche, now underpins AI model provenance compliance, transforming how organizations build trust in machine learning pipelines.

The pressure intensifies as AI-generated content floods markets, prompting Gartner’s forecast that by 2028, half of organizations will adopt zero-trust data governance. Without verifiable attestations, models risk rejection in sectors like finance and healthcare, where data lineage dictates deployability. ZKPs address this by generating succinct proofs that training incorporated only authorized datasets, balancing zero-knowledge dataset verification with operational efficiency.

The Licensing Labyrinth Enterprises Must Navigate

Enterprise AI training often draws from sprawling datasets, each bound by intricate licensing terms. Public corpora like Common Crawl offer broad access, but proprietary supplements from vendors impose restrictions on usage, redistribution, and even model outputs. Non-compliance invites lawsuits, as seen in recent high-profile disputes over scraped content. Traditional audits falter here; they require data dumps, inviting scrutiny and delays.

ZKPs flip the script. Providers commit to dataset hashes before training, then prove inclusion via cryptographic circuits. Verifiers check a short proof against the published commitment and never see the raw data. This privacy-preserving approach to ML data origins aligns incentives: licensors protect assets, licensees demonstrate adherence, and auditors gain efficiency. Yet implementation hurdles persist, from proof generation latency to circuit complexity for billion-parameter models.
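The commit-then-prove pattern described above can be sketched in a few lines. This is a minimal illustration, assuming a salted SHA-256 hash as the commitment scheme; the function names `commit` and `verify_opening` are hypothetical, and none of this is taken from any specific framework. It is not zero-knowledge by itself: in a ZK system the opening would be checked inside a circuit rather than revealed to the verifier.

```python
import hashlib
import hmac
import secrets
from typing import Optional, Tuple


def commit(dataset_bytes: bytes, nonce: Optional[bytes] = None) -> Tuple[str, bytes]:
    """Return (commitment, nonce).

    The random 32-byte nonce hides the dataset: two parties holding the
    same data publish different commitments.
    """
    if nonce is None:
        nonce = secrets.token_bytes(32)
    digest = hashlib.sha256(nonce + dataset_bytes).hexdigest()
    return digest, nonce


def verify_opening(commitment: str, dataset_bytes: bytes, nonce: bytes) -> bool:
    """Check that (dataset_bytes, nonce) opens the published commitment."""
    expected = hashlib.sha256(nonce + dataset_bytes).hexdigest()
    return hmac.compare_digest(expected, commitment)


if __name__ == "__main__":
    licensed = b"licensed corpus snapshot"
    commitment, nonce = commit(licensed)           # published before training
    print(verify_opening(commitment, licensed, nonce))        # honest opening
    print(verify_opening(commitment, b"other data", nonce))   # substituted data
```

The provider publishes only `commitment` before training and keeps `nonce` private. Any later claim about the training data can then be tied back to that fixed value.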

ZKPROV Ushers in Verifiable Dataset Binding

At the forefront stands ZKPROV, a framework binding training datasets, model parameters, and even responses in a privacy-efficient package. Detailed in an arXiv paper (arXiv:2506.20915), it reports proof generation and verification in under 3.3 seconds for 8B-parameter LLMs, occupying a sweet spot in verifiable machine learning by prioritizing dataset provenance over mere computational correctness.

Consider the workflow: dataset curators publish Merkle commitments of licensed contents. During training, ZKPROV embeds these into the proof system, attesting subset usage. Post-training, enterprises generate verifiable AI training attestations for regulators or partners. This not only satisfies licensing but fortifies against adversarial queries probing data origins. Opinion: while skeptics decry overhead, ZKPROV’s metrics suggest it’s viable for production, outpacing naive disclosure methods.

Federated Frontiers with ZK-HybridFL

Beyond monolithic training, federated learning amplifies compliance woes, as nodes aggregate updates from siloed data. Enter ZK-HybridFL, fusing directed acyclic graph ledgers, sidechains, and ZKPs to validate local contributions sans exposure. Benchmarks reveal faster convergence and superior accuracy versus baselines, ideal for decentralized setups where licensing spans jurisdictions.

This hybrid validates model deltas cryptographically, ensuring only licensed local data influences the global model. Enterprises in multi-party collaborations, such as pharmaceutical consortia, benefit immensely; proofs double as audit trails. Rohan Sharma’s patent for zk-SNARK-based governance aims to scale this further, enabling real-time compliance proofs without exposing model weights. zkVMs complement this by compiling familiar code into provable circuits, democratizing ZKP adoption for AI developers.
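The gating idea, where an update only counts if it is tied to licensed data, can be sketched as a filter in front of ordinary federated averaging. Everything here is an assumption made for illustration: the `Update` fields, the `approved_roots` allowlist, and the pluggable `verify_proof` callback standing in for a real ZK verifier. It does not reproduce ZK-HybridFL's ledger or sidechain design.

```python
from dataclasses import dataclass
from typing import Callable, List, Sequence, Set


@dataclass
class Update:
    node_id: str
    dataset_root: str    # commitment to the node's local (licensed) data
    delta: List[float]   # flattened model delta
    proof: bytes         # opaque proof blob; format is hypothetical


def aggregate(
    updates: Sequence[Update],
    approved_roots: Set[str],
    verify_proof: Callable[[Update], bool],
) -> List[float]:
    """Average only the deltas whose dataset commitment is approved
    and whose proof passes the supplied verifier."""
    accepted = [
        u for u in updates
        if u.dataset_root in approved_roots and verify_proof(u)
    ]
    if not accepted:
        raise ValueError("no update passed compliance checks")
    dim = len(accepted[0].delta)
    if any(len(u.delta) != dim for u in accepted):
        raise ValueError("accepted deltas have mismatched shapes")
    return [sum(u.delta[i] for u in accepted) / len(accepted) for i in range(dim)]
```

In a real deployment `verify_proof` would call the verifier of whichever proof system is in use. Keeping it as a parameter makes the compliance gate independent of that choice.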

These tools signal a maturation: ZKPs evolve from theoretical curios to enterprise staples, fortifying AI model provenance compliance amid rising stakes. As zkVerify layers millisecond verification atop, the agent economy gains verifiable compute, but challenges in scalability loom for trillion-parameter behemoths.

ExecMesh takes a more pragmatic route, prioritizing cryptographic commitments for immediate audit trails in AI provenance. Rather than depending on bleeding-edge ZK circuits, it verifies dataset hashes and training logs, giving regulated industries a privacy-preserving record of ML data origins. Preprints highlight its edge: deployable today, with paths to full ZK augmentation.
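A commitment-based audit trail of this kind can be approximated with a hash-chained log, where each entry commits to the one before it. The sketch below is a generic illustration under assumptions and is not ExecMesh's actual design: the entry fields, the `GENESIS` constant, and the function names are hypothetical.

```python
import hashlib
import json
from typing import Any, Dict, List

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _entry_hash(prev_hash: str, event: Dict[str, Any]) -> str:
    # sort_keys gives a stable serialization, so the same event always hashes the same
    payload = json.dumps(event, sort_keys=True)
    return hashlib.sha256((prev_hash + payload).encode("utf-8")).hexdigest()


def append_entry(log: List[Dict[str, Any]], event: Dict[str, Any]) -> str:
    """Append an event, chaining it to the hash of the previous entry."""
    prev_hash = log[-1]["entry_hash"] if log else GENESIS
    entry_hash = _entry_hash(prev_hash, event)
    log.append({"prev_hash": prev_hash, "event": event, "entry_hash": entry_hash})
    return entry_hash


def verify_log(log: List[Dict[str, Any]]) -> bool:
    """Recompute every link; any edited, dropped, or reordered entry breaks the chain."""
    prev_hash = GENESIS
    for entry in log:
        if entry["prev_hash"] != prev_hash:
            return False
        if entry["entry_hash"] != _entry_hash(prev_hash, entry["event"]):
            return False
        prev_hash = entry["entry_hash"]
    return True
```

An auditor who holds only the latest `entry_hash` can detect later rewriting of the training history, because changing any earlier event changes every hash after it.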

Overview of ZK Proof Frameworks for AI Compliance

| Framework | Core Technology | Key Features for Compliance | Performance | Source |
|---|---|---|---|---|
| ZKPROV | ZKPs for dataset binding | Binds datasets, model params, responses; verifies certified LLM training data without exposure | <3.3s proof gen/verif for 8B params | arXiv:2506.20915 |
| Rohan Sharma’s Patent (Cryptographic AI Governance) | zk-SNARKs | Real-time compliance proofs without model weight exposure | Real-time at scale | rohansharma.net |
| ZK-HybridFL | ZKPs + DAG ledger/sidechains | Validates federated learning updates without sensitive data exposure | Faster convergence, higher accuracy | arXiv:2601.22302 |
| zkVerify | Universal ZK verification layer | Fast, cost-effective proofs for AI compute verification | Millisecond-fast | zkVerify |
| ExecMesh | Commitment-based ZK | Cryptographically verifiable AI provenance & audit trails | Immediate compliance verification | Preprints.org |
| zkVMs | Zero-Knowledge Virtual Machines | Provable circuits for privacy-preserving AI apps | Scalable verifiable computations | Forbes |

Comparative Edge: Frameworks in the Arena

Navigating options demands scrutiny. ZKPROV excels in binding datasets to responses, ideal for LLM auditors demanding end-to-end provenance. ZK-HybridFL targets federated realms, securing multi-node licensing across borders. Meanwhile, blockchain-infused systems like those in AI-blockchain journals shield model weights during sharing, tackling IP fears head-on. Each carves a niche, but hybrids prevail for enterprise scale.

| Framework | Proof Time | Model Scale | Key Strength |
|---|---|---|---|
| ZKPROV | <3.3s | Up to 8B params | Dataset-response binding |
| ZK-HybridFL | Variable (ledger-synced) | Federated large | Decentralized validation |
| ExecMesh | Instant commitment | Any (audit-focused) | Compliance trails now |

This tableau underscores no one-size-fits-all; enterprises mix them surgically. Finance giants, for instance, layer ExecMesh atop ZKPROV for dual-speed compliance: rapid audits plus deep proofs. Opinion: purists chasing full ZK may lag, while pragmatists capture market share first.

Real-World Traction and Hurdles

Adoption surges in 2026. zkVerify’s universal layer slashes verification to milliseconds, fueling agent economies where AI agents trade compute under licensing proofs. Patent landscapes bloom; Sharma’s zk-SNARK governance promises compliance proofs that never expose model weights. zkVMs lower barriers, letting developers write provable programs in Rust or Go and compile them to proofs for zero-knowledge dataset verification.

Yet friction endures. Proof sizes balloon with data volume, verifier trust assumptions falter under quantum shadows, and integration taxes legacy stacks. House of ZK posits correctness over provenance as the holy grail; fair point, but licensing blocks deployment today. Verifiers must evolve, blending ZK with trusted execution for hybrid vigor.

Gartner’s zero-trust pivot by 2028 looms large, pressuring laggards. Sectors like healthcare, mandating HIPAA-aligned provenance, pioneer ZK attestations for patient-derived models. Blockchain provenance audits, per ResearchGate, already nix raw data dumps, proving compliance cryptographically.

Charting the Path Forward

By late 2026, expect zkVM proliferation, rendering ZKPs as routine as HTTPS. Agent swarms will self-attest licensing in real-time trades, zkVerify underpinning the bustle. Challenges? Circuit optimization lags hardware curves, but FPGA accelerations close gaps. Nuanced view: ZKPs won’t solo-solve AI trust; they anchor a stack with governance and oracles.

Enterprises wielding verifiable AI training attestations gain moats: compliant models fetch premiums, and partnerships flourish without NDAs. Skeptics note overheads (10-20% training inflation), but ROI crystallizes in dodged fines and unlocked data markets. Patience pays; as circuits mature, ZK proofs will cement training data licensing attestations as table stakes.

Vision sharpens: AI pipelines where provenance proofs ship with models, regulators verify at glance, licensors monetize securely. This ZK renaissance fortifies enterprise models against 2026’s compliance tempests, blending privacy with proof in a verifiable dawn.