ZK Proofs for Verifying AI Training Data Provenance Without Revealing Datasets

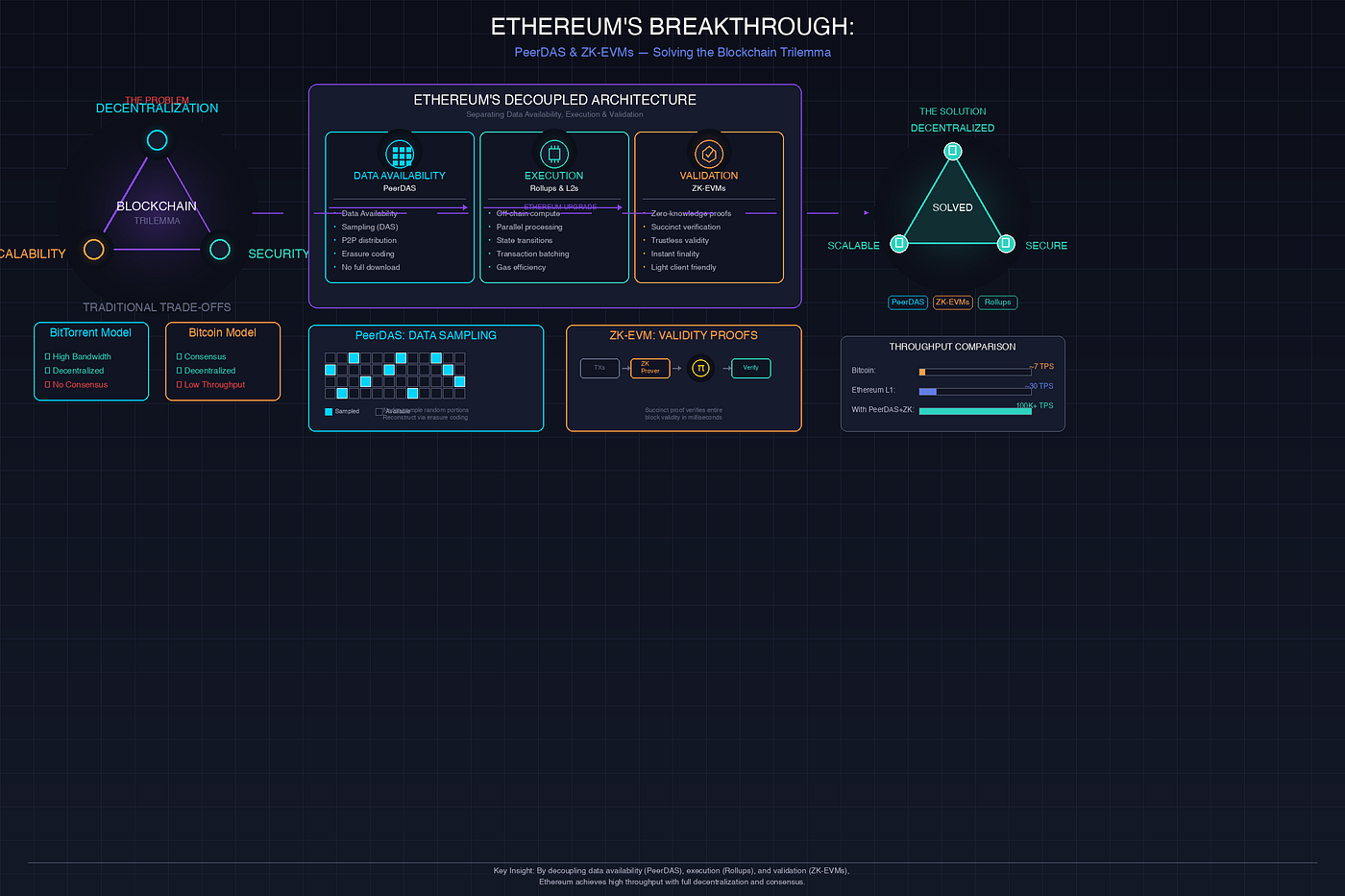

In the rush to build ever more powerful AI models, one nagging question lingers: where did all that training data come from? Developers and regulators alike want proof of a model’s provenance, yet revealing datasets risks exposing trade secrets, patient records, or copyrighted material. Enter zero-knowledge proofs (ZKPs), cryptographic protocols that let you verify where AI training data came from without disclosing the sensitive data itself. This isn’t just theory; recent work is making it practical, balancing privacy with accountability in ways that could redefine trustworthy AI.

Why Data Provenance Matters More Than Ever

Picture this: a healthcare AI claims it’s trained on licensed clinical trial data, but how do you know? Without robust checks, you’re gambling on black-box assurances. Traditional audits force full disclosure, which clashes with GDPR or HIPAA obligations. ZKPs flip the script, enabling zero-knowledge dataset attestation that confirms data sources, licensing compliance, and even training fidelity, all while keeping the contents hidden.

From my vantage point blending tech and strategy, this shift feels inevitable. We’ve seen scandals where models regurgitate pirated content; now, tools like these enforce discipline without stifling innovation. Sectors like finance, where models crunch proprietary trades, stand to gain most: they can prove in zero knowledge that their training data is properly licensed, without competitive leaks.

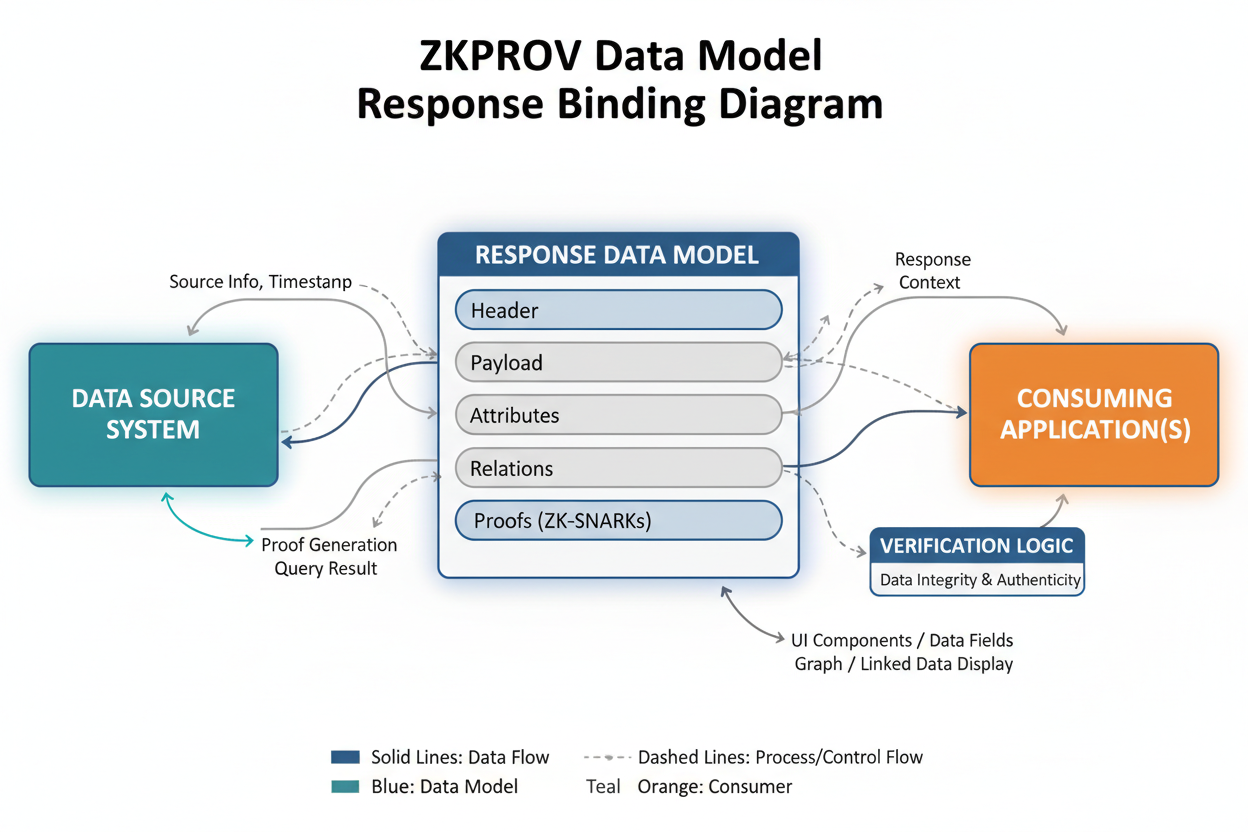

ZKPROV: Binding Data, Models, and Outputs Seamlessly

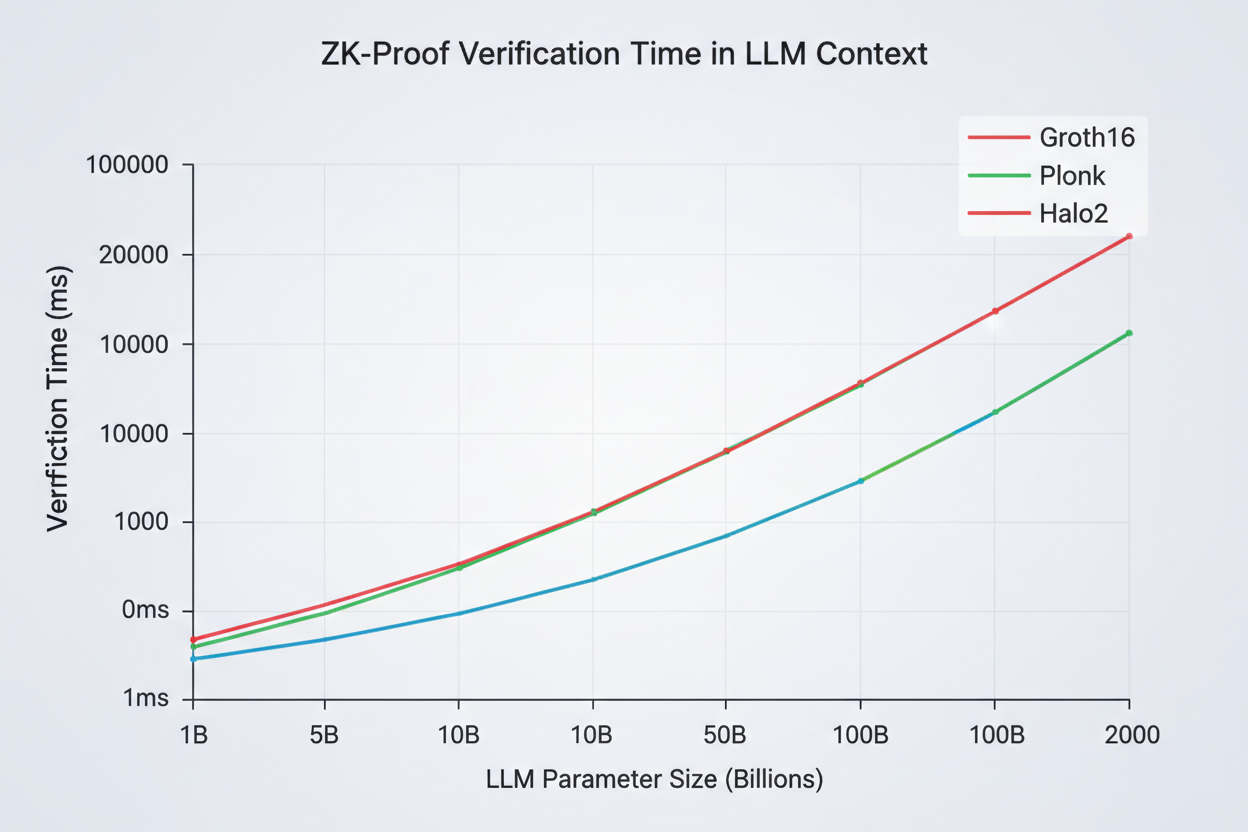

At the forefront sits ZKPROV, a framework detailed in a recent arXiv paper. It binds training datasets, model parameters, and even model responses into verifiable packages. Attach a ZK proof to any LLM output, and verifiers can confirm it stems from authorized data, no peeking required. The reported experiments clock end-to-end overhead under 3.3 seconds for 8-billion-parameter models, with sublinear scaling that bodes well for larger deployments.
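
To make the binding idea concrete, here is a minimal sketch that ties a dataset, a model, and a response together with plain SHA-256 hashes. It is not ZKPROV’s construction and it is not zero-knowledge: a real system would prove statements about these values inside a ZK circuit. The helper names (`h`, `merkle_root`, `bind`) and the placeholder records are assumptions made purely for illustration.

```python
import hashlib


def h(*parts: bytes) -> bytes:
    """SHA-256 over length-prefixed parts, so part boundaries are unambiguous."""
    digest = hashlib.sha256()
    for part in parts:
        digest.update(len(part).to_bytes(8, "big"))
        digest.update(part)
    return digest.digest()


def merkle_root(leaves: list[bytes]) -> bytes:
    """Merkle root over hashed records.

    An odd node is paired with itself, a common simplification that a
    production design would replace with an unambiguous padding rule.
    """
    if not leaves:
        raise ValueError("dataset must contain at least one record")
    level = [h(b"leaf", leaf) for leaf in leaves]
    while len(level) > 1:
        if len(level) % 2:
            level.append(level[-1])
        level = [h(b"node", level[i], level[i + 1]) for i in range(0, len(level), 2)]
    return level[0]


def bind(dataset_root: bytes, model_digest: bytes, response: bytes) -> bytes:
    """One digest tying a response to a specific dataset root and model digest."""
    return h(b"binding", dataset_root, model_digest, h(b"response", response))


# Hypothetical placeholder data, standing in for real records and weights.
records = [b"licensed record 1", b"licensed record 2", b"licensed record 3"]
root = merkle_root(records)
model_digest = h(b"model", b"placeholder weights bytes")
tag = bind(root, model_digest, b"model answer text")
print(tag.hex())
```

Changing any record, the model bytes, or the response changes `tag`, which is the property a provenance proof builds on. Note that unsalted hashes of low-entropy records can be guessed by brute force, so hashing alone does not give the confidentiality that a zero-knowledge proof is meant to provide.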

What impresses me is the formal security: it guarantees dataset confidentiality alongside provenance. No more trusting model cards at face value; this delivers cryptographic muscle. For developers, it’s a boon: generate proofs once, deploy anywhere, fostering ecosystems where privacy-preserving proofs about AI training data become standard.

Key Advantages of ZKPROV

- End-to-end overhead under 3.3s for LLMs up to 8B parameters (arXiv)
- Binds data, parameters, and responses via zero-knowledge proofs
- Sublinear scaling of proof generation and verification
- Formal privacy guarantees that preserve dataset confidentiality
- Enables licensed-data compliance with verifiable provenance

Federated Learning Gets a ZK Upgrade with zkFL-Health

ZKPs don’t stop at single datasets. Take zkFL-Health, which merges federated learning (FL) with ZKPs and trusted execution environments (TEEs). In multi-institution medical AI, clients train locally and commit to their updates cryptographically. An aggregator running in a TEE computes the global model and emits a succinct ZK proof that it used exactly the committed updates and applied the correct aggregation rule, without revealing any client data.
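
The commit-then-aggregate flow can be sketched as follows. This is an illustrative stand-in, not zkFL-Health’s protocol: the check below runs in the clear, whereas the real system would express the same statement in a ZK circuit so the verifier never sees the updates. The function names, the salted SHA-256 commitment, and the use of plain federated averaging are all assumptions.

```python
import hashlib
import secrets
import struct


def commit(update: list[float], nonce: bytes) -> bytes:
    """Salted SHA-256 commitment to a client's update vector (16-byte nonce)."""
    payload = struct.pack(f">{len(update)}d", *update)
    return hashlib.sha256(nonce + payload).digest()


def aggregate(updates: list[list[float]]) -> list[float]:
    """Plain federated averaging: element-wise mean of the client updates."""
    n = len(updates)
    return [sum(column) / n for column in zip(*updates)]


def check_aggregation(
    commitments: list[bytes],
    openings: list[tuple[list[float], bytes]],
    claimed_global: list[float],
) -> bool:
    """The statement an aggregator would prove in zero knowledge:
    every opening matches its published commitment, and the claimed
    global model is the average of exactly those updates."""
    if not openings or len(commitments) != len(openings):
        return False
    for published, (update, nonce) in zip(commitments, openings):
        if commit(update, nonce) != published:
            return False
    return aggregate([update for update, _ in openings]) == claimed_global


# Three hypothetical clients, each with a two-parameter update.
client_updates = [[0.1, 0.2], [0.3, 0.4], [0.5, 0.6]]
openings = [(update, secrets.token_bytes(16)) for update in client_updates]
commitments = [commit(update, nonce) for update, nonce in openings]
global_model = aggregate(client_updates)
ok = check_aggregation(commitments, openings, global_model)
print(ok)
```

Swapping in a different update, or claiming a different global model, makes `check_aggregation` return `False`. In a ZK setting, only `commitments`, `claimed_global`, and the proof would be public.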

Verifier nodes check proofs and log commitments on-chain for an immutable trail. This nukes single points of trust, perfect for clinical use where auditability meets confidentiality. Imagine hospitals collaborating on diagnostics AI: integrity assured, privacy intact, regulators happy. It’s a blueprint for regulated AI, extending beyond health to any collaborative setup hungry for verifiable joint training.
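
The audit trail idea can be approximated with a hash-chained, append-only log. This is a simplified, single-process stand-in for an on-chain record, and the entry fields (`commitment`, `proof_ok`) are hypothetical names chosen for the sketch rather than anything specified by zkFL-Health.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _entry_hash(body: dict) -> str:
    """Hash a log entry body using a canonical (sorted-key) JSON encoding."""
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()


def append_entry(log: list[dict], commitment_hex: str, proof_ok: bool) -> dict:
    """Append an entry that links to the hash of the previous entry."""
    body = {
        "index": len(log),
        "prev_hash": log[-1]["entry_hash"] if log else GENESIS,
        "commitment": commitment_hex,
        "proof_ok": proof_ok,
    }
    entry = {**body, "entry_hash": _entry_hash(body)}
    log.append(entry)
    return entry


def verify_log(log: list[dict]) -> bool:
    """Recompute every hash and link; any edited entry breaks the chain."""
    prev = GENESIS
    for index, entry in enumerate(log):
        body = {key: value for key, value in entry.items() if key != "entry_hash"}
        if entry["index"] != index or entry["prev_hash"] != prev:
            return False
        if _entry_hash(body) != entry["entry_hash"]:
            return False
        prev = entry["entry_hash"]
    return True


audit_log: list[dict] = []
for round_commitment in (b"round-1", b"round-2", b"round-3"):
    append_entry(audit_log, hashlib.sha256(round_commitment).hexdigest(), proof_ok=True)
print(verify_log(audit_log))
```

A blockchain adds what this sketch lacks: replication and consensus, so that no single party can quietly rewrite the whole chain.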

These frameworks signal a maturing field where ZK-based verification of AI training data is evolving from niche research into deployable infrastructure. zkVerify takes it further, offering proof verification for model training, inference, and even LoRA adaptation. This modular approach suits dynamic AI pipelines, so every stage, from pre-training to fine-tuning, can carry provable privacy and compliance attestations. Developers can mix and match, scaling privacy-preserving proofs across workflows without architectural overhauls.

Comparison of ZK AI Frameworks

| Framework | Key Features | Performance/Overhead | Primary Applications |
|---|---|---|---|
| ZKPROV | Binds training datasets, model parameters, and responses; ZK proofs on LLM outputs | <3.3s end-to-end overhead for 8B models; sublinear scaling | Verifying authorized datasets for LLMs without disclosure |
| zkFL-Health | Federated Learning with ZKPs & TEEs; clients commit updates, aggregator proves correct aggregation | Succinct ZK proof; on-chain audit trail | Privacy-preserving medical AI training across institutions |
| zkVerify | ZK proofs for model training, inference, and LoRA adaptation | Not specified | AI trust, provable privacy, and regulatory compliance |
| ZKML | Cryptographic proofs verifying correct execution of ML training procedures | Not specified | General verifiable machine learning inference |

Overcoming Hurdles in ZK-AI Integration

Don’t get me wrong; ZKPs aren’t plug-and-play yet. Proof generation demands hefty compute, especially for billion-parameter behemoths. ZKPROV’s sublinear scaling helps, but widespread adoption hinges on hardware acceleration, such as GPU-optimized circuits. Then there’s interoperability: proofs from one system must verify seamlessly across chains or clouds. zkFL-Health leverages TEEs as a bridge, blending hardware trust with cryptographic rigor for hybrid robustness.

Regulatory alignment adds another layer. While ZKPs shine for zero-knowledge dataset attestation, compliance regimes like the EU AI Act favor standardized formats. Imagine a universal ZK schema for attesting training data licenses: it could streamline audits and cut compliance costs considerably. My balanced view? Optimism tempered by pragmatism: pilot in high-stakes verticals first, iterate on feedback, then roll out broadly.

Real-World Edge: From Finance to Pharma

Envision banks deploying trading models proven to be trained on licensed market data, without leakage risks. Or pharma giants pooling trial datasets for drug discovery AI, with ZKPs vouching for integrity. ChainScore Labs nails it: zk-SNARKs unlock private compliance and provenance, turning a liability into a competitive moat. Kudelski’s ZKML verifies that training procedures ran to spec, closing the reproducibility gaps that plague open-source models.

This isn’t hype; it’s strategic necessity. As AI agents proliferate (think autonomous traders or diagnostic bots), demand for verifiable model provenance will only grow. CoinDesk spots ZKPs as the backbone for trusted AI identities, enabling seamless interactions between organizations. Telefónica Tech echoes the point: ZKPs let you prove a statement without revealing anything beyond it, which is what lets secure ecosystems scale.

Yet balance demands scrutiny. ZK tech matures fast, but over-reliance risks centralizing proof infrastructure. Decentralized verifiers, as in zkFL-Health’s on-chain logs, counter this nicely. Ankita Singh’s Medium piece captures the essence: completeness ensures honest provers succeed, soundness blocks cheats, zero-knowledge seals privacy. Together, they forge verification bridges sturdy enough for production AI.
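
These three properties can be seen in a toy run of the Schnorr identification protocol, a classic proof of knowledge of a discrete logarithm. This is a sketch under loud assumptions: the group parameters are tiny and insecure, the protocol is only honest-verifier zero-knowledge, and it proves knowledge of a secret exponent, not anything about training data. It is here only to show what completeness, soundness, and zero-knowledge mean in running code.

```python
import secrets

# Toy group: G = 2 has prime order Q = 11 modulo P = 23. Insecure sizes.
P, Q, G = 23, 11, 2


def keygen() -> tuple[int, int]:
    """Secret x in [1, Q-1] and public key y = G^x mod P."""
    x = secrets.randbelow(Q - 1) + 1
    return x, pow(G, x, P)


def prover_commit() -> tuple[int, int]:
    """Prover picks a random r and sends t = G^r mod P."""
    r = secrets.randbelow(Q)
    return r, pow(G, r, P)


def prover_respond(r: int, x: int, c: int) -> int:
    """Response to challenge c: s = r + c*x mod Q."""
    return (r + c * x) % Q


def verify(y: int, t: int, c: int, s: int) -> bool:
    """Accept iff G^s == t * y^c (mod P)."""
    return pow(G, s, P) == (t * pow(y, c, P)) % P


def simulate(y: int) -> tuple[int, int, int]:
    """Build an accepting transcript (t, c, s) WITHOUT the secret x.
    That such transcripts exist is why a transcript leaks nothing about x."""
    c = secrets.randbelow(Q)
    s = secrets.randbelow(Q)
    t = (pow(G, s, P) * pow(y, (-c) % Q, P)) % P
    return t, c, s


x, y = keygen()
r, t = prover_commit()
c = 5  # fixed nonzero challenge so the run is reproducible
s = prover_respond(r, x, c)
print("completeness:", verify(y, t, c, s))            # honest prover accepted

s_bad = prover_respond(r, (x + 1) % Q, c)             # prover with the wrong secret
print("soundness:", not verify(y, t, c, s_bad))       # rejected for nonzero c

print("zero-knowledge:", verify(y, *simulate(y)))     # simulated transcript accepted
```

With a challenge space of only 11 values, a cheating prover could still guess the challenge in advance about one time in eleven; real deployments use groups and challenge spaces large enough to make that negligible.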

Strategically, integrate ZKPs early in your stack. Start small: attest fine-tuning runs, then graduate to full provenance chains. Tools like these don’t just verify; they build moats around your IP, signaling to partners and watchdogs alike that you’re ahead of the curve. In a world chasing AI dominance, privacy-preserving proofs about training data aren’t optional; they’re the discipline that separates winners from also-rans. The trajectory is clear: verifiable, private AI isn’t tomorrow’s promise; it’s today’s toolkit, ready to deploy.