ZK Proofs for Verifying AI Training Data Licensing Without Revealing Dataset Contents

In the rapidly evolving landscape of artificial intelligence, proving that training datasets are properly licensed presents a thorny dilemma. Developers need to demonstrate compliance with data usage agreements, yet revealing dataset contents risks intellectual property theft or privacy breaches. Enter zero-knowledge proofs (ZKPs), a cryptographic technique that flips this script. With ZK proofs for training data licensing, parties can verify a dataset’s provenance and confirm authorization without exposing a single byte of sensitive information. This isn’t just theory; recent frameworks are starting to make it practical.

Consider the stakes. AI models like large language models guzzle vast datasets, often pieced together from licensed sources, public repositories, and proprietary collections. Regulators demand transparency, enterprises insist on compliance, and creators want royalties protected. Traditional audits force disclosure, breeding distrust. ZKPs sidestep this by letting the prover demonstrate that the training data matches a committed hash, or has a valid Merkle inclusion proof against a set of licensed records, without handing over the data itself. It’s like proving you hold a valid ticket without ever passing the ticket across the counter.
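To make the Merkle idea concrete, here is a minimal sketch of an inclusion proof over a list of licensed record identifiers. The function names (`build_levels`, `prove`, `verify`) and the record labels are illustrative assumptions, not part of any framework mentioned in this article. Note that a plain Merkle proof is not zero-knowledge on its own: it reveals the leaf being checked. In a ZK setting, a check like `verify` would typically be evaluated inside a proof circuit so the leaf and path stay hidden.

```python
import hashlib

def h(data: bytes) -> bytes:
    return hashlib.sha256(data).digest()

def build_levels(leaves: list[bytes]) -> list[list[bytes]]:
    """Return every tree level, from hashed leaves up to the single root."""
    level = [h(b"\x00" + leaf) for leaf in leaves]  # 0x00 prefix marks leaves
    levels = [level]
    while len(level) > 1:
        if len(level) % 2:  # odd level: pair the last node with itself
            level = level + [level[-1]]
        level = [h(b"\x01" + level[i] + level[i + 1])  # 0x01 marks inner nodes
                 for i in range(0, len(level), 2)]
        levels.append(level)
    return levels

def prove(levels: list[list[bytes]], index: int) -> list[tuple[bytes, bool]]:
    """Collect (sibling_hash, node_is_right_child) pairs from leaf to root."""
    path = []
    for level in levels[:-1]:
        sibling = index ^ 1
        if sibling >= len(level):  # last node on an odd level
            sibling = index
        path.append((level[sibling], index % 2 == 1))
        index //= 2
    return path

def verify(root: bytes, leaf: bytes, path: list[tuple[bytes, bool]]) -> bool:
    """Recompute the root from a leaf and its path, then compare."""
    node = h(b"\x00" + leaf)
    for sibling, node_is_right in path:
        pair = sibling + node if node_is_right else node + sibling
        node = h(b"\x01" + pair)
    return node == root

# Hypothetical licensed records; the root is what a licensor would publish.
records = [b"license:corpus-a", b"license:corpus-b", b"license:corpus-c"]
levels = build_levels(records)
root = levels[-1][0]
print(verify(root, records[2], prove(levels, 2)))
```

The verifier only needs the published root and a logarithmic-size path, which is why Merkle commitments pair well with succinct proof systems.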

Demystifying Zero-Knowledge Proofs in AI Contexts

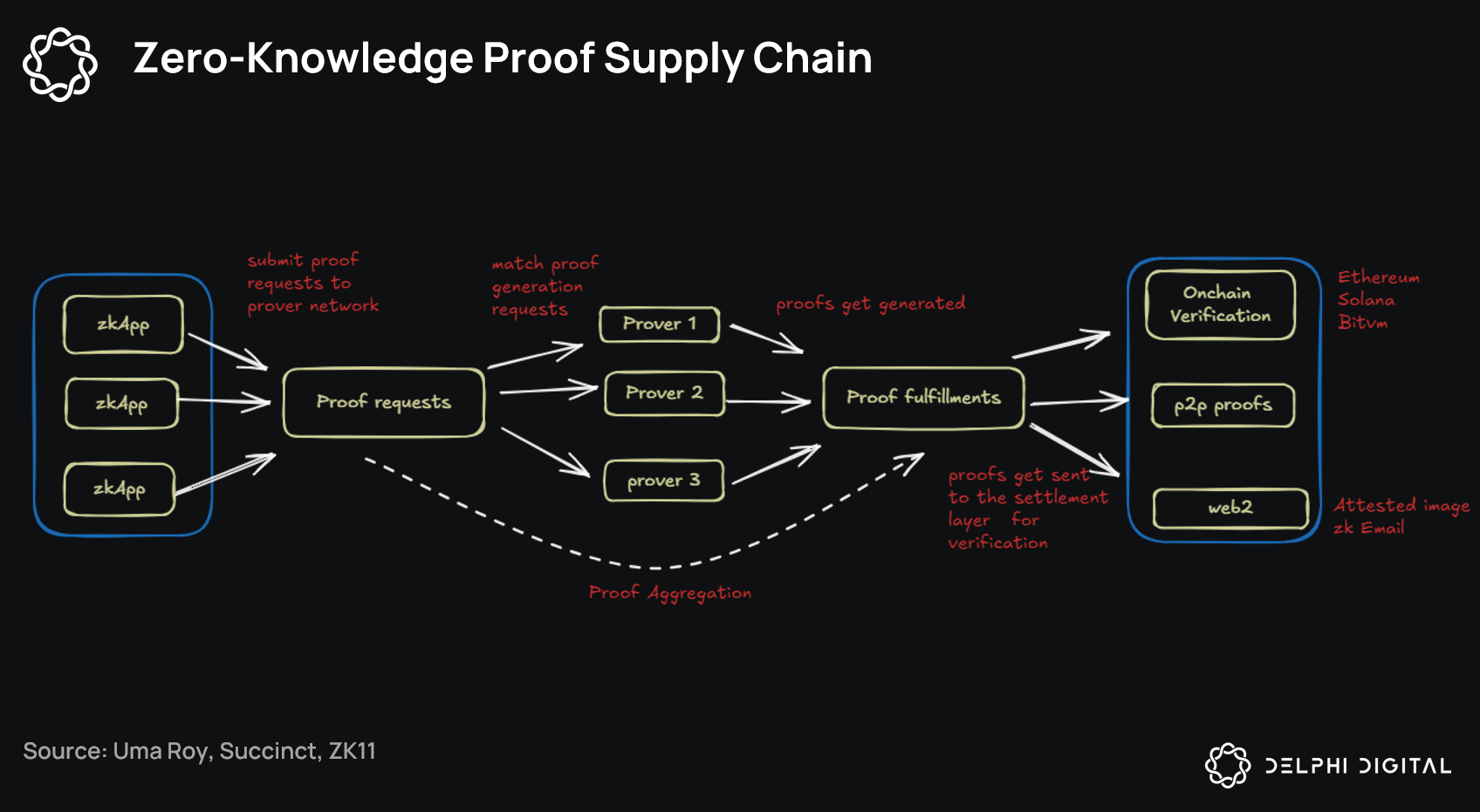

At their core, ZKPs rely on protocols like zk-SNARKs or zk-STARKs, where a prover convinces a verifier of a statement’s truth with negligible information leakage. For AI compliance, this translates to attesting that a model’s training incorporated only permitted data slices. Imagine a neural network’s weights as a fingerprint of its diet; ZKPs prove the diet matched the menu without serving the meal.

Why does this matter now? Compute costs for full proofs once deterred adoption, but optimizations in recursive proofs and hardware acceleration have slashed times from days to minutes. Platforms like zkVerify exemplify this shift, offering millisecond-fast validation for verifiable training data attestations. Their universal layer integrates seamlessly, supporting private training and inference while upholding privacy.

Core ZK Proof Benefits

- Privacy preservation for proprietary datasets: Verify licensing without exposing data, as in the ZKPROV framework.
- Scalable compliance for enterprise AI: Efficient proofs enable regulatory adherence, supported by the zkVerify platform.
- Reduced legal risks in data licensing: Prove authorized training without disclosure, minimizing disputes, per ZenoTrust™ insights.
- Open-source models with commercial safeguards: Balance sharing and protection, as in ByzSFL’s secure federated learning.
- Audit trails without data exposure: Generate verifiable logs privately, as zkFL-Health does for medical AI.

ZKPROV: Pioneering Privacy-Preserving Provenance

Launched in June 2025, the ZKPROV framework stands out as a game-changer for privacy-preserving model provenance. Detailed in an arXiv paper, it verifies that LLMs are trained on authority-certified datasets, tying relevance to user queries without spilling model parameters or data secrets. Experimental benchmarks show proof generation in under an hour for billion-parameter models, with verification in seconds, which makes it feasible for production.

ZKPROV’s elegance lies in its modular design. It computes dataset hashes incrementally during training, aggregates them into a commitment, and generates succinct proofs. Verifiers check against public licensing manifests, ensuring only greenlit sources contributed. This addresses a blind spot in current AI stacks: provenance opacity. No longer do we have to guess whether a model ingested pirated content; ZKPs provide cryptographic evidence that anyone holding the manifest can check independently.
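The hash-then-commit-then-check flow can be sketched in a few lines. This is an illustration of the general pattern under stated assumptions, not ZKPROV’s actual construction: the class `TrainingCommitment`, the helper `check_against_manifest`, and the `commitment-v0` seed are all hypothetical names. The check below runs in the clear; in a real ZK system the digest list would stay private and the same statement would be proven inside a circuit.

```python
import hashlib

SEED = hashlib.sha256(b"commitment-v0").digest()  # hypothetical domain tag

class TrainingCommitment:
    """Running hash chain over digests of datasets absorbed during training."""

    def __init__(self) -> None:
        self.state = SEED
        self.sources: list[str] = []

    def absorb(self, dataset_bytes: bytes) -> str:
        # Hash the dataset, then fold its digest into the running state.
        digest = hashlib.sha256(dataset_bytes).hexdigest()
        self.state = hashlib.sha256(self.state + bytes.fromhex(digest)).digest()
        self.sources.append(digest)
        return digest

def recompute(digests: list[str]) -> bytes:
    """Rebuild the commitment from an ordered list of dataset digests."""
    state = SEED
    for d in digests:
        state = hashlib.sha256(state + bytes.fromhex(d)).digest()
    return state

def check_against_manifest(commitment: bytes, digests: list[str],
                           manifest: set[str]) -> bool:
    """True only if every digest is licensed and the chain matches."""
    return all(d in manifest for d in digests) and recompute(digests) == commitment

# Two hypothetical licensed corpora and the manifest that lists them.
c = TrainingCommitment()
d1 = c.absorb(b"licensed corpus A")
d2 = c.absorb(b"licensed corpus B")
manifest = {d1, d2}
print(check_against_manifest(c.state, c.sources, manifest))
```

Because the state is a chain, dropping, adding, or reordering a source changes the final commitment, so the commitment pins down exactly which datasets were absorbed and in what order.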

Opinion: While skeptics decry overhead, ZKPROV’s efficiency metrics silence them. In my view, it’s the missing link for trustworthy AI, blending cryptography’s rigor with machine learning’s scale.

Emerging Systems Building on ZK Foundations

Beyond ZKPROV, innovations proliferate. ByzSFL, from January 2025, bolsters federated learning with Byzantine-robust ZK aggregation. Parties compute weights locally, prove correctness privately, yielding secure averages without a trusted aggregator. This cuts collusion risks in multi-party training, vital for collaborative AI.
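As a rough illustration of what a verified contribution means in federated averaging, the sketch below accepts only updates whose L2 norm falls within an agreed bound and averages the rest. This is a stand-in rule chosen for simplicity, not ByzSFL’s actual protocol: the function `robust_average` and the `max_norm` parameter are assumptions, and the norm check is done in the clear here, whereas a ZK-based system would have each party prove the bound without revealing its update.

```python
import math

def l2_norm(v: list[float]) -> float:
    return math.sqrt(sum(x * x for x in v))

def robust_average(updates: list[list[float]],
                   max_norm: float) -> tuple[list[float], int]:
    """Average only updates within the norm bound; return (mean, accepted count)."""
    accepted = [u for u in updates if l2_norm(u) <= max_norm]
    if not accepted:
        raise ValueError("no update satisfied the norm bound")
    dim = len(accepted[0])
    mean = [sum(u[i] for u in accepted) / len(accepted) for i in range(dim)]
    return mean, len(accepted)

# Two well-behaved parties and one outlier that tries to skew the model.
updates = [[1.0, 0.0], [0.0, 1.0], [100.0, 100.0]]
mean, n_accepted = robust_average(updates, max_norm=2.0)
print(mean, n_accepted)
```

A norm bound is only one of several possible robustness rules; the point is that each accepted update comes with a checkable claim, so no single party has to be trusted to filter honestly.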

Then there’s zkFL-Health, unveiled December 2025, fusing ZKPs with trusted execution environments for medical AI. Multi-institutional datasets train verifiably correct models, shielding patient data while enabling audits. Integrity proofs ensure no tampering, confidentiality bars leaks – a boon for healthcare’s ethical tightrope.

These aren’t silos; they converge on a unified vision. ZK-based verification of training data licensing is emerging as a standard, much as HTTPS did for web trust. Platforms like zkVerify accelerate this, with hardware-optimized verifiers that promise cost parity with non-ZK workflows. As adoption grows, expect licensing marketplaces to embed ZK natively, rewarding compliant data with premium attestations.

Yet integration hurdles remain. Generating ZK proofs demands specialized knowledge, and not all AI frameworks support them natively. zkVerify tackles this head-on with plug-and-play modules, bridging the gap for developers wary of crypto complexity. Their verifiable training data attestations shine in agent economies, where AI agents swap models mid-task, demanding instant trust signals.

Real-World Applications Across Industries

Healthcare offers a prime testing ground. zkFL-Health’s architecture proves indispensable for hospitals pooling anonymized scans without central data hoarding. Regulators verify compliance via public proofs, patients retain sovereignty, and models improve collectively. This sidesteps GDPR pitfalls, turning liability into leverage.

Finance follows suit. Banks train fraud detectors on licensed transaction histories, proving to auditors that no illicit data snuck in. ZKPs enable this kind of compliance at scale, where legacy audit processes balk. Enterprises such as those using ZenoTrust layer regulatory reasoning atop ZK, automating audits across jurisdictions.

Creative sectors benefit too. Media firms license image corpora for generative AI, attesting usage caps without metadata dumps. ZKPROV-style commitments track token burns or derivative limits, fostering fair ecosystems. It’s a creative boon: artists license confidently, knowing royalties tie to verifiable provenance.

These cases underscore a pivotal shift. ZK proofs don’t just verify; they incentivize ethical data markets. Providers badge datasets with ZK commitments, buyers query proofs pre-training. Blockchain hybrids amplify this, timestamping licenses immutably.
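The timestamping idea can be approximated without a full blockchain by a hash-chained, append-only log, where each entry commits to the one before it. The sketch below assumes hypothetical field names (`license_id`, `dataset_commitment`, `timestamp`) and takes the timestamp as an argument rather than reading a clock; a blockchain anchor would add what this sketch lacks, namely agreement among parties who do not trust the log keeper.

```python
import hashlib
import json

GENESIS = "0" * 64
FIELDS = ("license_id", "dataset_commitment", "timestamp", "prev_hash")

def entry_digest(body: dict) -> str:
    # Canonical JSON (sorted keys) so the same body always hashes the same way.
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()

def append_entry(log: list[dict], license_id: str,
                 dataset_commitment: str, timestamp: int) -> None:
    """Append a license record that commits to the previous entry's hash."""
    prev = log[-1]["entry_hash"] if log else GENESIS
    body = {"license_id": license_id, "dataset_commitment": dataset_commitment,
            "timestamp": timestamp, "prev_hash": prev}
    log.append({**body, "entry_hash": entry_digest(body)})

def verify_log(log: list[dict]) -> bool:
    """Check every link and every entry hash from the start of the log."""
    prev = GENESIS
    for entry in log:
        body = {k: entry[k] for k in FIELDS}
        if entry["prev_hash"] != prev or entry_digest(body) != entry["entry_hash"]:
            return False
        prev = entry["entry_hash"]
    return True

# Hypothetical license records with placeholder commitments.
log: list[dict] = []
append_entry(log, "lic-001", "ab" * 32, 1_700_000_000)
append_entry(log, "lic-002", "cd" * 32, 1_700_000_600)
print(verify_log(log))
```

Editing any earlier record changes its hash and breaks every later link, which is the property that makes after-the-fact tampering detectable.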

Overcoming Technical and Adoption Barriers

Sure, proof sizes and recursion depth challenge smaller teams. But STARK advancements and AI-assisted proof optimization, as explored in recent papers, compress footprints dramatically. Tools like those from the Montreal AI Ethics Institute experiment with training proofs directly, baking compliance into gradients.

Adoption lags for cultural reasons more than technical ones. Developers habituated to opacity resist the audit mindset. My take: treat ZK as infrastructure, much as Docker became for DevOps. Early movers gain moats: compliant models fetch premiums in marketplaces, while unproven ones face discounts.

Regulatory tailwinds help. The EU AI Act imposes data governance and documentation requirements on high-risk systems; ZKPs offer a succinct way to demonstrate compliance. U.S. bills echo this, prioritizing privacy tech. Platforms evolve accordingly, with zkVerify’s edge-native audits fitting decentralized norms.

Looking ahead, hybrid systems dominate. Combine ZK with federated learning for global datasets, or embed in inference chains for end-to-end trust. Licensing evolves to dynamic proofs: prove not just origin, but ongoing usage fidelity.

Navigating the Path Forward

Interoperability standards loom large. Initiatives akin to ZKPROV’s toolkit unify protocols, letting proofs chain across frameworks. Open-source pushes, like ByzSFL’s toolkit, democratize access, lowering entry for startups.

Challenges persist – quantum threats to elliptic curves spur post-quantum ZK races. Yet lattice-based schemes mature fast, future-proofing stacks. Cost curves bend toward zero with ASIC verifiers, mirroring Bitcoin’s efficiency march.

Ultimately, ZK proofs for training data licensing redefine AI’s social contract. No more black-box models; verifiable transparency becomes table stakes. Developers prove integrity, users trust outputs, ecosystems flourish. We’ve seen cryptography secure finance; now it safeguards intelligence itself.