Enterprise AI Deployments Rely on ZK Proofs for Training Data Compliance in 2026

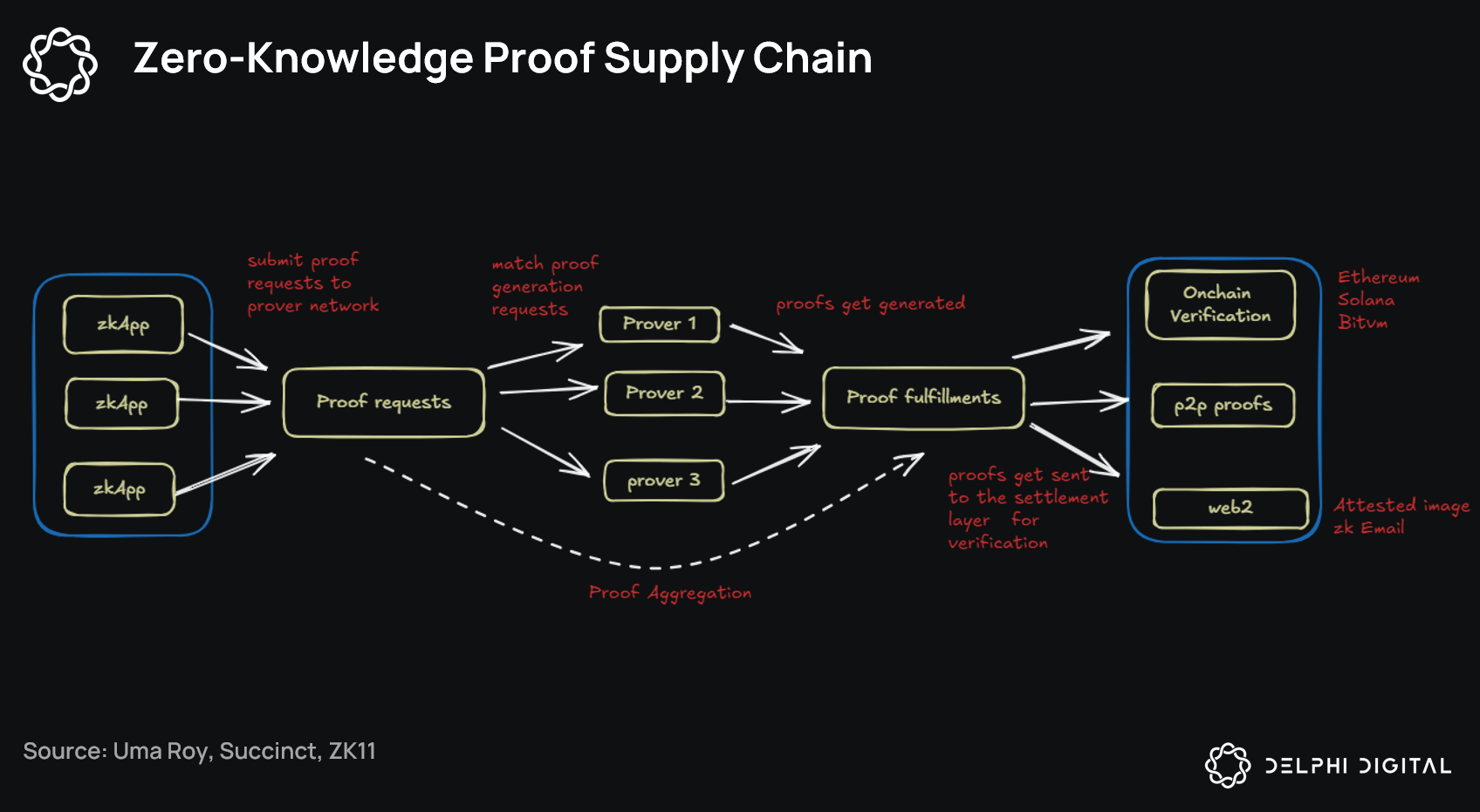

In 2026, enterprise AI teams are staring down a brutal reality: deploy cutting-edge models fast, or get buried under an avalanche of privacy regulations and data provenance demands. Finance giants crunching credit risk data and healthcare networks training on patient records are all racing to prove their training data is compliant without handing over the keys to their kingdom. Enter zero-knowledge proofs (ZKPs), the cryptographic wizardry that’s flipping the script on enterprise AI. Suddenly, you can attest to data origins, licensing, and integrity while keeping sensitive details locked tighter than a DeFi vault. It’s not hype; it’s the backbone of secure AI deployments, and regulators are starting to demand it.

Picture this: your AI model aces performance benchmarks, but auditors demand proof it wasn’t trained on pirated datasets and didn’t breach GDPR boundaries. Without ZKPs, you’re either redacting everything into oblivion or risking multimillion-dollar fines. Sources like Security Boulevard nail it: zero-knowledge compliance lets you flash regulatory adherence badges without exposing the goods. And with courts signaling tougher stances on copyrighted training data, per Baker Donelson’s 2026 forecast, ignoring this tech isn’t an option. It’s the difference between thriving deployments and endless legal headaches.

The Compliance Crunch Hitting Enterprises Hard

Fast-forward to February 2026, and the landscape is unforgiving. Sweeping US privacy laws are reshaping risks, as Evrim Ağacı points out, while global regulations pile on. Organizations face a paradox: AI thrives on massive datasets, yet every byte carries compliance landmines. Adverse rulings could spike costs for developers, forcing a pivot to verifiable methods. That’s where ZK model attestations for the enterprise shine. Platforms like zkVerify let you prove training integrity and ethical adherence without leaking proprietary details, building ironclad trust.

5 ZK Proof Wins for AI Compliance

- Prove data provenance without revealing sources: zkVerify lets you validate training data integrity while keeping sensitive info hidden.
- Validate licensing and regulations privately: zero-knowledge compliance proves adherence to privacy laws like GDPR without exposing data.
- Supercharge federated learning with ZK guarantees: the DP-RTFL framework adds privacy proofs for secure, verifiable AI training across devices.
- Slash audit times from months to minutes: once a proof exists, ZKPs enable fast, cryptographic verification of AI model compliance.
- Future-proof against evolving laws: stay ahead of 2026 privacy regulations with adaptable ZK tech for compliant AI deployments.
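To make the data provenance point a little more concrete, here is a minimal Python sketch of one common building block: a Merkle commitment over a dataset manifest. It is an illustration under assumptions, not how zkVerify or any platform named here works. The helper names (`build_levels`, `inclusion_proof`, `verify_inclusion`) and the manifest entries are hypothetical. Note also that a Merkle inclusion proof alone is not zero-knowledge: the record being checked is shown to the auditor, and only the other records stay hidden behind hashes. A real ZK system would run a check like this inside a proof circuit.

```python
import hashlib


def _h(data: bytes) -> bytes:
    return hashlib.sha256(data).digest()


def leaf_hash(record: bytes) -> bytes:
    # Domain-separate leaves from inner nodes so one cannot pose as the other.
    return _h(b"\x00" + record)


def node_hash(left: bytes, right: bytes) -> bytes:
    return _h(b"\x01" + left + right)


def build_levels(records: list[bytes]) -> list[list[bytes]]:
    """Return every level of the tree: leaves first, root level last."""
    if not records:
        raise ValueError("manifest must contain at least one record")
    level = [leaf_hash(r) for r in records]
    levels = [level]
    while len(level) > 1:
        # Simplification: an odd-sized level pairs its last node with itself.
        padded = level if len(level) % 2 == 0 else level + [level[-1]]
        level = [node_hash(padded[i], padded[i + 1])
                 for i in range(0, len(padded), 2)]
        levels.append(level)
    return levels


def inclusion_proof(levels: list[list[bytes]], index: int) -> list[tuple[bytes, bool]]:
    """Collect (sibling_hash, sibling_is_on_right) pairs from leaf up to root."""
    proof = []
    for level in levels[:-1]:
        sibling_index = index ^ 1
        # A missing sibling means the node was paired with itself.
        sibling = level[sibling_index] if sibling_index < len(level) else level[index]
        proof.append((sibling, index % 2 == 0))
        index //= 2
    return proof


def verify_inclusion(record: bytes, proof: list[tuple[bytes, bool]], root: bytes) -> bool:
    """Recompute the root from one record plus sibling hashes only."""
    acc = leaf_hash(record)
    for sibling, sibling_on_right in proof:
        acc = node_hash(acc, sibling) if sibling_on_right else node_hash(sibling, acc)
    return acc == root


# Hypothetical manifest entries: "dataset id | license" strings.
manifest = [
    b"dataset-a|license:CC-BY-4.0",
    b"dataset-b|license:commercial-2025",
    b"dataset-c|license:internal",
]
levels = build_levels(manifest)
published_root = levels[-1][0]            # the only value shared up front
proof = inclusion_proof(levels, 1)
print(verify_inclusion(manifest[1], proof, published_root))
```

The design choice here is that the enterprise publishes only the root hash, then answers an audit question about a single dataset with that record and a handful of sibling hashes. Records with little entropy can be guessed by brute force, so a production manifest would likely add a secret salt to each leaf.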

This isn’t theoretical fluff. Calibraint highlights how Zero Knowledge Proof AI powers agents for complex validations without exposing the model. Quicknode lists ZKML among its top 10 ZKP applications, emphasizing verification of ML training without data spills, which directly tackles data protection regulations.

ZKPs as the Privacy Engine for AI Scale

Dive deeper, and ZKPs aren’t just shields; they’re accelerators. AInvest calls them the strategic layer for private AI compute, letting enterprises run confidential operations while still producing proofs. Outperforming chains like Solana on the combination of privacy and scalability? Bold claim, and one worth benchmarking against your own workloads before a high-stakes system depends on it. Phemex echoes the case for secure AI training in data marketplaces, fostering innovation without fear.

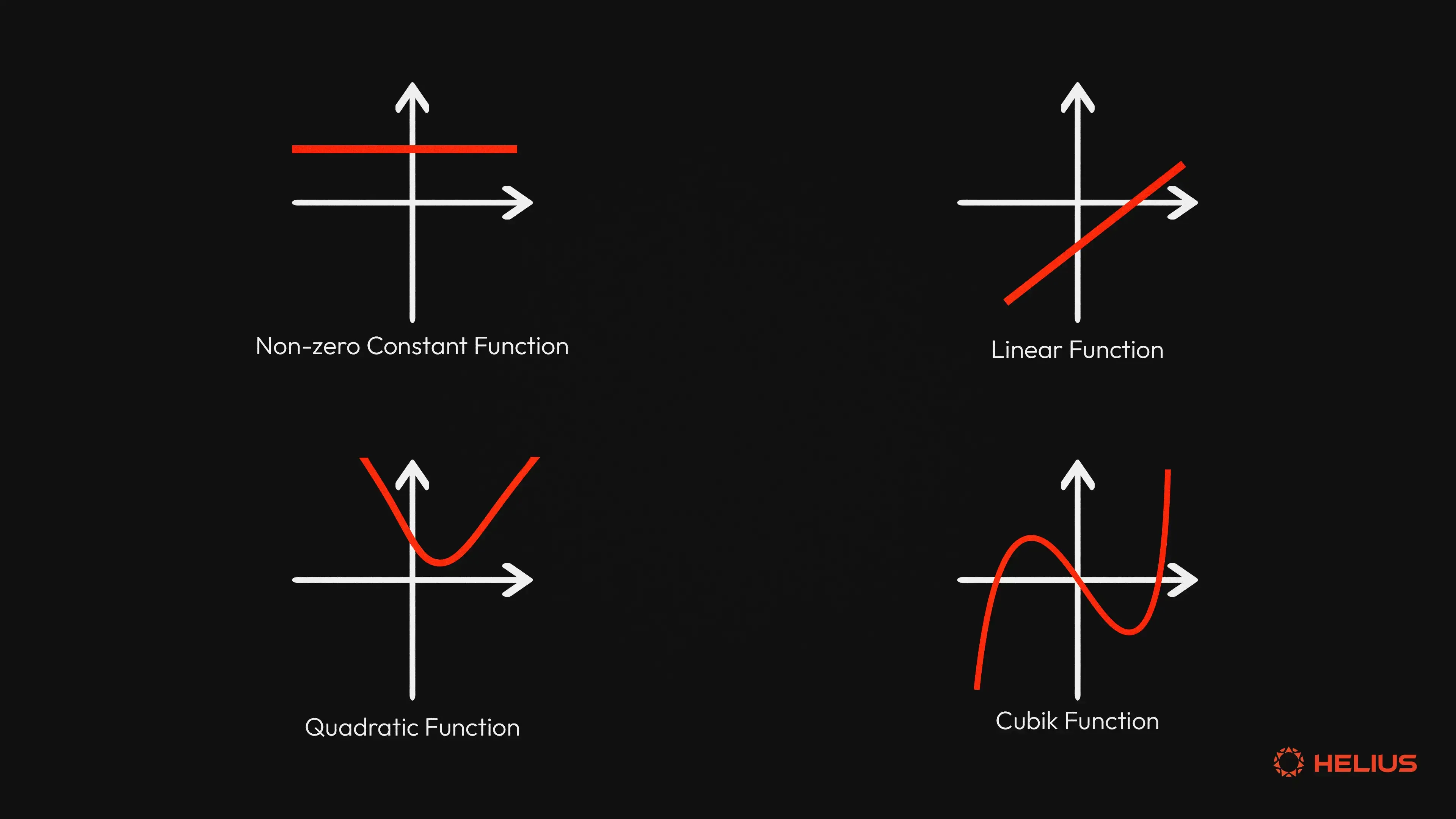

Take AIMultiple’s use cases: ZKPs slash breach risks in auth systems, a blueprint for training pipelines. zkrollups.io addresses the privacy paradox by separating unregulated privacy from compliant privacy, per LexieCrypto assessments. In enterprise stacks, this means regulatory AI provenance baked in, no trade-offs.
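For a feel of what ZK authentication looks like under the hood, here is a toy Schnorr-style proof of knowledge in Python, made non-interactive with the Fiat-Shamir transform. It is a sketch under loud assumptions: the modulus `P`, base `G`, and function names are chosen for illustration only, the parameters are far too weak for real use, and none of this is taken from the sources above. Production systems use standardized elliptic-curve groups and audited libraries.

```python
import hashlib
import secrets

# Toy parameters (assumptions for illustration, NOT secure choices).
P = 2**127 - 1   # a Mersenne prime used as the modulus
ORDER = P - 1    # order of the multiplicative group mod P
G = 3            # illustrative base element


def challenge(public_key: int, commitment: int, context: bytes) -> int:
    # Fiat-Shamir: derive the challenge by hashing the public transcript.
    data = f"{G}|{P}|{public_key}|{commitment}|".encode() + context
    return int.from_bytes(hashlib.sha256(data).digest(), "big") % ORDER


def keygen() -> tuple[int, int]:
    secret = secrets.randbelow(ORDER - 1) + 1
    return secret, pow(G, secret, P)


def prove(secret: int, context: bytes) -> tuple[int, int]:
    """Prove knowledge of `secret` for public key G^secret without sending it."""
    public_key = pow(G, secret, P)
    nonce = secrets.randbelow(ORDER - 1) + 1     # fresh per proof, never reused
    commitment = pow(G, nonce, P)
    c = challenge(public_key, commitment, context)
    response = (nonce + c * secret) % ORDER
    return commitment, response


def verify(public_key: int, context: bytes, proof: tuple[int, int]) -> bool:
    commitment, response = proof
    c = challenge(public_key, commitment, context)
    # Accept when G^response == commitment * public_key^c (mod P).
    return pow(G, response, P) == (commitment * pow(public_key, c, P)) % P


secret, public_key = keygen()
proof = prove(secret, b"login:session-123")      # hypothetical context string
print(verify(public_key, b"login:session-123", proof))
```

The verifier only ever sees the public key, the commitment, and the response, and the random nonce masks the secret in that response. The same pattern, proving a statement about a secret without shipping the secret, is what training pipeline attestations aim for at much larger scale.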

Frameworks That Turn ZK Theory into Deployment Reality

Now, the real juice: practical tools. The DP-RTFL framework blends local differential privacy with ZK integrity proofs for federated learning, ideal for finance’s credit assessments. arXiv papers detail how it delivers verifiable guarantees on sensitive data. Pair that with privacy-preserving cloud architectures that combine federated learning, differential privacy, and ZK compliance proofs for multi-cloud training. Cryptographic policy enforcement? Check. These aren’t lab toys; they’re powering secure AI deployments in production.
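The local differential privacy half of that recipe can be sketched in a few lines of Python. To be clear, this is not the DP-RTFL implementation: it is a generic Laplace mechanism applied to a clipped model update, with hypothetical function names and parameter values. The salted hash commitment at the end is only a stand-in for the ZK integrity proof, which a real system would produce with a proving framework.

```python
import hashlib
import json
import random
import secrets


def clip_l1(update: list[float], bound: float) -> list[float]:
    """Scale the update down so its L1 norm is at most `bound`."""
    norm = sum(abs(x) for x in update)
    if norm <= bound:
        return list(update)
    return [x * bound / norm for x in update]


def privatize(update: list[float], bound: float, epsilon: float, rng) -> list[float]:
    """Laplace mechanism applied on the client, before anything leaves the device.

    Any two clipped updates differ by at most 2 * bound in L1 norm, so
    per-coordinate Laplace noise with scale 2 * bound / epsilon is used.
    """
    scale = 2 * bound / epsilon
    clipped = clip_l1(update, bound)
    # The difference of two exponentials with mean `scale` is Laplace(0, scale).
    return [x + rng.expovariate(1 / scale) - rng.expovariate(1 / scale)
            for x in clipped]


def commit(update: list[float], salt: bytes) -> str:
    # Placeholder for an integrity proof: binds the client to this exact update.
    return hashlib.sha256(salt + json.dumps(update).encode()).hexdigest()


def open_commitment(commitment: str, update: list[float], salt: bytes) -> bool:
    return secrets.compare_digest(commitment, commit(update, salt))


# Hypothetical client-side flow for one training round.
rng = random.SystemRandom()                  # OS-backed randomness for the noise
raw_update = [0.8, -1.7, 0.05]               # made-up gradient values
noisy_update = privatize(raw_update, bound=1.0, epsilon=0.5, rng=rng)
salt = secrets.token_bytes(16)
commitment = commit(noisy_update, salt)
print(open_commitment(commitment, noisy_update, salt))
```

Smaller `epsilon` values mean more noise and stronger privacy, at the cost of model accuracy. Floating-point noise sampling has known subtleties, so a production deployment would likely rely on a vetted differential privacy library instead of hand-rolled sampling.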

zkVerify takes it further, verifying aspects of a model such as data integrity without revealing secrets. As enterprises scale AI, these integrations ensure compliance isn’t a bottleneck but a superpower. Check out this guide on ZKPs for verifiable AI outputs to see the mechanics in action. The shift is seismic: ZKPs aren’t optional; they’re the compliance currency of 2026 AI.