ZK Proofs Verify AI Training Data Origins Without Revealing Datasets

AI models devour datasets like sharks in a feeding frenzy, but proving where that data came from without spilling the guts? That’s the knife-edge challenge ripping through the industry. Enter ZK proofs for training data: zero-knowledge proofs that slam the door on leaks while still screaming ‘trust me, it’s legit.’ No more blind faith in black-box models; we’re talking ironclad AI data provenance that regulators and devs crave.

Picture this: healthcare giants training LLMs on patient records. One wrong move, and HIPAA nightmares erupt. ZK proofs flip the script. They let you verify a model slurped certified data without exposing a single byte. It’s aggressive privacy warfare, and it’s here now.

ZKPROV Ignites the Provenance Revolution

ZKPROV isn’t some lab toy; it’s a cryptographic beast from Harvard researchers, posted on arXiv. This framework binds your training datasets, model parameters, and even responses into a zero-knowledge proof cocktail. Attach it to LLM outputs, and boom: users can confirm your model trained on the right stuff without peeking under the hood.
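What does “binding” look like at the byte level? The sketch below covers only the commitment layer, assuming salted SHA-256 commitments; it is not ZKPROV’s actual construction, and `provenance_record` and `verify_opening` are hypothetical names. The public record can ride along with a model response. In a real deployment, a zero-knowledge proof would show the commitments are consistent, so nobody ever hands over the salts or the raw data.

```python
import hashlib
import hmac
import json
import secrets


def commit(data: bytes, salt: bytes) -> str:
    """Salted SHA-256 commitment: the random salt hides the data,
    and collision resistance binds the committer to it."""
    return hashlib.sha256(salt + data).hexdigest()


def verify_opening(commitment: str, data: bytes, salt: bytes) -> bool:
    """Check that (data, salt) opens the commitment (constant-time compare)."""
    return hmac.compare_digest(commit(data, salt), commitment)


def provenance_record(dataset: list[str], params: list[float], response: str):
    """Hypothetical helper: returns (public record, private openings)."""
    dataset_salt = secrets.token_bytes(32)
    model_salt = secrets.token_bytes(32)
    public = {
        "dataset_commitment": commit(json.dumps(dataset).encode(), dataset_salt),
        "model_commitment": commit(json.dumps(params).encode(), model_salt),
        # The response hash ties this record to one specific model output.
        "response_hash": hashlib.sha256(response.encode()).hexdigest(),
    }
    private = {"dataset_salt": dataset_salt, "model_salt": model_salt}
    return public, private


public, private = provenance_record(["record-1", "record-2"], [0.5, -1.25], "Diagnosis: benign")
print(json.dumps(public, indent=2))
```

Only the public dictionary leaves the building; the salts stay with the model owner, which is exactly the secret a ZK layer lets them keep.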

What’s savage? Sublinear scaling means proof generation and verification costs grow more slowly than model size instead of exploding with it. For 8B-parameter behemoths, end-to-end overhead clocks in under 3.3 seconds. That’s not theory; that’s battlefield-ready. ZKPROV carves out zero-knowledge model verification by laser-focusing on dataset origins, not just compute correctness. Other verifiable ML tools chase execution fidelity; ZKPROV owns the data lineage war.
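“Sublinear” has a precise meaning worth pinning down. If f(n) is the proving or verification cost as a function of model size n, sublinear means f grows strictly slower than n. The standard definition is below; the exact bound ZKPROV achieves is stated in its paper and is not reproduced here, so the examples are generic.

```latex
f(n) \in o(n) \iff \lim_{n \to \infty} \frac{f(n)}{n} = 0,
\qquad \text{for example } f(n) = c\,\sqrt{n} \ \text{ or } \ f(n) = c\,\log_2 n .
```

Under f(n) = c·√n, for instance, growing a model 100× raises the cost only 10×.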

Crushing Compliance in Regulated Arenas

Regulated sectors like healthcare aren’t playing nice. They demand proof your AI didn’t train on pirated or biased slop. ZK-backed dataset licensing enters as the enforcer: ZK proofs attest to licensed, ethical data origins without revealing trade secrets. No more lawsuits over shadowy sourcing; just verifiable attestations you can put in front of an auditor or a court.
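One common building block for attesting “this record is in the licensed set” is a Merkle tree: the licensor publishes only the root, and each record gets a short authentication path. Here is a minimal sketch, assuming SHA-256 and hypothetical helper names. A plain Merkle inclusion proof is not zero-knowledge on its own (it reveals the leaf and sibling hashes), so a ZK licensing scheme would run this check inside the proof circuit rather than in the open.

```python
import hashlib


def h(data: bytes) -> bytes:
    return hashlib.sha256(data).digest()


def leaf_hash(record: bytes) -> bytes:
    # 0x00 / 0x01 prefixes domain-separate leaves from internal nodes.
    return h(b"\x00" + record)


def node_hash(left: bytes, right: bytes) -> bytes:
    return h(b"\x01" + left + right)


def build_levels(records: list[bytes]) -> list[list[bytes]]:
    """All tree levels, leaves first; an odd last node is paired with itself."""
    level = [leaf_hash(r) for r in records]
    levels = [level]
    while len(level) > 1:
        padded = level + [level[-1]] if len(level) % 2 else level
        level = [node_hash(padded[i], padded[i + 1]) for i in range(0, len(padded), 2)]
        levels.append(level)
    return levels


def merkle_root(records: list[bytes]) -> bytes:
    return build_levels(records)[-1][0]


def inclusion_proof(records: list[bytes], index: int) -> list[tuple[bytes, bool]]:
    """Sibling path from leaf `index` to the root: (sibling_hash, sibling_is_left)."""
    path = []
    for level in build_levels(records)[:-1]:
        sib = index ^ 1
        sibling = level[sib] if sib < len(level) else level[index]  # self-pairing
        path.append((sibling, sib < index))
        index //= 2
    return path


def verify_inclusion(root: bytes, record: bytes, path: list[tuple[bytes, bool]]) -> bool:
    acc = leaf_hash(record)
    for sibling, sibling_is_left in path:
        acc = node_hash(sibling, acc) if sibling_is_left else node_hash(acc, sibling)
    return acc == root


licensed = [b"licensed-a", b"licensed-b", b"licensed-c", b"licensed-d", b"licensed-e"]
root = merkle_root(licensed)
print(verify_inclusion(root, b"licensed-c", inclusion_proof(licensed, 2)))
```

The path for a record holds ⌈log₂ n⌉ hashes, so a five-record set needs three and a billion-record set needs about thirty: a concrete taste of sublinear verification.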

ZK Proofs’ Killer AI Wins

- Privacy Shield: ZKPROV locks down sensitive datasets – verify LLM training origins without spilling secrets, perfect for healthcare.
- Lightning Verification: ZKPROV proofs generate and verify with minimal overhead – fast, practical for real-world AI blasts.
- Automated Licensing: Prove data compliance instantly – no more licensing headaches, ZKPs handle the heavy lifting.
- Decentralized Markets Boost: ZKPs supercharge data markets of the kind Phemex envisions – secure trades, explosive growth.
- Scalable AI Origins: zkVerify & ZKPoT enable massive verifiable training – trust at scale, no data leaks.

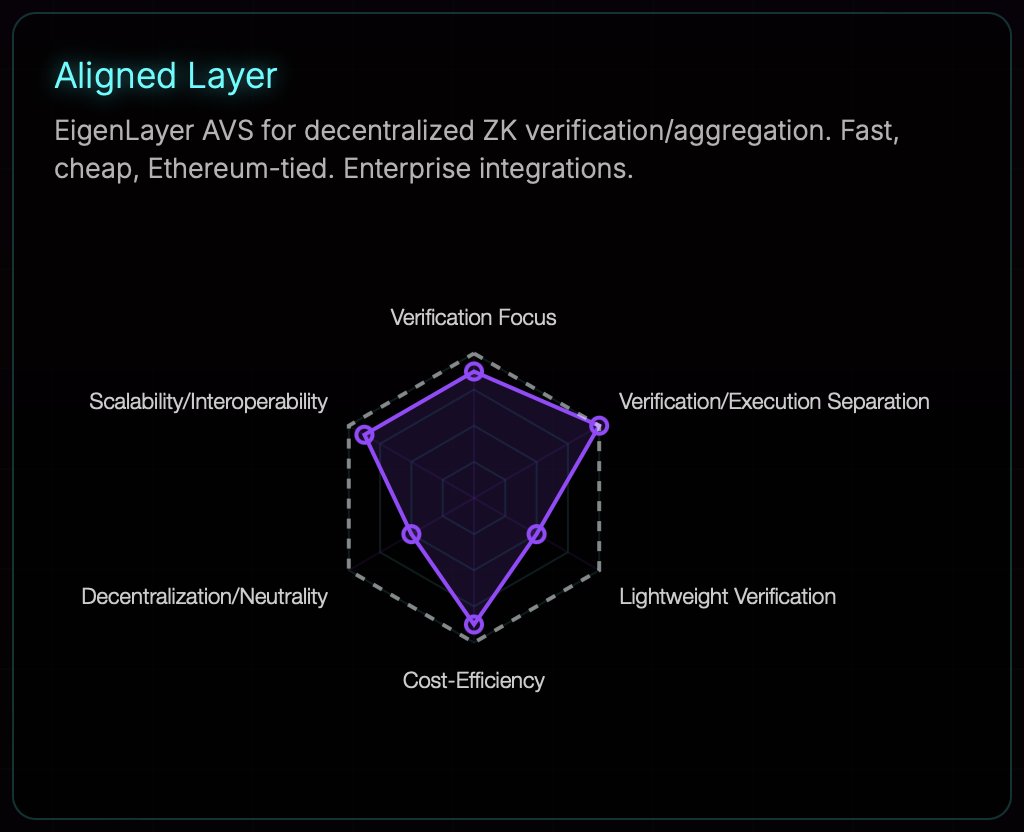

Take zkVerify’s platform: it weaves ZKPs into private training and secure inference. Prove model fairness and provenance without baring proprietary guts. This isn’t fluffy ethics; it’s a moat against competitors and watchdogs. Platforms like these turbocharge trust, letting enterprises deploy AI that screams compliance.

Why Traditional Audits Are Dead Weight

Forget audits that demand full dataset dumps. They’re slow, costly, and leak like sieves. ZK proofs obliterate that nonsense with mathematical certainty. Prover shows truth; verifier nods without seeing the sausage-making. In decentralized data marketplaces, this unlocks secure AI training. Sellers license data; buyers verify usage sans exposure. Innovation explodes because verifiable AI origins grease the wheels.
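The prover/verifier dance is easiest to see in the classic Schnorr proof of knowledge, made non-interactive with the Fiat-Shamir heuristic: the prover convinces the verifier it knows a secret x with y = G^x mod P, without revealing x. This is a textbook toy, not the SNARK machinery ZKPROV or zkPoT actually use, and the tiny group parameters below are deliberately insecure.

```python
import hashlib
import secrets

# Toy group: safe prime P = 2Q + 1 with Q prime; G = 4 (a quadratic residue)
# generates the subgroup of prime order Q. Far too small for real security.
P, Q, G = 2039, 1019, 4


def challenge(*values: int) -> int:
    """Fiat-Shamir: derive the verifier's challenge by hashing the transcript."""
    data = b"|".join(str(v).encode() for v in values)
    return int.from_bytes(hashlib.sha256(data).digest(), "big") % Q


def keygen() -> tuple[int, int]:
    x = secrets.randbelow(Q - 1) + 1  # secret witness in [1, Q-1]
    return x, pow(G, x, P)            # public statement y = G^x mod P


def prove(x: int, y: int) -> tuple[int, int]:
    r = secrets.randbelow(Q - 1) + 1  # fresh nonce; reusing it would leak x
    t = pow(G, r, P)                  # prover's commitment
    c = challenge(G, y, t)
    s = (r + c * x) % Q               # response, masked by the random r
    return t, s


def verify(y: int, proof: tuple[int, int]) -> bool:
    t, s = proof
    c = challenge(G, y, t)
    # G^s = G^(r + c*x) = t * y^c holds exactly when s was built from x.
    return pow(G, s, P) == (t * pow(y, c, P)) % P


x, y = keygen()
proof = prove(x, y)
print(verify(y, proof))
```

The verifier sees only y, t, and s; it learns that the prover knows x and nothing more, which is the same contract ZK training proofs offer at vastly larger scale.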

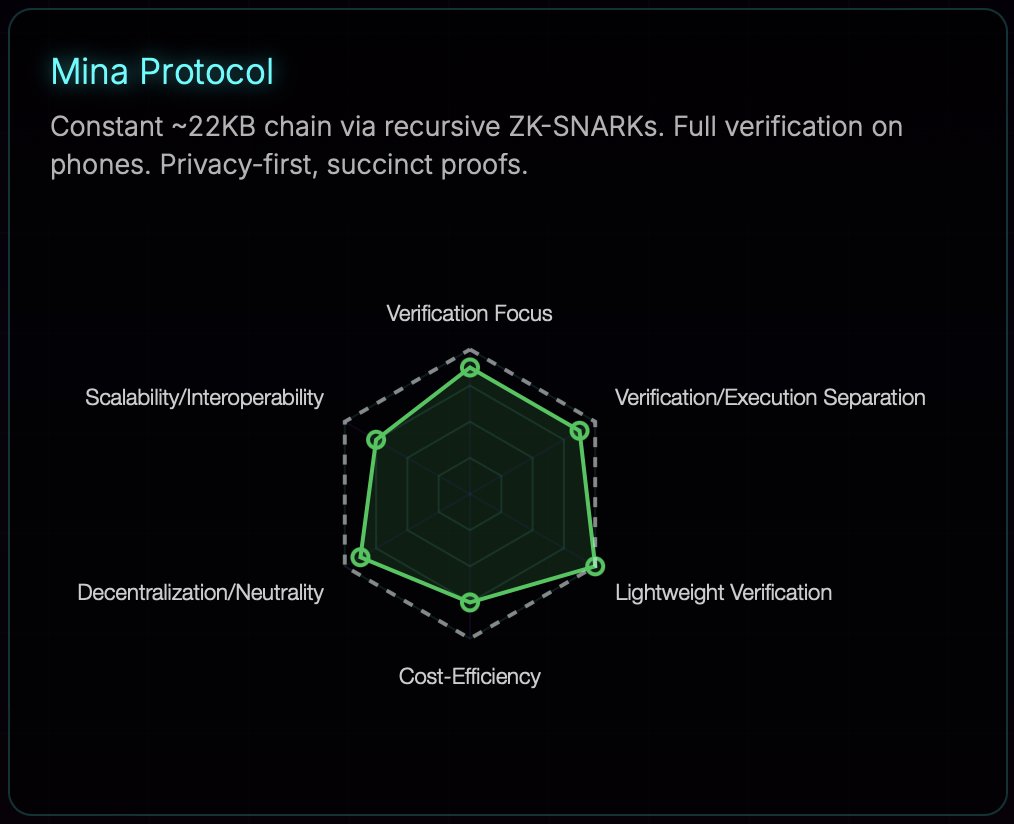

Deep neural nets get zkPoT: zero-knowledge proofs of training. Commit to a dataset, train the model, then prove the model came from that committed data without revealing the data. The Cryptology ePrint Archive paper nails it: pure power for proving correct training on committed data. Stack this with ZKPROV, and you’ve got a fortress.
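To make the zkPoT statement concrete: it asserts that there exist a dataset and salts such that the dataset matches its public commitment and training on it yields the committed weights. The sketch below checks that relation in the clear, assuming hash commitments and a toy deterministic trainer (`train` and `relation_holds` are hypothetical stand-ins). A real zkPoT proves the same relation inside a proof system, so the verifier never sees the data.

```python
import hashlib
import json
import secrets


def commit(obj, salt: bytes) -> str:
    """Salted SHA-256 commitment over a JSON encoding of obj."""
    return hashlib.sha256(salt + json.dumps(obj).encode()).hexdigest()


def train(dataset, epochs: int = 200, lr: float = 0.05) -> list[float]:
    """Deterministic full-batch gradient descent for y ≈ w*x + b.
    A stand-in for real training: same data in, bit-identical weights out."""
    w, b = 0.0, 0.0
    n = len(dataset)
    for _ in range(epochs):
        gw = sum(2 * (w * x + b - y) * x for x, y in dataset) / n
        gb = sum(2 * (w * x + b - y) for x, y in dataset) / n
        w, b = w - lr * gw, b - lr * gb
    return [w, b]


def relation_holds(c_data, c_model, dataset, data_salt, weights, model_salt) -> bool:
    """The statement a zkPoT would prove in zero knowledge, checked openly here."""
    return (commit(dataset, data_salt) == c_data
            and commit(weights, model_salt) == c_model
            and train(dataset) == weights)


data = [(0.0, 1.0), (1.0, 3.0), (2.0, 5.0)]  # private data, secretly y = 2x + 1
data_salt, model_salt = secrets.token_bytes(16), secrets.token_bytes(16)
weights = train(data)
c_data, c_model = commit(data, data_salt), commit(weights, model_salt)
print(relation_holds(c_data, c_model, data, data_salt, weights, model_salt))
```

Only `c_data` and `c_model` would be published; everything else is the witness a zkPoT keeps hidden.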

But don’t stop at theory – these tools are scaling to crush production workloads. Imagine deploying LLMs where every response carries a ZK proof tag, whispering verifiable AI origins to skeptical users. No more ‘trust us’ handwaves; pure, provable pedigree.

Scalability That Punches Above Its Weight

ZK proofs used to be gas-guzzlers, choking on massive models. Not anymore. ZKPROV’s sublinear magic keeps overhead razor-thin, even for those 8-billion-parameter monsters: generate a proof and verify it, all in under 3.3 seconds end-to-end. That’s the difference between lab curiosity and enterprise hammer. Stack in zkVerify’s toolkit, and you’ve got private training pipelines that spit out fair, provenance-proven models without a data drip.

Healthcare? Locked and loaded. Prove your diagnostic AI trained on certified, de-identified records without triggering privacy alarms. Finance follows suit: verify trading models slurped licensed market data, dodging insider-trading FUD. ZK-backed dataset licensing turns data deals into bulletproof contracts. Sellers get paid; buyers get assurance. No leaks, no disputes.

Versus the Old Guard: No Contest

Traditional audits? Dinosaur bones. They demand dataset peeks, invite hacks, and drag timelines into oblivion. ZK flips that script with cryptographic steel – prove training fidelity on committed data via zkPoT, origins via ZKPROV. Kudelski Security nails ZKML: verify without revealing the blueprint. Cloud Security Alliance echoes it for model integrity. Orochi Network pushes practical ML computations, blind to inputs.

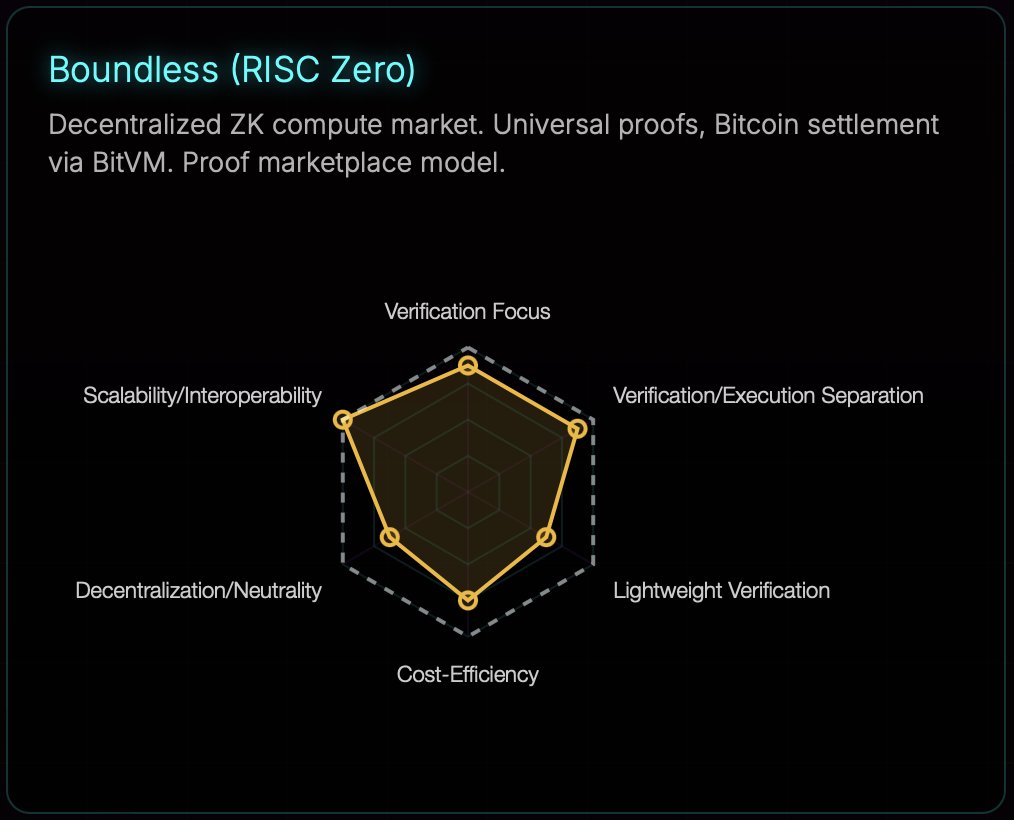

Phemex spots the goldmine: ZK supercharges AI data marketplaces. Decentralized bazaars where datasets trade freely, usage proven sans exposure. Ankita Singh on Medium breaks it down – prover convinces verifier sans secrets spilled. That’s the zero-knowledge gospel reshaping ML.

Challenges linger, sure. Proof generation is still hungrier than native training. But recursive SNARKs and hardware accelerators are inbound, slashing cycles. Harvard’s ZKPROV already threads the needle, unique in targeting provenance rather than mere compute checks. arXiv papers stack the evidence: it’s not if, but when ZK owns verifiable ML.

zkSync Technical Analysis Chart

Analysis by Market Analyst | Symbol: BINANCE:ZKUSDT | Interval: 1D | Drawings: 5

Technical Analysis Summary

The chart is annotated in a balanced technical style. A prominent downtrend line connects the October 2026 high near 0.0800 to the December 2026 low around 0.0040, highlighting the dominant bearish impulse, and a short-term uptrend line runs from the late December low to the early February consolidation to flag potential reversal signs. Horizontal lines mark key support (0.0040) and resistance (0.0200, 0.0400). A fib_retracement spans the major high to low, date_price_range rectangles mark the late-2026 consolidation zone, an arrow_mark_down flags the November breakdown, and an arrow_mark_up flags the December bounce. Callouts note volume divergence and a MACD bearish crossover, and text notes cover entry zones and risk assessment.
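The fib levels cited in this analysis follow a simple formula. Assuming the common convention of measuring a downtrend’s retracement upward from the low (level = low + ratio × (high − low)), here is a quick sketch using the chart’s quoted high and low; `fib_retracements` is a hypothetical helper, not a TradingView API.

```python
def fib_retracements(high: float, low: float,
                     ratios=(0.236, 0.382, 0.5, 0.618, 0.786)) -> dict[float, float]:
    """Retracement levels for a move down from `high` to `low`,
    measured upward from the low."""
    span = high - low
    return {r: low + r * span for r in ratios}


levels = fib_retracements(high=0.08, low=0.004)
for ratio, price in levels.items():
    print(f"{ratio:.3f} -> {price:.4f}")
```

With these inputs the 0.236 level lands near 0.0219, in line with the $0.02 resistance tagged as fib 0.236, and the 0.5 level at 0.042 sits close to the $0.04 mid-range resistance.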

Risk Assessment: medium

Analysis: Bearish structure, but oversold signals and ZK news tailwinds balance the risks; volatility remains high after the dump.

Market Analyst’s Recommendation: Scale in longs cautiously around support, target 0.02, stop 0.0035; monitor for breakout

Key Support & Resistance Levels

📈 Support Levels:

- $0.004 – Strong volume-supported bottom, multiple tests (strong)
- $0.006 – Intermediate support from prior lows (moderate)

📉 Resistance Levels:

- $0.02 – Key resistance from November lows, fib 0.236 (strong)
- $0.04 – Mid-range resistance, prior swing high (moderate)

Trading Zones (medium risk tolerance)

🎯 Entry Zones:

- $0.005 – Consolidation bounce with volume pickup, aligns with strong support (medium risk)
- $0.006 – Break above minor uptrend for confirmation (low risk)

🚪 Exit Zones:

- $0.02 – Profit target at first major resistance/fib level (💰 profit target)
- $0.004 – Tight stop below absolute low for risk management (🛡️ stop loss)
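These zones imply a reward-to-risk ratio worth checking. The analysis quotes two stops ($0.0035 in the recommendation, $0.004 in the exit zones), so the sketch below, a hypothetical helper for a long setup entered at $0.005 with a $0.02 target, computes both: roughly 15:1 with the $0.004 stop and 10:1 with the $0.0035 stop.

```python
def risk_reward(entry: float, target: float, stop: float) -> float:
    """Reward-to-risk ratio for a long position (hypothetical helper)."""
    if not stop < entry < target:
        raise ValueError("a long setup needs stop < entry < target")
    return (target - entry) / (entry - stop)


for stop in (0.004, 0.0035):
    ratio = risk_reward(entry=0.005, target=0.02, stop=stop)
    print(f"stop {stop}: {ratio:.1f}:1")
```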

Technical Indicators Analysis

📊 Volume Analysis:

Pattern: climax then drying up

High volume on dump, low on consolidation signals seller exhaustion

📈 MACD Analysis:

Signal: bearish divergence at lows

MACD histogram contracting, potential bullish cross ahead


Disclaimer: This technical analysis by Market Analyst is for educational purposes only and should not be considered as financial advice.

Trading involves risk, and you should always do your own research before making investment decisions.

Past performance does not guarantee future results. The analysis reflects the author’s personal methodology and risk tolerance (medium).

Bottom line? ZK proofs for training data aren’t hype; they’re the enforcement layer AI begged for. Enterprises wielding them lock in competitive edges: compliant, private, trusted models that outpace rivals shackled by opacity. Devs, grab these frameworks. Regulators, sharpen your pencils. The provenance revolution roars forward, datasets secured, innovation unchained. Crypto markets wait for no one; neither does AI trust.