ZK Proofs for Verifying AI Training Data Provenance Without Data Exposure

In the shadowy underbelly of AI development, where models feast on petabytes of data, a critical vulnerability lurks: how do we trust the origins of that training data without laying it bare for all to see? Enter zero-knowledge proofs for AI training data provenance, a cryptographic sleight-of-hand that verifies integrity while shrouding sensitive details. This isn’t just technical wizardry; it’s a strategic imperative for enterprises that need privacy-preserving dataset provenance in regulated realms like healthcare and finance. As AI models scale to billions of parameters, proving model provenance in zero knowledge, without exposing the data, becomes the linchpin for compliance and trust.

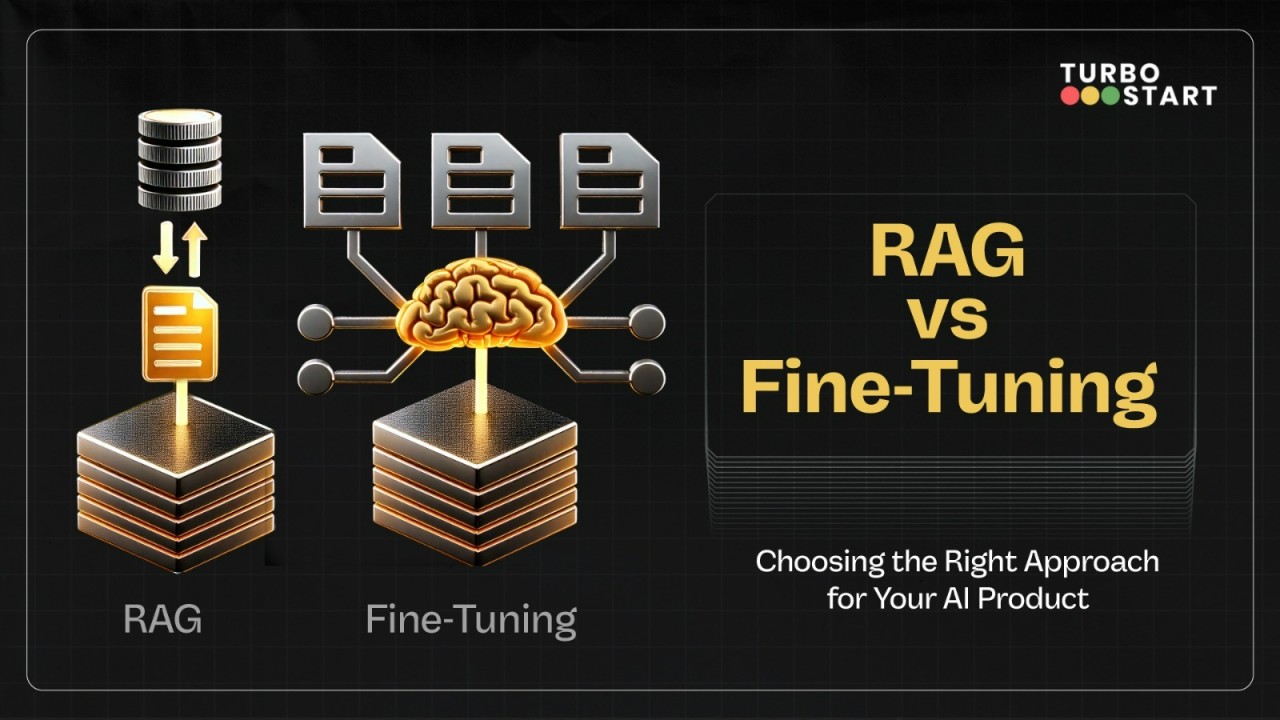

Consider the stakes. Regulators demand auditable data lineages, yet exposing datasets risks intellectual property theft or privacy breaches. Traditional audits falter here because they demand full disclosure. ZK proofs for AI training data flip the script: the prover demonstrates exact adherence to training specs, dataset commitments, and licensing without revealing a single datum. This balance of verifiability and secrecy is reshaping zkML model integrity, turning opaque black boxes into transparent fortresses.

Unraveling the Privacy Paradox in AI Pipelines

AI pipelines brim with friction points. Data sourcing, preprocessing, and fine-tuning often occur in silos, breeding distrust among collaborators. Without robust verification, accusations of poisoned datasets or unlicensed content erode confidence. Zero-knowledge verification of training data emerges as the antidote, enabling succinct proofs that cryptographically bind inputs to outputs. Recent momentum underscores this shift; frameworks now report scaling to LLMs with billions of parameters, with proof overheads measured in seconds.
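To make "dataset commitment" concrete, here is a minimal sketch of a Merkle-tree commitment over dataset records, using only Python's standard library. It is an illustration under stated assumptions, not the construction used by any framework discussed here: the function names (`merkle_root`, `inclusion_proof`, `verify_inclusion`) and the sample records are hypothetical. A plain inclusion proof also reveals the record it opens, so it is not zero-knowledge on its own; in a ZK system the same path check would typically run inside the proof circuit so the record stays hidden.

```python
import hashlib


def _hash(data: bytes) -> bytes:
    return hashlib.sha256(data).digest()


def _leaf_level(records: list[bytes]) -> list[bytes]:
    if not records:
        raise ValueError("dataset must contain at least one record")
    # Domain-separate leaves (0x00) from internal nodes (0x01).
    return [_hash(b"\x00" + record) for record in records]


def _parent_level(level: list[bytes]) -> list[bytes]:
    # Sketch-level padding: duplicate the last node when the level is odd.
    if len(level) % 2:
        level = level + [level[-1]]
    return [_hash(b"\x01" + level[i] + level[i + 1]) for i in range(0, len(level), 2)]


def merkle_root(records: list[bytes]) -> bytes:
    """Commit to the whole dataset with a single 32-byte root."""
    level = _leaf_level(records)
    while len(level) > 1:
        level = _parent_level(level)
    return level[0]


def inclusion_proof(records: list[bytes], index: int) -> list[tuple[bytes, bool]]:
    """Return (sibling_hash, sibling_is_left) pairs from leaf to root."""
    level = _leaf_level(records)
    path = []
    while len(level) > 1:
        if len(level) % 2:
            level = level + [level[-1]]
        sibling = index ^ 1
        path.append((level[sibling], sibling < index))
        level = _parent_level(level)
        index //= 2
    return path


def verify_inclusion(record: bytes, path: list[tuple[bytes, bool]], root: bytes) -> bool:
    """Recompute the root from one record and its sibling path."""
    node = _hash(b"\x00" + record)
    for sibling, sibling_is_left in path:
        node = _hash(b"\x01" + sibling + node) if sibling_is_left else _hash(b"\x01" + node + sibling)
    return node == root


# Hypothetical records standing in for training examples.
records = [b"record-0", b"record-1", b"record-2"]
root = merkle_root(records)
proof = inclusion_proof(records, 2)
print(verify_inclusion(records[2], proof, root))
```

The root is the only value a verifier needs to hold; the proof size grows with the logarithm of the dataset size, which is part of why commitments of this shape are a common building block for succinct provenance proofs.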

Strategically, this empowers decentralized AI ecosystems. Developers can attest to ethical sourcing, while verifiers confirm compliance sans scrutiny. In finance, where models predict market cycles akin to my own bond analyses, tainted data could cascade into catastrophic trades. ZK tech insulates against such risks, fostering collaborative innovation without the paranoia of data espionage.

Breakthrough ZK Frameworks for AI Provenance

- ZKPROV: Cryptographic framework by Namazi et al. (June 2025) binding datasets, model parameters, and responses with ZK proofs for verifiable LLM training without disclosure. Sublinear proof scaling, <3.3s overhead for 8B models. (arXiv)
- zkFL-Health: Architecture by Sharma et al. (Dec 2025) combining federated learning, ZKPs, and TEEs for privacy-preserving medical AI training. Verifiable aggregation with on-chain commitments. (arXiv)
- Verifiable Fine-Tuning: Protocol by Akgul et al. (Oct 2025) with succinct ZK proofs for models from public init under auditable quotas, samplers, and data commitments. No index leakage. (arXiv)

ZKPROV: Efficiency Meets Ironclad Privacy

At the vanguard stands ZKPROV, unveiled by Namazi et al. in June 2025. This framework ingeniously ties training datasets, model parameters, and even responses together through zero-knowledge proofs attached to LLM outputs. Imagine deploying a model where every inference carries an embedded attestation: “Trained solely on certified, licensed data, as specified.” No dataset leaks, no parameter peeks; just sublinear proof generation scaling gracefully to 8-billion-parameter behemoths, with end-to-end overhead under 3.3 seconds. Practical? Undeniably so.

Its opinionated design prioritizes real-world deployment. By committing to manifests that encapsulate data sources, licenses, and epoch quotas, ZKPROV enforces policies with zero violations. No more finger-pointing over index leakage or preprocessing sleights; cryptographic bindings ensure replayable, auditable fidelity. For strategic minds, this means monetizing models confidently, licensing compliance baked in from genesis.
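A manifest commitment of the kind described above can be sketched in a few lines. This is a hedged illustration, not any framework's actual format: the manifest fields (`sources`, `licenses`, `epoch_quota`), the dataset names, and the helper names are all assumptions. The sketch uses a salted SHA-256 hash over canonical JSON, which binds the publisher to one manifest while revealing nothing until the manifest and salt are opened; a real ZK deployment would prove statements about the committed manifest without opening it.

```python
import hashlib
import hmac
import json
import secrets


def commit_manifest(manifest: dict, salt: bytes) -> str:
    """Salted SHA-256 commitment to a canonically serialized manifest."""
    # sort_keys plus fixed separators make the serialization independent
    # of the order in which keys were inserted.
    canonical = json.dumps(manifest, sort_keys=True, separators=(",", ":")).encode("utf-8")
    return hashlib.sha256(salt + canonical).hexdigest()


def open_commitment(commitment: str, manifest: dict, salt: bytes) -> bool:
    """Check that (manifest, salt) opens the published commitment."""
    return hmac.compare_digest(commitment, commit_manifest(manifest, salt))


# Hypothetical manifest; every field name and value here is illustrative.
manifest = {
    "sources": ["dataset-a", "dataset-b"],
    "licenses": {"dataset-a": "CC-BY-4.0", "dataset-b": "commercial-license"},
    "epoch_quota": {"dataset-a": 3, "dataset-b": 1},
}
salt = secrets.token_bytes(32)
commitment = commit_manifest(manifest, salt)

# Any change to the declared quota breaks the opening.
tampered = {**manifest, "epoch_quota": {"dataset-a": 30, "dataset-b": 1}}
print(open_commitment(commitment, manifest, salt))
print(open_commitment(commitment, tampered, salt))
```

The random salt matters: without it, anyone could guess plausible manifests and test them against the published hash.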

Federated Frontiers: zkFL-Health’s Collaborative Edge

Building on this, zkFL-Health by Sharma et al. in December 2025 weaves federated learning with ZK proofs and TEEs for medical AI. Clients train locally, commit updates; aggregators in TEEs compute globals and issue ZK proofs affirming exact input usage and rule adherence. Verifiers chain commitments on-ledger, birthing immutable audits without trusting intermediaries. This hybrid obliterates single points of failure, vital for healthcare where patient data sanctity reigns supreme.
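The commit, aggregate, and chain flow described above can be sketched without any cryptography beyond hashing. This is a simplified illustration and not the zkFL-Health implementation: the function names, the plain averaging rule, and the ledger layout are assumptions, and there is no TEE or ZK proof here. In the real architecture, the proof would attest that the global update was computed from exactly the committed client updates, so the verifier never sees them.

```python
import hashlib
import struct


def commit_update(client_id: str, update: list[float], nonce: bytes) -> str:
    """Hash commitment to one client's local update (hypothetical encoding)."""
    payload = client_id.encode("utf-8") + nonce + struct.pack(f"<{len(update)}d", *update)
    return hashlib.sha256(payload).hexdigest()


def aggregate(updates: list[list[float]]) -> list[float]:
    """Plain coordinate-wise average, standing in for the aggregation rule."""
    count = len(updates)
    return [sum(column) / count for column in zip(*updates)]


def append_round(ledger: list[str], client_commitments: list[str], global_commitment: str) -> str:
    """Chain this round's commitments onto the previous ledger entry."""
    previous = ledger[-1] if ledger else "0" * 64
    record = previous + "".join(sorted(client_commitments)) + global_commitment
    entry = hashlib.sha256(record.encode("utf-8")).hexdigest()
    ledger.append(entry)
    return entry


# Two hypothetical clients, each with a two-parameter update.
updates = {"clinic-1": [1.0, 2.0], "clinic-2": [3.0, 4.0]}
nonces = {"clinic-1": b"n1" * 16, "clinic-2": b"n2" * 16}

client_commitments = [commit_update(cid, upd, nonces[cid]) for cid, upd in updates.items()]
global_update = aggregate(list(updates.values()))
global_commitment = commit_update("aggregator", global_update, b"ag" * 16)

ledger: list[str] = []
first_entry = append_round(ledger, client_commitments, global_commitment)
second_entry = append_round(ledger, client_commitments, global_commitment)
print(global_update)
```

Because each entry hashes the previous one, rewriting an earlier round would change every later entry, which is what gives the on-ledger trail its audit value.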

Nuance lies in its verifier-centric trust model. No host peeks at updates; proofs suffice. Scalability shines too, supporting distributed teams without bandwidth hogs or privacy trade-offs. Pair this with the verifiable fine-tuning protocol from Akgul et al., and we glimpse a future where zero-knowledge training data verification is routine: quotas enforced, samplers hidden, utility pristine.

The Verifiable Fine-Tuning protocol, crafted by Akgul et al. in October 2025, elevates this paradigm further. It generates succinct proofs affirming a model’s evolution from a public base through a declared training program and dataset commitments. Manifests bind sources, preprocessing, licenses, and epoch quotas; verifiable samplers enable replayable batches with index privacy. Proof performance is reported as practical, with quotas enforced without violations, utility intact, and no index leakage. This isn’t mere verification; it’s policy-as-code, where deviations trigger cryptographic alarms before deployment.
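The idea of a replayable sampler with a quota check can be sketched as follows. This is an assumption-laden illustration, not the protocol from Akgul et al.: the HMAC-based index derivation, the per-example usage cap, and all function names are hypothetical. The sampler commits to a secret seed, derives every batch deterministically from it, and anyone holding the seed can replay the batches; in a ZK setting the seed would stay private and a proof would show that the indices were derived this way.

```python
import hashlib
import hmac
from collections import Counter


def commit_seed(seed: bytes) -> str:
    """Public commitment to the sampler's secret seed."""
    return hashlib.sha256(b"sampler-seed:" + seed).hexdigest()


def batch_indices(seed: bytes, step: int, batch_size: int, dataset_size: int) -> list[int]:
    """Derive one batch deterministically from (seed, step).

    Samples with replacement; the modulo introduces a negligible bias
    that is acceptable for a sketch.
    """
    indices = []
    for slot in range(batch_size):
        message = step.to_bytes(8, "big") + slot.to_bytes(8, "big")
        digest = hmac.new(seed, message, hashlib.sha256).digest()
        indices.append(int.from_bytes(digest, "big") % dataset_size)
    return indices


def within_quota(seed: bytes, steps: int, batch_size: int,
                 dataset_size: int, max_uses_per_example: int) -> bool:
    """Replay every batch and check a hypothetical per-example usage cap."""
    usage = Counter()
    for step in range(steps):
        usage.update(batch_indices(seed, step, batch_size, dataset_size))
    return all(count <= max_uses_per_example for count in usage.values())


seed = b"\x07" * 32  # fixed only so the example is reproducible
seed_commitment = commit_seed(seed)

first_batch = batch_indices(seed, step=0, batch_size=4, dataset_size=100)
replayed_batch = batch_indices(seed, step=0, batch_size=4, dataset_size=100)
print(first_batch == replayed_batch)
print(within_quota(seed, steps=10, batch_size=4, dataset_size=100, max_uses_per_example=40))
```

Determinism is the point of the design: an auditor who is later given the seed can regenerate every batch and confirm both the commitment and the quota, with no reliance on the trainer's logs.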

Comparison of Leading ZK Frameworks for AI Training Data Provenance

| Framework | Key Innovation | Scalability | Ideal Sector |
|---|---|---|---|
| ZKPROV | Binding training datasets, model parameters, and responses with ZK proofs attached to LLM outputs | Sublinear scaling, end-to-end overhead <3.3s for 8B params | General LLMs |
| zkFL-Health | Federated aggregation in TEEs with ZK proofs for verifiable collaborative training, on-chain audit trail | Distributed clients, succinct proofs with on-chain audits | Healthcare collaboration |
| Verifiable Fine-Tuning | Auditable samplers and quotas with commitments binding data sources, preprocessing, licenses, and verifiable sampling | Tight budgets with zero violations, practical proof performance | Regulated fine-tuning |

These frameworks converge on a shared truth: ZK proofs for AI training data aren’t a luxury but a baseline for trustworthy AI. Yet hurdles persist. Proof generation demands hefty compute, though recursion and hardware acceleration are narrowing this gap. Interoperability lags; standards for commitments and circuits remain nascent. Still, projects like Inference Labs’ JSTprove and zkml-blueprints signal maturation, offering blueprints for ML circuits that democratize access.

Strategic Imperatives for Enterprises

From a long-term investor’s vantage, akin to tracing bond yields through cycles, ZK-based AI model provenance mirrors macro diligence. Tainted data echoes subprime ripples; verifiable chains preempt defaults. Enterprises must prioritize: audit pipelines now, integrate ZK at ingest. Platforms like ZKModelProofs.com stand ready, generating attestations for datasets without exposure and ensuring licensing compliance behind privacy’s veil. This strategic pivot unlocks federated marketplaces, where models trade on proven pedigrees, not blind faith.

Privacy-preserving dataset provenance extends beyond tech; it’s governance. Regulated sectors crave it most. Healthcare keeps patient insights siloed via zkFL-Health; finance fortifies quants against shadow data. zkML model integrity proofs bring cascading benefits: reduced liability, accelerated audits, novel revenue from certified models. Skeptics decry overheads, but reported overheads of a few seconds for billion-parameter models undercut that objection. History rhymes; just as cycles reward the prepared, AI’s provenance wars favor the cryptographically astute.

Roadblocks and the Path Forward

Computational intensity tops concerns. Proving intricate ML ops strains current SNARKs, yet ZIP’s precise inference proofs and ARPA’s scalable visions hint at breakthroughs. Ecosystem fragmentation? GitHub repos like zkml-blueprints unify formulations, fostering reusable circuits. Decentralized plays such as ZkAGI on Solana blend ZK with federated learning, portending blockchain-native AI. My take: bet on hybrids. TEEs augment ZK where proofs falter, and recursion slims proof sizes. By 2027, expect routine attestations, commoditized like SSL certs today.

Strategic deployment demands nuance. Start small: pilot on fine-tuning subsets. Measure overhead against breach costs; ROI tilts ZK-ward. Collaborate via open proofs; verify peers without data swaps. In commodities, I track provenance from mine to market; AI demands analogous rigor. ZKModelProofs.com pioneers this, empowering developers with tools for secure, verifiable ML futures. As proofs mature, trust scales, innovation surges, and black boxes yield to crystalline ledgers. The cycle turns toward transparency, privacy intact.