In the realm of federated AI learning, where models train across decentralized datasets without centralizing sensitive information, ensuring verifiable training data provenance emerges as a critical safeguard. Organizations grapple with proving that models incorporate licensed, ethical data sources amid rising regulatory scrutiny and privacy demands. Zero-knowledge proofs (ZKPs) offer a pathway to attest data origins and computation integrity without exposing underlying details, yet their integration into federated systems remains nascent and fraught with efficiency hurdles.

Navigating Trust Gaps in Decentralized Model Training

Federated learning works across data silos rather than merging them: edge devices compute local updates, and a central server aggregates those updates into a global model. This approach shines in sectors like healthcare, where patient records stay put, unlocking insights for disease detection without compromising confidentiality. However, without robust verification, malicious actors could poison datasets or misrepresent contributions, eroding trust. Traditional audits falter here; they either demand raw data access, violating privacy, or rely on unproven self-reports.
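To make the aggregation step concrete, here is a minimal sketch in plain Python. It assumes sample-weighted averaging in the style of FedAvg, which the article does not specify; the function name `fed_avg` and the two-hospital example are illustrative only.

```python
from typing import List, Sequence


def fed_avg(client_weights: Sequence[Sequence[float]],
            client_sizes: Sequence[int]) -> List[float]:
    """Average client parameter vectors, weighted by local sample counts."""
    if not client_weights or len(client_weights) != len(client_sizes):
        raise ValueError("need one sample count per client update")
    total = sum(client_sizes)
    if total <= 0:
        raise ValueError("total sample count must be positive")
    dim = len(client_weights[0])
    if any(len(w) != dim for w in client_weights):
        raise ValueError("all client updates must have the same length")
    # Each coordinate of the global model is the sample-weighted mean
    # of that coordinate across clients.
    return [
        sum(w[i] * n for w, n in zip(client_weights, client_sizes)) / total
        for i in range(dim)
    ]


# Two hypothetical hospitals; the second holds three times as many records.
global_model = fed_avg([[1.0, 2.0], [3.0, 4.0]], [1, 3])
print(global_model)  # [2.5, 3.5]
```

Nothing in this step proves that a client's vector came from honest training on the data it claims, which is exactly the gap provenance proofs aim to close.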

Enter ZK proofs for training data provenance. These cryptographic primitives allow participants to prove adherence to protocols - from dataset licensing compliance to faithful gradient computations - while revealing nothing extraneous. Recent research underscores this potential: frameworks binding datasets to model outputs via zk-SNARKs balance privacy with auditability, as seen in ZKPROV's efficient dataset-model linkages.
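A provenance proof needs something short and binding to refer to. The sketch below shows one common building block, a Merkle root over dataset records, using only Python's `hashlib`. It is an assumption-laden illustration, not ZKPROV's construction: the helper names are hypothetical, and a Merkle tree alone is not zero-knowledge. A ZK system would prove statements about the committed root without revealing records or paths.

```python
import hashlib
from typing import List, Tuple


def _h(data: bytes) -> bytes:
    return hashlib.sha256(data).digest()


def _leaves(records: List[bytes]) -> List[bytes]:
    if not records:
        raise ValueError("dataset must contain at least one record")
    # The 0x00 prefix keeps leaf hashes distinct from inner-node hashes.
    return [_h(b"\x00" + r) for r in records]


def _parent_level(level: List[bytes]) -> List[bytes]:
    if len(level) % 2:
        level = level + [level[-1]]  # duplicate last node on odd levels
    return [_h(b"\x01" + level[i] + level[i + 1])
            for i in range(0, len(level), 2)]


def merkle_root(records: List[bytes]) -> bytes:
    """Short commitment that binds the whole dataset."""
    level = _leaves(records)
    while len(level) > 1:
        level = _parent_level(level)
    return level[0]


def merkle_proof(records: List[bytes], index: int) -> List[Tuple[bytes, bool]]:
    """Sibling hashes from leaf to root; the flag is True if the sibling is on the left."""
    level = _leaves(records)
    if not 0 <= index < len(level):
        raise IndexError("record index out of range")
    proof = []
    while len(level) > 1:
        if len(level) % 2:
            level = level + [level[-1]]
        sibling = index ^ 1
        proof.append((level[sibling], sibling < index))
        level = _parent_level(level)
        index //= 2
    return proof


def verify_membership(record: bytes, proof: List[Tuple[bytes, bool]],
                      root: bytes) -> bool:
    node = _h(b"\x00" + record)
    for sibling, sibling_is_left in proof:
        pair = sibling + node if sibling_is_left else node + sibling
        node = _h(b"\x01" + pair)
    return node == root
```

A data owner could publish the root alongside a license record; later claims about the training set can then be tied to that fixed value.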

Yet caution prevails. Scalability lags; proof generation can bottleneck resource-constrained devices. In healthcare applications, where federated setups process electronic health records across hospitals, even marginal delays amplify risks. A Stack Overflow discussion highlights how FL centralizes insights without moving data, but provenance proofs must become efficient enough to keep pace with that workflow.

Spotlight on VerifBFL: Blockchain-Verified Federated Integrity

The VerifBFL framework stands out by marrying zk-SNARKs with incrementally verifiable computation (IVC) atop blockchain. Local training and aggregation yield proofs verified on-chain in under 0.6 seconds, with generation times below 81 seconds for training and 2 seconds for aggregation. Differential privacy bolsters defenses against inference attacks, making it viable for high-stakes environments.
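The differential privacy layer can be pictured with the standard clip-then-add-Gaussian-noise step. This is a generic sketch, not VerifBFL's published mechanism: the function name, the clipping norm, and the noise multiplier are assumptions chosen for illustration, and the privacy accounting that turns these values into an (epsilon, delta) guarantee is omitted.

```python
import math
import random
from typing import List, Sequence


def clip_and_noise(update: Sequence[float], clip_norm: float,
                   noise_multiplier: float, rng: random.Random) -> List[float]:
    """Clip an update to a maximum L2 norm, then add Gaussian noise."""
    if clip_norm <= 0:
        raise ValueError("clip_norm must be positive")
    if noise_multiplier < 0:
        raise ValueError("noise_multiplier must be non-negative")
    norm = math.sqrt(sum(x * x for x in update))
    # Scale down only when the update exceeds the clipping norm.
    scale = min(1.0, clip_norm / norm) if norm > 0 else 1.0
    # Noise standard deviation is proportional to the clipping norm.
    sigma = noise_multiplier * clip_norm
    return [x * scale + rng.gauss(0.0, sigma) for x in update]


rng = random.Random(42)  # seeded only so the example is repeatable
noisy_update = clip_and_noise([3.0, 4.0], clip_norm=1.0,
                              noise_multiplier=0.5, rng=rng)
print(noisy_update)
```

In a verifiable pipeline, the proof would also need to cover this step, so that a node cannot skip clipping or noise and still pass verification.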

This setup addresses core vulnerabilities: a rogue aggregator tampering with updates or nodes fabricating local results. By attesting every step in zero knowledge, VerifBFL makes federated learning verifiable end to end, which is essential for collaborative AI on distributed ledgers. The reported performance metrics suggest practicality, but real-world deployment demands testing against adversarial networks.

Blockchain integration, as in architectures combining ZKPs, FL, and DLT with decentralized identifiers, extends tamper-proof audit trails. Quorum-based systems further secure model sharing, aligning with ethical AI mandates from bodies like the NIH, where TEEs and FL train on de-identified data sans central hubs.
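The tamper-evidence that a ledger contributes can be sketched with a simple hash chain, where each audit entry commits to the one before it. This is a single-process illustration under stated assumptions: the field names (`round`, `model_digest`, `dataset_root`) are hypothetical, and a real DLT adds consensus, signatures, and identity on top.

```python
import hashlib
import json
from typing import Any, Dict, List

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _entry_hash(prev_hash: str, payload: Dict[str, Any]) -> str:
    # sort_keys gives a canonical serialization, so the hash is repeatable.
    body = json.dumps({"prev": prev_hash, "payload": payload}, sort_keys=True)
    return hashlib.sha256(body.encode("utf-8")).hexdigest()


def append_entry(log: List[Dict[str, Any]], payload: Dict[str, Any]) -> None:
    """Append an audit entry that commits to the previous entry's hash."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    log.append({
        "prev": prev_hash,
        "payload": payload,
        "hash": _entry_hash(prev_hash, payload),
    })


def verify_log(log: List[Dict[str, Any]]) -> bool:
    """Recompute every link; any edited or reordered entry breaks the chain."""
    prev_hash = GENESIS
    for entry in log:
        if entry["prev"] != prev_hash:
            return False
        if entry["hash"] != _entry_hash(entry["prev"], entry["payload"]):
            return False
        prev_hash = entry["hash"]
    return True


audit_log: List[Dict[str, Any]] = []
append_entry(audit_log, {"round": 1, "model_digest": "ab12", "dataset_root": "cd34"})
append_entry(audit_log, {"round": 2, "model_digest": "ef56", "dataset_root": "cd34"})
print(verify_log(audit_log))  # True
```

Only digests go into the log, so the trail records which model version was tied to which dataset commitment without holding the data itself.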

zkFL and ProxyZKP: Tackling Malicious Aggregators and Local Computations

zkFL confronts the aggregator trust dilemma head-on. In each round, the aggregator produces a proof that it followed the aggregation protocol faithfully, and clients need not expose their local models for it to be checked. The blockchain handles these proofs in zero knowledge, so verifiers learn nothing about local or aggregated models, and training speed is largely preserved while security improves. This resonates in privacy-sensitive domains; IEEE experiments on breast cancer detection datasets hit 91.2% accuracy with 35% reduced leakage using PyTorch and zk-SNARKs.
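One ingredient that makes aggregation checkable without revealing individual updates is an additively homomorphic commitment. The sketch below uses Pedersen-style commitments with deliberately tiny toy parameters; it illustrates the idea only and should not be read as zkFL's actual protocol. The constants `P`, `Q`, `G`, `H` and the integer-encoded updates are assumptions. A real deployment would use a standard elliptic-curve group and prove the relation in zero knowledge instead of opening the aggregate.

```python
import secrets

# Toy group, far too small for real use: P = 2*Q + 1 with P and Q prime.
P = 2039
Q = 1019
G = 4  # 2^2 mod P, generates the subgroup of order Q
H = 9  # 3^2 mod P; a real setup needs log_G(H) to be unknown to everyone


def commit(value: int, blinding: int) -> int:
    """Pedersen-style commitment: G^value * H^blinding mod P."""
    return (pow(G, value % Q, P) * pow(H, blinding % Q, P)) % P


# Three clients commit to integer-encoded updates (hypothetical values).
updates = [12, 7, 30]
blindings = [secrets.randbelow(Q) for _ in updates]
commitments = [commit(u, r) for u, r in zip(updates, blindings)]

# The aggregator publishes the claimed sum and the combined blinding factor.
claimed_sum = sum(updates)
combined_blinding = sum(blindings) % Q

# Anyone can multiply the public commitments and compare.
product = 1
for c in commitments:
    product = (product * c) % P

aggregate_ok = product == commit(claimed_sum, combined_blinding)
print(aggregate_ok)  # True
```

Because the product of commitments equals a commitment to the sum, an aggregator that reports a different total cannot open the product consistently, yet no single client's update is disclosed.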

ProxyZKP innovates further with polynomial proxy models. Nodes train private models alongside proxies, exchanging gradients for decentralized verification. ZKPs on multivariate polynomials slash proof times 30-50% versus zk-SNARKs or Bulletproofs, per Nature studies. Such efficiency moves ZK-based verification of AI training data toward mainstream adoption and makes verifiable dataset licensing more practical in federated setups.

Zcash Technical Analysis Chart

Analysis by Mary Taylor | Symbol: BINANCE:ZECUSDT | Interval: 1D | Drawings: 9

Technical Analysis Summary

To annotate this ZECUSDT 1D chart in my conservative style, begin with a prominent downtrend line from the August 2026 peak at ~95 USDT to the recent low near 40 USDT, using 'trend_line' tool in red for caution. Add horizontal_lines at key support 40 USDT (strong, green) and resistance 55 USDT (weak, red). Mark the explosive rally phase with a date_price_range rectangle from May to August 2026 highlighting accumulation to distribution. Use fib_retracement from peak to recent low for potential retracement levels. Place callouts on declining volume and MACD bearish crossover. Arrow_mark_down at breakdown post-peak. Text box with 'Preserve capital: Wait for support confirmation' in top right. Vertical_line at major news catalyst inferred around July 2026 spike. All lines extended right for projection, emphasizing risk management.

Risk Assessment: medium

Analysis: High volatility from hype-driven rally and correction; supports in play but no clear reversal yet. Crypto's tail risks amplified despite Zcash tech tailwinds.

Mary Taylor's Recommendation: Observe only—no new positions until 40 USDT holds with confirming volume/MACD. Preserve capital first; consider 1-2% portfolio allocation max for hybrid defensive play.

Key Support & Resistance Levels

📈 Support Levels:

- $40.2 - Recent swing low with volume cluster; critical for bulls strong

- $32.5 - Deeper retracement to rally base; secondary defense moderate

📉 Resistance Levels:

- $55.1 - Near-term overhead from December high; weak breakout potential weak

- $72 - 61.8% fib retracement of rally; major hurdle moderate

Trading Zones (low risk tolerance)

🎯 Entry Zones:

- $41.5 - Dip buy at strong support if volume increases and MACD turns up; aligns with low-risk tolerance low risk

- $52 - Break above weak resistance for confirmation long; conservative pullback entry medium risk

🚪 Exit Zones:

- $55 - Initial profit target at resistance 💰 profit target

- $38 - Tight stop below support to preserve capital 🛡️ stop loss

- $70 - Stretch target at fib level if momentum builds 💰 profit target

Technical Indicators Analysis

📊 Volume Analysis:

Pattern: Declining post-spike, confirming distribution

High volume on upside exhaustion, low on pullback—classic bearish signal

📈 MACD Analysis:

Signal: Bearish crossover with divergence at peak

MACD line below signal, histogram contracting—momentum fading

Applied TradingView Drawing Utilities

This chart analysis utilizes the following professional drawing tools:

Disclaimer: This technical analysis by Mary Taylor is for educational purposes only and should not be considered as financial advice. Trading involves risk, and you should always do your own research before making investment decisions. Past performance does not guarantee future results. The analysis reflects the author's personal methodology and risk tolerance (low).

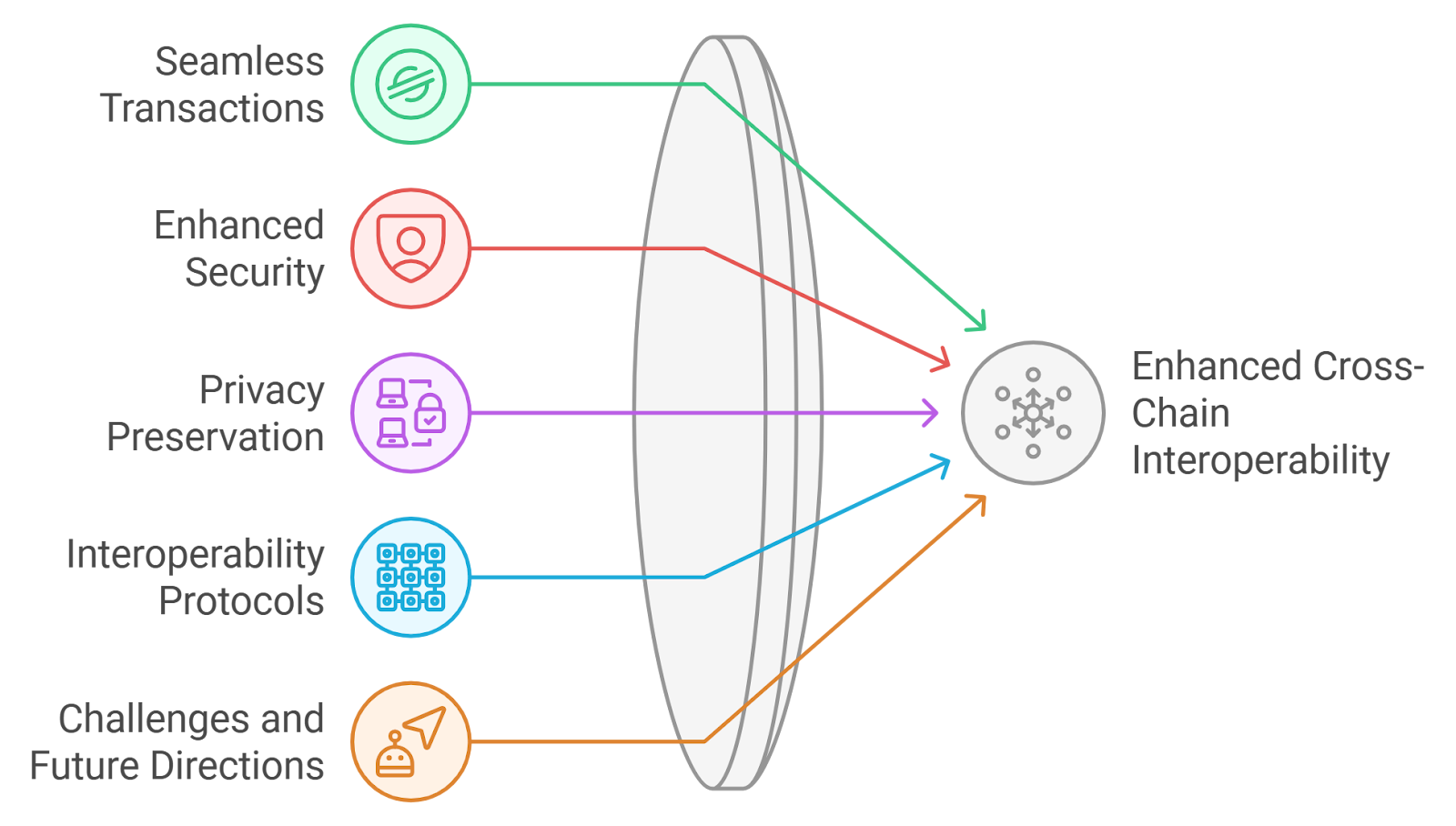

These advancements signal momentum, yet interoperability gaps persist. End-to-end ZKP-FL frameworks shared on ResearchGate promise data privacy and aggregation trust, but standardization lags. In healthcare's transformative 2025 landscape, per Lifebit, privacy-preserving proofs of AI training could redefine collaborative model building, provided risks like computational overhead are mitigated.

Healthcare exemplifies the stakes. Federated learning unites hospital databases - electronic health records spanning cohorts - without shuttling raw data, as Lifebit envisions for 2025. Yet provenance voids persist: how to certify that a breast cancer model drew from licensed, de-identified sources across providers? ZKPs bridge this, with NIH-backed ethical AI leveraging TEEs alongside FL to train distributively. Still, proof generation must scale with these workloads, lest overhead stifle adoption.

Comparative Edge: Frameworks in the Arena

Dissecting these systems reveals trade-offs worth scrutiny. VerifBFL excels in blockchain-anchored verifiability, its IVC streamlining incremental proofs for ongoing audits. zkFL shifts power to clients, neutralizing aggregator malice through model-agnostic checks. ProxyZKP's proxy elegance suits edge devices, where polynomial commitments outpace traditional SNARKs in proof velocity.

Comparison of ZK-FL Frameworks

| Framework | Proof Gen Time | Key Features | Use Case Strengths | Limitations |
|---|---|---|---|---|
| VerifBFL | Local training: <81s; aggregation: <2s; on-chain verification: <0.6s | zk-SNARKs + IVC, blockchain integration, differential privacy (DP) | Healthcare privacy, auditability, blockchain-based FL integrity | Proof generation overhead for very large models |
| zkFL | Minimal impact on training speed (exact times unspecified) | ZKPs for malicious aggregator verification, blockchain handling of proofs | Secure central aggregation, enhanced privacy against untrusted servers | Blockchain dependency may limit scalability |
| ProxyZKP | 30-50% faster than zk-SNARKs/Bulletproofs | Polynomial proxy models, decentralized gradient exchange, computation integrity proofs | Efficiency in local training verification, healthcare data provenance | Proxy model maintenance, potential accuracy trade-offs |

Architectures described in ACM and ScienceDirect publications layer DLT with decentralized identifiers, fortifying identity in multi-party computations. Quorum-FL hybrids published by MDPI build audit trails for model provenance that are tamper-proof while the data itself stays in place. These hybrids strengthen ZK-based training data provenance, yet interoperability remains elusive - disparate proof formats hinder cross-framework trust.

A measure of opinion tempers the enthusiasm. The efficiency gains impress, and ProxyZKP's 30-50% speed-up is a boon for battery-strapped mobiles in remote diagnostics. But real deployments come with caveats. Inference attacks linger despite differential privacy; model inversion remains a specter. Resource asymmetry plagues FL - powerful servers versus IoT endpoints - demanding adaptive ZK schemes. Standardization bodies must intervene, lest siloed proofs fragment the ecosystem.

Beyond Proofs: Regulatory and Enterprise Imperatives

Regulators are circling: regimes such as the EU AI Act and HIPAA push toward traceable data lineage. Verifiable dataset licensing via ZKPs could help preempt fines by attesting compliance without disclosure. Enterprises eye this for supply chain AI, where federated suppliers prove dataset integrity. The end-to-end ZKP-FL frameworks on ResearchGate integrate privacy with aggregation fidelity, a blueprint for production.

Challenges mount, however. Quantum threats loom over elliptic curve-based SNARKs; post-quantum ZK variants trail. Computational footprints - even optimized - strain GPUs at scale. VerifBFL's local proofs, at up to 81 seconds each, suit batch jobs, not real-time inference. zkFL's blockchain toll adds latency, though layer-2 scaling beckons.
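A back-of-the-envelope budget shows why these figures point to batch workloads. The sketch uses the reported upper bounds for VerifBFL (81 s local proof, 2 s aggregation proof, 0.6 s on-chain verification). It assumes clients prove in parallel and the stages run back to back; the number of on-chain verifications per round and the round count are hypothetical parameters, since the article does not state them.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ProofBudget:
    """Upper bounds in seconds, taken from the figures reported for VerifBFL."""
    local_proof_s: float = 81.0
    aggregation_proof_s: float = 2.0
    onchain_verify_s: float = 0.6

    def per_round_s(self, verifications: int = 1) -> float:
        # Assumes clients prove in parallel and stages run sequentially.
        if verifications < 0:
            raise ValueError("verifications must be non-negative")
        return (self.local_proof_s + self.aggregation_proof_s
                + verifications * self.onchain_verify_s)

    def total_s(self, rounds: int, verifications: int = 1) -> float:
        if rounds < 0:
            raise ValueError("rounds must be non-negative")
        return rounds * self.per_round_s(verifications)


budget = ProofBudget()
rounds = 100  # hypothetical training length
print(f"per round: {budget.per_round_s():.1f} s")
print(f"{rounds} rounds: {budget.total_s(rounds) / 60:.1f} min")
```

Under these assumptions the proof overhead is over a minute per round, tolerable for overnight training but far outside interactive latency.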

ProxyZKP hints at maturity, its Nature-reported speeds bringing privacy-preserving training proofs within reach of consumer hardware. Pair such proofs with the PyTorch toolkits from the IEEE trials, where federated breast cancer detectors reached 91.2% accuracy with leakage curbed by 35%, and clinical use starts to look plausible.

Provenance isn't optional; it's the ledger of trust in decentralized intelligence.

Forward paths crystallize around hybrid vigor: zk-SNARKs for succinctness, Bulletproofs for range proofs on data stats. Incremental verification, as in VerifBFL, suits lifelong learning cycles. Blockchain oracles could federate off-chain proofs on-chain, balancing cost. For healthcare's data deluge - personalized medicine, early detection - these tools forge resilient models.

Investors and developers, proceed measuredly. Pilot in sandboxes, benchmark against baselines. The promise of zero-knowledge proofs for federated learning gleams, but execution demands vigilance. The same discipline applies throughout: verify origins rigorously, aggregate judiciously, thrive securely.
